AI Governance

Karen Hao Calls for Bottoms-Up Governance. For Australian Enterprises, That Starts With You.

Karen Hao does not believe AI companies will voluntarily reform or that governments will move fast enough. Her call is for bottoms-up governance: enterprises, civil society and communities choosing to hold AI to higher standards. For Australian organisations that build genuine governance capability now, that is not a burden. It is the most significant competitive differentiator available.

April 15, 2026

7 min read

Karen Hao closed her Diary Of A CEO appearance on a note that is more hopeful than the headline of the episode suggests. Yes, AI companies are manipulating definitions. Yes, they exploit labour and natural resources on a massive scale. Yes, they capture the researchers who would otherwise hold them to account. And no, she does not believe they will voluntarily reform.

But her response is not despair. It is a call for bottoms-up governance: enterprises, consumers, civil society organisations and the communities bearing the costs of AI development choosing to hold these companies to higher standards rather than waiting for regulation to mandate it. She draws an explicit parallel with the Bangladesh Accord, the international labour standards agreement for the fashion industry that emerged after devastating factory accidents, driven not by voluntary corporate action but by accumulated public and institutional pressure that made inaction untenable.

This is the final post in our series on what Karen Hao got right and what it means for Australian organisations. Part 1 established that AI governance cannot be outsourced to producers or regulators. Part 2 documented the vendor governance risks from AI's hidden supply chains. Part 3 addressed the shadow AI information asymmetry and why visibility is the foundational governance capability. This post examines what bottoms-up governance means in practice for Australian enterprises and why acting on it now creates a competitive advantage that compounds.

What Hao Means by Bottoms-Up Governance

Hao is explicit that she does not believe the change needed in the AI industry will come from AI companies voluntarily deciding to behave differently. The incentive structure does not support it. Competitive pressure from the race between companies does not support it. And the political capture of regulatory processes by AI industry actors makes top-down mandate difficult in the short term.

What she believes can work is collective pressure from the institutions and people affected by AI decisions. She cites the pattern of consumer and legal pressure already forcing AI companies to respond to mental health harms from AI companion products. She cites the fashion industry precedent. And she cites the potential of enterprise procurement: if major customers begin requiring governance standards as conditions of contract, AI companies could be forced to adopt those standards across their supply chains.

For Australian enterprises, the implication is concrete. Your organisation's decision about how to govern AI is not only an internal governance decision. It is also a signal to the AI industry about what standards the market will require. Organisations that require vendor transparency on training data provenance, that include governance maturity assessments in AI procurement, that contractually allocate accountability for AI-driven outcomes, are participating in the bottoms-up governance Hao is describing. They are contributing to the institutional pressure that makes AI industry reform feasible.

The Boardroom Moment Hao Has Created

An award-winning investigative journalist who spent seven years and more than 300 interviews documenting the AI industry went on one of the world's most-listened-to podcasts and told millions of people that AI companies are gaslighting the public, that the technology's foundations include hidden exploitation, and that the organisations deploying that technology bear the governance responsibility that the industry itself is failing to exercise.

That conversation is now circulating in boardrooms across Australia. The organisations that have already built AI governance capability will be in a position to tell their boards what they have done about it. The organisations that have not will be explaining why not.

The credibility of the Hao episode is significant. This is not a technology critic speculating about AI risks. This is a National Book Critics Circle Award winner, a Wall Street Journal foreign correspondent, a Pulitzer Center program director, speaking from seven years of primary research including 90 interviews with OpenAI insiders. The board question, when it comes, will carry weight commensurate with that credibility.

The Competitive Moat That Bottoms-Up Governance Builds

Governance built seriously and early creates four specific competitive advantages that matter to Australian enterprises operating in regulated sectors.

Procurement access. ISO 42001 certification is moving from nice-to-have to procurement requirement in Australian financial services and government supply chains. Organisations with certified AI management systems can demonstrate governance maturity that competitors without it cannot. The gap between those with and without certification will widen through 2026 and FY27 as procurement panels formalise their expectations.

Regulatory relationship. Australian regulators, APRA, ASIC and the OAIC, are clarifying their AI governance expectations through guidance, enforcement action and consultation. The organisations that have documented governance practices, genuine accountability structures and proactive engagement with regulators will have a qualitatively different relationship with those regulators than those that are reactive. The benefit of the doubt in an environment still clarifying its expectations accrues to organisations that have demonstrably invested in getting this right.

Customer trust. The Hao episode is changing what informed customers ask about the businesses they deal with. How does this organisation use AI to make decisions about me? How are those decisions governed? Who is accountable if something goes wrong? The organisations that can answer these questions with specificity and evidence are in a different category from those that cannot.

Talent and partnership access. The organisations taking AI governance seriously attract the technology, legal and risk professionals who want to work in environments that take ethics and accountability seriously. That talent pool is not large. The organisations that demonstrate genuine governance capability get disproportionate access to it, as well as to the research institutions, civil society organisations and industry bodies that are shaping the standards Hao is calling for.

What Taking Control Actually Looks Like

Hao's argument is that AI governance will not be handed to organisations by the companies whose technology they deploy. It has to be claimed. Built. Operated as an innate capability.

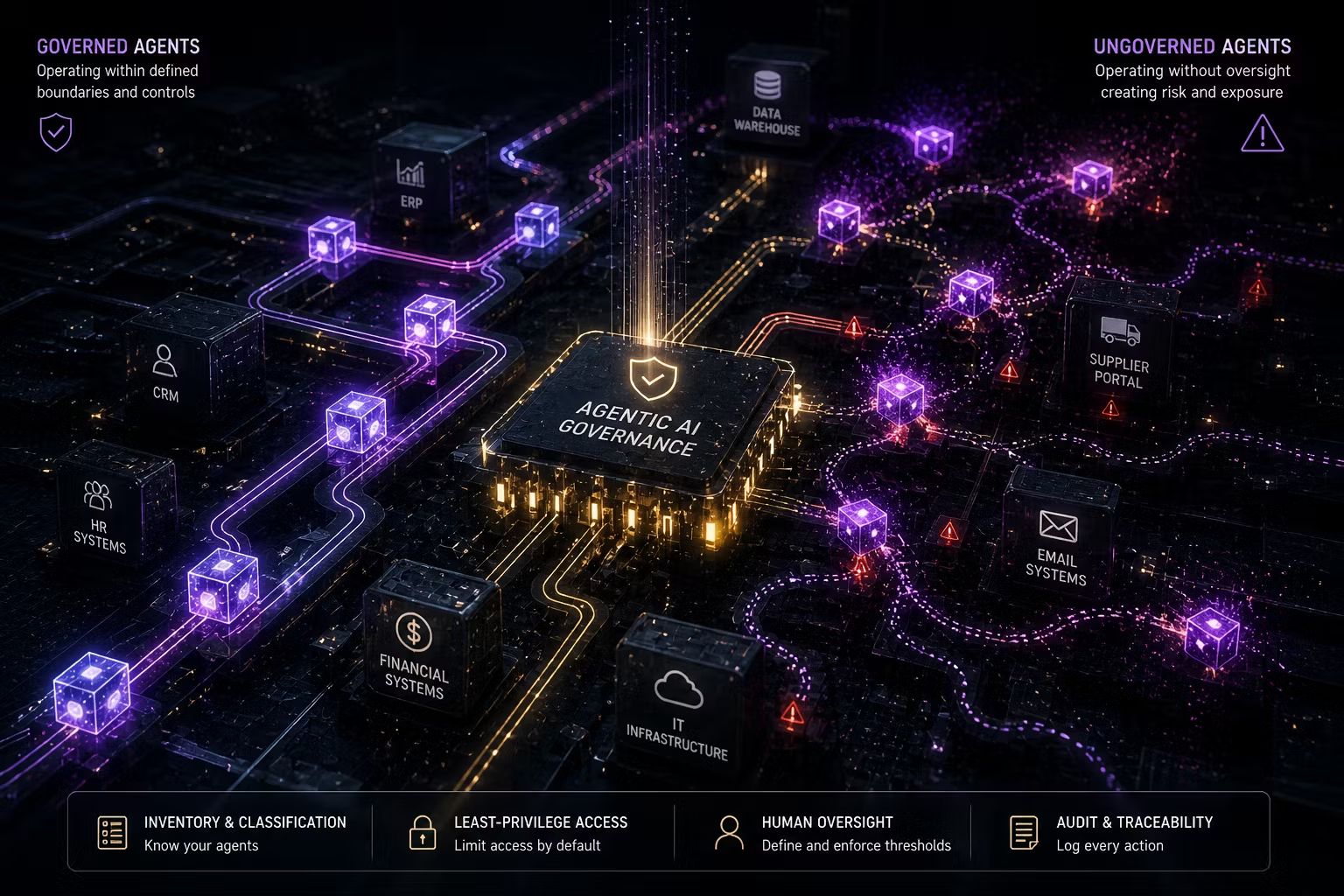

The practical architecture of that capability is well defined. An AI inventory that reflects what is actually running, not just what IT approved. A risk classification framework that determines what oversight each system requires before deployment. A structured intake process that intercepts new AI initiatives before they create exposure rather than after. Vendor governance that asks the provenance questions Hao's reporting makes necessary. Monitoring that converts governance from a paper exercise into something that actually manages the risks that matter.

And above all, a person at executive level who owns this agenda, with the authority to make decisions and the accountability to report outcomes to the board. The governance capacity starts with ownership. Without it, the processes are aspirational. With it, they become operational.
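To make that architecture concrete, here is a minimal sketch in Python of how an inventory record, a risk classification rule and an intake check could fit together. Everything in it is an assumption for illustration: the field names, the three risk tiers, the classification rules and the example systems are hypothetical, not a framework Hao or any standard prescribes. The real criteria are a decision for each organisation.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class RiskTier(Enum):
    # Hypothetical three-tier scale; real frameworks may use more levels.
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass
class AISystem:
    """One entry in the AI inventory (all fields are illustrative)."""
    name: str
    owner: str                      # accountable person or team; "" if none
    vendor: Optional[str]           # None for systems built in-house
    it_approved: bool               # False records shadow AI found in use
    affects_individuals: bool       # makes or informs decisions about people
    uses_personal_data: bool
    vendor_provenance_documented: bool = False
    monitoring_plan: bool = False


def classify(system: AISystem) -> RiskTier:
    """Assign a risk tier using simple, assumed rules."""
    if system.affects_individuals and system.uses_personal_data:
        return RiskTier.HIGH
    if (system.affects_individuals or system.uses_personal_data
            or not system.it_approved):
        return RiskTier.MEDIUM
    return RiskTier.LOW


def intake_blockers(system: AISystem) -> List[str]:
    """List what must be resolved before the system is deployed."""
    tier = classify(system)
    blockers = []
    if not system.owner:
        blockers.append("no accountable owner")
    if (tier is not RiskTier.LOW and system.vendor
            and not system.vendor_provenance_documented):
        blockers.append("vendor provenance not documented")
    if tier is RiskTier.HIGH and not system.monitoring_plan:
        blockers.append("no monitoring plan")
    return blockers


# Example inventory with one unapproved tool and one approved tool.
inventory = [
    AISystem(name="support-chatbot", owner="", vendor="ExampleVendor",
             it_approved=False, affects_individuals=True,
             uses_personal_data=True),
    AISystem(name="demand-forecast", owner="Finance", vendor=None,
             it_approved=True, affects_individuals=False,
             uses_personal_data=False),
]

# Systems that are running but were never approved by IT.
shadow_ai = [s.name for s in inventory if not s.it_approved]

for system in inventory:
    print(system.name, classify(system).value, intake_blockers(system))
```

The point of the sketch is the shape, not the rules. The inventory records what is actually running, including tools IT never approved. The classification decides how much oversight a system needs. The intake check turns that decision into a list of conditions to meet before deployment, starting with a named owner.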

None of this requires waiting for the AI industry to reform itself or for the Australian government to pass an AI Act. It requires your organisation to decide that the governance of AI is its responsibility, to build the capability to exercise that responsibility and to treat that capability as a source of competitive differentiation rather than a cost of compliance.

The Window Is Narrowing

The competitive advantage of building genuine AI governance capability is real, but it is not permanent. As regulatory expectations become more specific, as ISO 42001 certification becomes more widespread, as the Privacy Act ADM obligations come into force and as civil society pressure on AI governance practices intensifies, the governance floor will rise. The advantage will not belong to those who started later. It will belong to those who started now and have governance capability that is mature, operational and defensible.

The Karen Hao episode created a moment: a credibility event that puts the accountability deficit of AI companies on boardroom agendas in terms that are clear, evidenced and compelling. The organisations that use that moment to accelerate their governance programmes will look back at 2026 as the year they chose to take control of something that was moving fast and starting to matter enormously.

The organisations that treat it as confirmation that someone else is worried about this will find that the window closed while they were watching.

Key Takeaways

- Karen Hao calls for bottoms-up governance: enterprises, civil society and communities choosing to hold AI companies to higher standards rather than waiting for top-down mandate

- Enterprise procurement requirements are a specific bottoms-up governance mechanism: requiring vendor transparency, governance maturity and accountability allocation in AI contracts contributes to the institutional pressure Hao describes as the most realistic path to industry reform

- The Hao episode is a credibility event. An award-winning investigative journalist's account of the accountability deficit, backed by seven years of primary research, is now circulating in boardrooms. Organisations with governance capability will answer the board question differently

- Responsible AI governance builds measurable competitive advantage through procurement access, regulatory relationship, customer trust and talent attraction

- The practical architecture is well defined: AI inventory, risk classification, intake process, vendor governance, monitoring and executive ownership. None of it requires waiting for external mandate

- Treating AI governance as an innate organisational capability rather than a compliance project is the decision that will look decisive in retrospect

How Trusenta Can Help

AI Governance: Trusenta's AI Governance platform is the infrastructure that makes governance capability operational rather than aspirational. The use-case register, risk assessment workflows and portfolio tracking turn governance from intentions into documented, repeatable practices that produce defensible evidence when it is needed.

Compliance Management: The multi-framework compliance infrastructure that maps controls once across ISO 42001, NIST AI RMF and the Guidance for AI Adoption, generating audit-ready evidence across all frameworks simultaneously rather than treating each as a separate project.

Fractional AI Officer: For organisations that need a senior AI leader to drive governance as a strategic priority, Trusenta's Fractional AI Officer embeds accountable executive leadership into the organisation. The bottoms-up governance Hao calls for starts with someone in your organisation who owns the agenda and has the authority to act on it.

AI Governance Services: From AI Governance Foundations for organisations building from the ground up, to AI Governance Enterprise for complex multi-jurisdictional environments, Trusenta's governance services build the capability that makes the competitive advantages described in this post available in practice, not just in principle.

Conclusion

Karen Hao is not a pessimist. She documented the governance failures of the AI industry with extraordinary rigour, and her conclusion is not that the situation is hopeless. It is that the path forward requires enterprises, communities and institutions choosing to govern AI seriously rather than deferring to those who built it. For Australian organisations, that choice is available now. The capability to exercise it exists. The competitive case for doing so is compelling and the regulatory case is arriving. The question is whether your organisation will build this as a core organisational value before it is required or after it becomes obvious that it should have been built long before.

Author

Mark brings a rare blend of C-suite leadership and hands-on consulting experience to Trusenta. As a former SVP of Services, SVP of Business Operations, Managing Director and CIO, he brings a breadth of experience to his specialty: guiding organisations through AI strategy, governance and adoption, and bridging ambition with practical execution. His focus is on helping clients embed AI responsibly, at scale and in service of real business outcomes.

https://www.linkedin.com/in/consult-mmiller/