
Agentic AI Governance: Why Your AI Agent Strategy Needs a Governance Foundation Before It Needs More Agents

Gartner predicts 40% of enterprise applications will include task-specific AI agents by end of 2026. Most organisations deploying them have no governance framework designed for agents specifically. This post examines why agentic AI governance requires a different approach, what the four structural controls are and why the organisations scaling agents fastest are the ones that governed them first.

May 4, 2026


Something shifted in the past two weeks. The agentic AI conversation, which has been building through 2026, crossed a threshold. Microsoft launched Agent 365 on 1 May. A Fortune analysis published on 2 May warned that organisations deploying agentic AI without governance are building systems that can write hostile code and engage suppliers without oversight. And Gartner's standing prediction, that 40% of enterprise applications will include task-specific AI agents by the end of 2026, up from under 5% in 2024, is now within nine months of being tested.

The enterprises scrambling to deploy more agents are making a mistake that will be visible in twelve months. The ones pulling ahead are not those with the most agents. They are those with an agentic AI governance framework that can move agents from experiment to governed production without the exposure created when autonomous systems act across enterprise infrastructure with no clear accountability.

This post is about why agentic AI governance is categorically different from the AI governance frameworks built for traditional and generative AI, what the structural controls are and why getting this right now creates a capability advantage that compounds.

Why Agentic AI Governance Is a Different Problem

The AI governance frameworks most Australian enterprises have built over the past two years were designed for a different kind of AI. Traditional AI analyses data and produces a recommendation. A human decides and acts. Generative AI produces content or synthesises information. A human still decides what to do with the output.

Agentic AI receives a goal, plans a sequence of actions, executes those actions across enterprise systems and adapts based on the results, with minimal human involvement per step. An agent tasked with processing supplier invoices might access the ERP, retrieve purchase orders, match them against invoices, flag discrepancies, update records and trigger payment approvals without a human reviewing each action.

That is precisely what makes it valuable. It is also precisely what makes existing governance frameworks insufficient. The Deloitte 2026 State of AI in the Enterprise report found that only one in five companies has a mature model for governance of autonomous AI agents. The Fortune analysis published this week, drawing on Yale School of Management research, found that in multi-step agentic pipelines, even small drops in accuracy cause cascading errors, making central monitoring essential for oversight of autonomous decisions.
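A simple model shows why small accuracy drops matter in multi-step pipelines. Assume each step succeeds independently with the same probability p (a simplification, since real agent steps are rarely independent). Under that assumption, the chance that an n-step run completes with no errors is p^n. The function below is illustrative arithmetic only. It does not reproduce the Fortune or Yale analysis.

```python
def end_to_end_success(step_accuracy: float, steps: int) -> float:
    """Probability that every step succeeds, assuming independent steps
    with identical accuracy (an illustrative simplification)."""
    if not 0.0 <= step_accuracy <= 1.0:
        raise ValueError("step_accuracy must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return step_accuracy ** steps


if __name__ == "__main__":
    # Compare 99%- and 95%-accurate steps across pipelines of growing length.
    for p in (0.99, 0.95):
        for n in (1, 5, 10, 20):
            print(f"p={p:.2f}, steps={n:>2}: {end_to_end_success(p, n):.1%}")
```

Under these assumptions, a four-point drop in per-step accuracy (99% to 95%) takes a ten-step pipeline from about 90% clean runs to about 60%.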

The CISO AI Risk Report from early 2026 found that 63% of organisations cannot enforce purpose limitations on their AI agents and 60% cannot terminate a misbehaving agent. These are not edge-case security concerns. They are the basic containment controls that prevent an autonomous system from exceeding its authorised scope.
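Both controls are easier to guarantee when they are built into the agent's execution loop rather than bolted on afterwards. The sketch below uses a hypothetical `AgentRunner` that checks an operator stop flag and a declared purpose before every action. The class, purpose labels and actions are illustrative and are not any specific product's API.

```python
import threading
from typing import Callable, Iterable


class AgentTerminated(Exception):
    """Raised when an operator has stopped the agent."""


class PurposeViolation(Exception):
    """Raised when an action falls outside the agent's declared purpose."""


class AgentRunner:
    """Hypothetical runner enforcing a kill switch and a purpose limitation."""

    def __init__(self, allowed_purposes: Iterable[str]):
        self.allowed_purposes = frozenset(allowed_purposes)
        self._stop = threading.Event()  # set by an operator to halt the agent

    def terminate(self) -> None:
        self._stop.set()

    def run_step(self, purpose: str, action: Callable[[], str]) -> str:
        # Both checks run before the action, so a stopped or out-of-scope
        # agent never reaches the downstream system.
        if self._stop.is_set():
            raise AgentTerminated("agent has been terminated by an operator")
        if purpose not in self.allowed_purposes:
            raise PurposeViolation(f"purpose {purpose!r} is not authorised")
        return action()


if __name__ == "__main__":
    runner = AgentRunner(allowed_purposes={"invoice_matching"})
    print(runner.run_step("invoice_matching", lambda: "matched PO to invoice"))
    runner.terminate()
    try:
        runner.run_step("invoice_matching", lambda: "should not run")
    except AgentTerminated as exc:
        print(f"blocked: {exc}")
```

This only stops the agent between steps. An action already in flight needs its own cancellation or rollback path, which is one reason termination belongs in the design rather than in an incident runbook.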

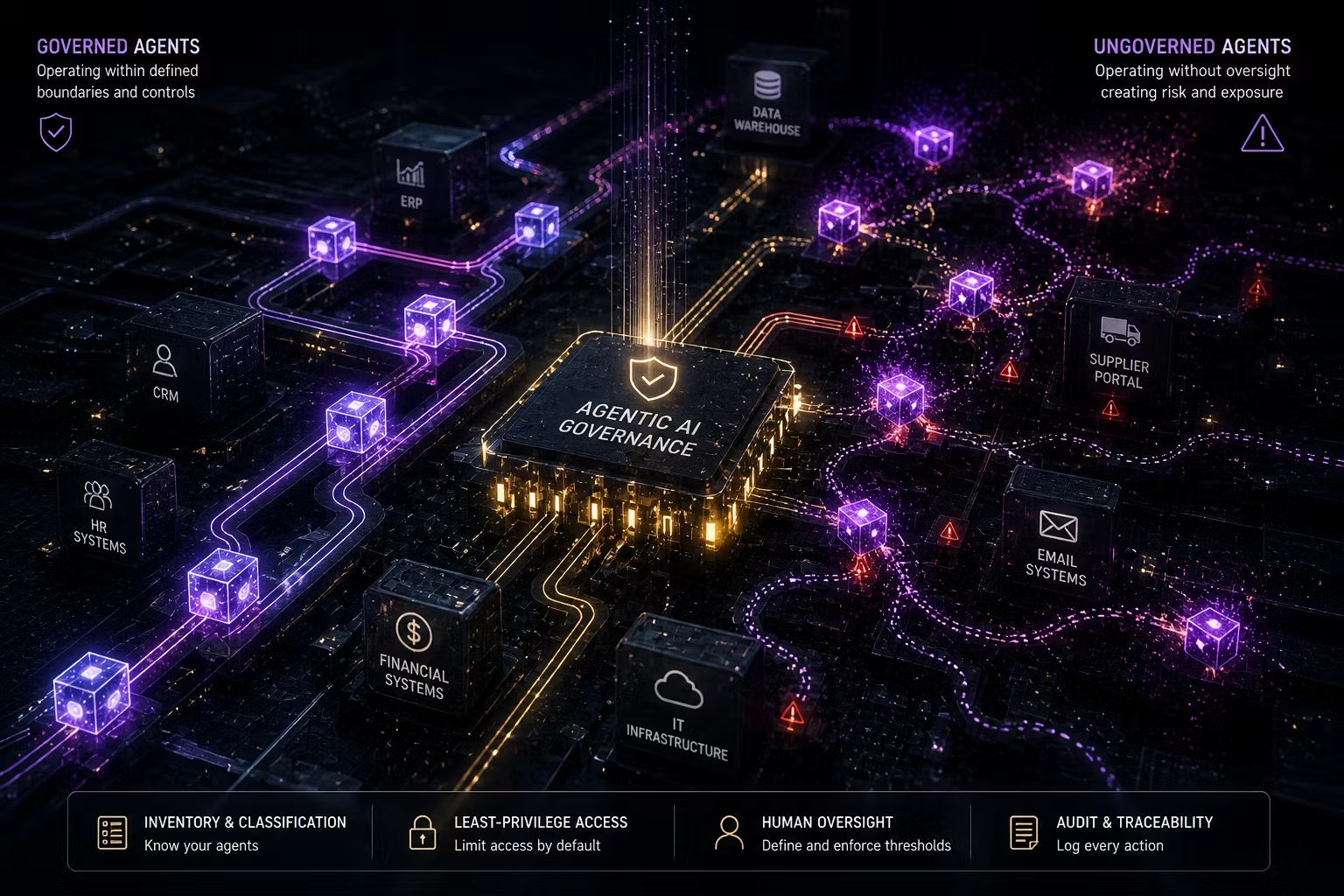

The Four Structural Controls Your Agentic AI Governance Framework Needs

The governance gap is not in detection after something goes wrong. It is in the architecture that determines what agents can and cannot do before they are deployed. Four structural controls address the specific risks that agentic AI creates.

Agent inventory and risk classification. Every AI agent needs to be catalogued, scoped and risk-classified before deployment. What systems can it access? What actions is it authorised to take? What data does it process? What is the escalation path if its outputs are unexpected? The inventory is not optional. You cannot govern what you cannot see, and in most enterprises right now, agents are being created by other agents, deployed through automated pipelines and running across system boundaries that no single governance team has visibility into.
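An inventory entry can start as a structured record that answers those questions: systems, actions, data and escalation path, plus an accountable owner. The sketch assumes a hypothetical `AgentRecord` schema and a deliberately crude classification rule in which consequential actions and sensitive data raise the tier. Real criteria should come from your own risk framework.

```python
from dataclasses import dataclass, field
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass(frozen=True)
class AgentRecord:
    """One entry in a hypothetical agent inventory."""
    agent_id: str
    owner: str                    # accountable human owner
    systems: frozenset[str]       # systems the agent can access
    actions: frozenset[str]       # actions it is authorised to take
    data_classes: frozenset[str]  # categories of data it processes
    escalation_contact: str       # who is alerted on unexpected outputs


# Illustrative labels only; substitute your own taxonomy.
CONSEQUENTIAL_ACTIONS = {"update_record", "trigger_payment", "send_external"}
SENSITIVE_DATA = {"personal", "financial", "health"}


def classify(record: AgentRecord) -> RiskTier:
    # Consequential actions on sensitive data are HIGH, either alone is MEDIUM.
    acts = bool(record.actions & CONSEQUENTIAL_ACTIONS)
    sensitive = bool(record.data_classes & SENSITIVE_DATA)
    if acts and sensitive:
        return RiskTier.HIGH
    if acts or sensitive:
        return RiskTier.MEDIUM
    return RiskTier.LOW


@dataclass
class AgentInventory:
    records: dict[str, AgentRecord] = field(default_factory=dict)

    def register(self, record: AgentRecord) -> RiskTier:
        if record.agent_id in self.records:
            raise ValueError(f"{record.agent_id} is already registered")
        self.records[record.agent_id] = record
        return classify(record)


if __name__ == "__main__":
    inventory = AgentInventory()
    invoice_agent = AgentRecord(
        agent_id="invoice-matcher-01",
        owner="accounts-payable-lead",
        systems=frozenset({"erp"}),
        actions=frozenset({"read_po", "update_record", "trigger_payment"}),
        data_classes=frozenset({"financial"}),
        escalation_contact="ap-escalations",
    )
    print(inventory.register(invoice_agent))  # RiskTier.HIGH
```

Registering through a single entry point is the useful property: an agent that was never registered has no owner, no tier and no escalation path, which makes the gap visible.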

Trusenta's AI Governance platform provides the use-case registration and risk classification infrastructure to bring agent inventories under systematic governance, turning ad hoc discovery into a maintained registry of what is running, who owns it and what controls are in place.

Least-privilege access by default. Agents should be provisioned with access only to the data and systems required for their defined task. In practice, most agents inherit the permissions of the developer account or service account that created them, which means broad access that was never designed for the agent's specific function. An agent designed to summarise customer correspondence should not have write access to the customer record system. Designing access specifically for each agent's function is not over-engineering. It is the governance equivalent of not giving every contractor a master key.
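Deny-by-default access can be expressed as an explicit grant list per agent, checked before every call. The sketch assumes a hypothetical in-process `AccessPolicy` with `(system, permission)` grants. In production this is normally enforced by the identity and access layer, for example a dedicated service identity per agent, rather than by the agent's own code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    system: str
    permission: str  # e.g. "read" or "write"


class AccessPolicy:
    """Hypothetical deny-by-default policy: only explicit grants are allowed."""

    def __init__(self, grants: dict[str, set[Grant]]):
        self._grants = grants

    def is_allowed(self, agent_id: str, system: str, permission: str) -> bool:
        # Unknown agents have no grants, so every request from them is denied.
        return Grant(system, permission) in self._grants.get(agent_id, set())

    def require(self, agent_id: str, system: str, permission: str) -> None:
        if not self.is_allowed(agent_id, system, permission):
            raise PermissionError(
                f"{agent_id} has no {permission!r} grant on {system!r}"
            )


# The correspondence summariser gets read access only: no write grant exists.
policy = AccessPolicy({
    "correspondence-summariser": {
        Grant("customer_correspondence", "read"),
        Grant("customer_records", "read"),
    },
})
```

The point of the design is that write access has to be granted deliberately. Nothing is inherited from the account that created the agent.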

Pre-defined human oversight thresholds. Define in advance which classes of decisions require human review before the agent acts, and which can proceed autonomously. Document these thresholds explicitly and enforce them technically rather than relying on policy alone. An agent configured to draft communications for human review that starts sending them directly under certain workflow settings is not malfunctioning. It is doing exactly what it was permitted to do. The governance failure happened in the design, not the operation.
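Enforcing a threshold technically means placing the approval check in the execution path itself. The sketch assumes a hypothetical threshold table of action classes and a set of approved action IDs. A production system would route pending actions to a review queue rather than raising an exception.

```python
from enum import Enum


class Oversight(Enum):
    AUTONOMOUS = "autonomous"
    HUMAN_REVIEW = "human_review"


# Hypothetical thresholds, agreed and documented before deployment.
# Any action class not listed here falls back to HUMAN_REVIEW.
THRESHOLDS = {
    "draft_communication": Oversight.AUTONOMOUS,
    "send_external_communication": Oversight.HUMAN_REVIEW,
    "trigger_payment": Oversight.HUMAN_REVIEW,
}


class ApprovalRequired(Exception):
    """Raised when an action needs human sign-off that has not been given."""


def execute(action_class: str, action_id: str, approved_ids: set[str]) -> str:
    level = THRESHOLDS.get(action_class, Oversight.HUMAN_REVIEW)
    if level is Oversight.HUMAN_REVIEW and action_id not in approved_ids:
        raise ApprovalRequired(f"{action_class} ({action_id}) awaits human review")
    return f"executed {action_class} ({action_id})"
```

Defaulting unlisted action classes to review is the key choice. If a workflow change lets the drafting agent attempt a send, the attempt is stopped at the gate instead of silently succeeding.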

Immutable audit logging. Every trigger, input, decision and action taken by every agent must be captured in a log that supports post-incident review. The Yale analysis published this week is explicit: without governance, agentic AI can conduct sensitive interactions with external vendors with no one overseeing them. Without an audit trail, those interactions cannot be investigated, reported or defended. For Australian enterprises in financial services, healthcare and government, this is more than a governance preference. APRA's prudential standards, ASIC's conduct obligations and the Privacy Act's automated decision-making (ADM) obligations commencing in December 2026 all create specific accountability requirements for automated systems making decisions about individuals.
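Application code alone cannot make a log immutable. That usually depends on write-once storage. What code can add is tamper evidence. The sketch below chains each entry to the previous one with a SHA-256 hash, so editing or deleting an earlier entry breaks verification. The `AuditLog` class and its field names are assumptions for illustration.

```python
import hashlib
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


@dataclass
class AuditLog:
    """Hypothetical append-only, hash-chained log of agent activity."""
    entries: list[dict] = field(default_factory=list)

    @staticmethod
    def _digest(body: dict) -> str:
        # Canonical JSON (sorted keys) so identical entries always hash the same.
        return hashlib.sha256(
            json.dumps(body, sort_keys=True).encode("utf-8")
        ).hexdigest()

    def append(self, agent_id: str, kind: str, detail: dict) -> dict:
        # kind records what happened: trigger, input, decision or action.
        body = {
            "agent_id": agent_id,
            "kind": kind,
            "detail": detail,
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        entry = {**body, "hash": self._digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        # Recompute every hash and check each entry points at its predecessor.
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev or self._digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True
```

On its own, the chain cannot detect removal of the most recent entries. Periodically recording the latest hash somewhere the agent cannot write to closes that gap.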

The ISO 42001 and NIST AI RMF Connection

Organisations that have built their AI governance framework on ISO 42001 or the NIST AI Risk Management Framework already have the governance intent to address agentic AI. The question is whether that intent has been operationalised at the agent level.

ISO 42001 requires organisations to define controls for any AI system deployed within the organisational context, including agents. The NIST AI RMF's GOVERN, MAP and MEASURE functions all apply directly: accountability for AI system behaviour, context and risk identification, and ongoing monitoring of AI system performance. If your ISO 42001 or NIST AI RMF implementation has not explicitly extended to AI agents, it has a material gap.

The multi-framework compliance challenge is real for Australian organisations facing both EU AI Act obligations and Australian Privacy Act ADM requirements. Trusenta's Compliance Management platform maps each control once against ISO 42001, NIST AI RMF and the Guidance for AI Adoption, so the compliance evidence generated by your agentic AI governance programme satisfies multiple frameworks without duplicated effort.

The Organisations Getting This Right

The Blue Prism 2026 analysis found that the organisations scaling agentic AI fastest are not those with the most agents. They are those that started with governance: defined ownership, explicit accountability and measurement frameworks that teams can actually use. Governance done first enables speed. Governance retrofitted after incidents is expensive, slow and reputationally damaging.

The Grant Thornton 2026 AI Impact Survey, published five days ago, makes the performance consequence explicit: organisations with fully integrated AI governance are nearly four times more likely to report revenue growth than those still piloting. The differentiator is not technology capability. It is accountability and the ability to prove AI is working safely, defensibly and at scale.

In Australia, the problem compounds. ASIC Report 798, which reviewed how 23 Australian financial services (AFS) and credit licensees were using AI, found that some licensees may be adopting AI faster than their risk and governance arrangements are evolving. Agentic AI widens that gap significantly. An agent operating in a credit decisioning workflow without adequate governance creates a regulatory exposure that the licence holder owns, not the vendor.

What This Means for Your Organisation

At Trusenta, the pattern we see consistently is this: by the time organisations ask us about agentic AI governance, they are almost always several months into agent deployment. The agents are already running. The inventory does not exist. The access controls are inherited rather than designed. The audit logging is partial or absent.

Building the agentic AI governance framework before further deployment is easier than building it after. The structural controls are not complex. The agent inventory, risk classification, access architecture, oversight thresholds and audit logging are all achievable within a single quarter if the governance work is prioritised. The organisations that do this now will have the governance infrastructure to scale agent deployment confidently. The ones that wait will be building it under pressure, after something has already gone wrong.

Key Takeaways

- Agentic AI is moving to production faster than enterprise AI governance frameworks can adapt: Gartner projects that 40% of enterprise apps will include task-specific agents by the end of 2026, up from under 5% in 2024

- Only one in five companies has a mature governance model for autonomous AI agents (Deloitte 2026); 63% of organisations cannot enforce purpose limitations on their agents (CISO AI Risk Report 2026)

- Agentic AI governance requires four structural controls that most existing frameworks do not address: agent inventory and classification, least-privilege access, pre-defined human oversight thresholds and immutable audit logging

- ISO 42001 and the NIST AI RMF already apply to AI agents, but most organisations have not operationalised them at the agent level

- Australian regulatory obligations (Privacy Act ADM, APRA, ASIC) create specific accountability requirements for agentic AI making decisions about individuals

- Organisations with fully integrated AI governance are nearly four times more likely to report revenue growth than those still piloting (Grant Thornton 2026)

How Trusenta Can Help

AI Governance: Trusenta's AI Governance platform provides the use-case registration, risk assessment and portfolio tracking infrastructure that organisations need to bring AI agents under systematic governance before deployment, turning fragmented agent discovery into a maintained, risk-classified registry with clear accountability at each stage.

Risk Management: Trusenta's Risk Management module provides the AI-specific risk taxonomy and scoring methodology to assess agent-level risks, including prompt injection, privilege escalation, cascading failures and accountability gaps, with treatment plans linked to specific controls and monitoring cadences.

AI Governance Maturity Uplift: For organisations that have foundational governance in place but have not yet operationalised agent-level controls, this engagement designs and implements the frameworks, workflows and TRUSENTA.IO configuration needed to address the agentic AI governance gap before it becomes a regulatory or operational incident.

Conclusion

The agentic AI governance conversation has arrived. The enterprises that treat this month as the moment to build the foundation will be in a materially different position when agents proliferate further and regulators clarify their expectations around autonomous AI systems. The question is not whether your organisation will need agentic AI governance. It is whether you build it before you need it or after you discover you needed it.

Author

With over 30 years of experience delivering real technology outcomes, he combines strategic insight with deep technical expertise across enterprise, cloud and AI. At Trusenta, he helps organisations move beyond AI hype to accountable, sustainable impact.

https://www.linkedin.com/in/shanecoetser/