AI Governance

AGI Is a Marketing Tool. Karen Hao Just Proved It. Here Is What That Means for Your Business.

Investigative journalist Karen Hao spent years interviewing over 300 people, including 90 current and former OpenAI insiders, to document how AI companies redefine AGI for every audience that needs to be mobilised. Her conclusion: you cannot trust the producers of AI to govern themselves. Your organisation must build that governance as a core capability of its own.

April 1, 2026

7 min read

On 26 March 2026, investigative journalist Karen Hao appeared on The Diary Of A CEO and said something every executive in Australia should take seriously. Over seven years and more than 300 interviews, including 90 with current and former OpenAI employees and executives, she documented how the AI industry systematically manipulates public understanding of its technology. The definition of AGI, she argues, is not a scientific concept. It is a political tool, redefined for every audience that needs to be mobilised: cancer cure for Congress, digital assistant for consumers, $100 billion revenue engine for Microsoft. There is no coherent vision. There are only narratives calibrated for whoever controls the next resource that the industry needs.

Her conclusion is not that AI is inherently bad. It is that the governance of AI cannot be left to the companies building it, and cannot wait for governments to catch up. The accountability has to come from somewhere else. That somewhere is your organisation.

This is the first in a four-part series examining what Karen Hao got right and what it means for Australian organisations that take AI governance seriously: not as a compliance exercise or a policy document, but as an innate organisational capability, built from the inside, owned permanently and continuously improved.

The AGI Definition Problem Is a Governance Problem

Hao's most striking finding in the episode is that the term artificial general intelligence has never had a stable definition. The field of artificial intelligence itself was named in 1956, at a time when its founders could not agree on what intelligence meant. That ambiguity was never resolved. It was inherited by the industry and, in Hao's telling, weaponised.

When Hao asked OpenAI what AGI means, she found four different definitions: a system that will cure cancer and solve climate change when addressing government; the most powerful digital assistant imaginable when speaking to consumers; a system generating $100 billion of revenue in the investment documents with Microsoft; and highly autonomous systems that outperform humans in most economically valuable work on the company's own website. These are not variations on a single idea. They are four different things, chosen based on what each audience needs to believe.

The practical consequence for enterprises is significant. If the companies building the AI your organisation uses cannot define what their technology is supposed to achieve, they cannot be held to a coherent account of whether it is delivering. The accountability vacuum that Hao documents at the industry level begins with the definitional vacuum at the conceptual level.

For Australian organisations deploying AI, the mirror question is: do you know what your AI systems are supposed to do, well enough to measure whether they are doing it and to be accountable when they do not? If the producers of AI cannot answer this for themselves, your organisation needs to answer it for every system it deploys.
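One way to make that question concrete is to record, for each deployed system, what it is meant to do, how that is measured and who answers for it. The Python sketch below is illustrative only: the `AISystemRecord` class, its field names and the example values are assumptions made for this article, not a prescribed register format.

```python
from dataclasses import dataclass

# Hypothetical fields a register entry would need before the system
# can be held to account. These names are assumptions for illustration.
REQUIRED_FIELDS = ("intended_purpose", "success_measure", "accountable_owner")


@dataclass
class AISystemRecord:
    """One entry in an illustrative AI use-case register."""

    name: str
    intended_purpose: str = ""   # what the system is supposed to do
    success_measure: str = ""    # how you check that it is doing it
    accountable_owner: str = ""  # who answers when it does not

    def missing_fields(self) -> list[str]:
        """Return the required fields that are still blank."""
        return [f for f in REQUIRED_FIELDS if not getattr(self, f).strip()]

    def is_accountable(self) -> bool:
        """True only when purpose, measure and owner are all recorded."""
        return not self.missing_fields()


# Example entry with made-up values: purpose is stated, nothing else is.
triage_bot = AISystemRecord(
    name="customer-email-triage",
    intended_purpose="Route inbound customer emails to the right team",
)
print(triage_bot.missing_fields())
```

An entry that cannot be completed is itself a finding: it marks a system the organisation is running without being able to say whether it is working.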

The Imperial Agenda and What It Means for Enterprise Risk

Hao's framework for understanding AI companies is empire. Not as a metaphor of convenience but as a structural analysis. Historic empires extracted land, labour and resources from people who did not consent and did not benefit. AI companies, she argues, operate on the same model: extracting data from creators and individuals without payment or consent, exploiting hundreds of thousands of low-wage workers in Kenya, Venezuela and elsewhere to annotate the data that makes the models function, monopolising AI research by employing or funding most of the scientists who would otherwise independently evaluate the technology, and using the narrative of a rival empire, historically China, to justify unchecked expansion before regulation can catch up.

For enterprise governance, the imperial agenda creates three specific risks that most Australian organisations are not yet managing.

The first is data provenance. The training data underlying the AI tools your organisation uses was, in many cases, extracted from creators and individuals without their knowledge or consent. The copyright exposure from this extraction is real and is the subject of active litigation globally. Understanding what training data underpins your vendor's models is a vendor governance question that belongs in your AI risk register.

The second is vendor accountability. Hao's reporting makes clear that AI companies are legally required to maximise profit and are structurally designed to prioritise that over public interest. When you deploy an AI tool, you are deploying a system built by an organisation whose incentive structure is not aligned with your customers' interests or your regulatory obligations. The accountability for outcomes stays with you, not with the vendor.

The third is the monopolisation of expertise. AI companies employ or fund most of the researchers who would otherwise provide independent assessment of AI capabilities and risks. The information available to enterprise decision-makers about the actual capabilities and failure modes of AI systems is filtered through a corporate lens. Your organisation's AI governance framework needs to account for the fact that vendor claims about AI capability and safety are not independent assessments.

Why You Cannot Wait for Government to Fix This

Hao is clear in the episode that she does not believe government regulation alone will resolve the accountability deficit she documents. Governments are operating in information environments partly controlled by the companies they would regulate. Regulatory frameworks are slow. The AI industry is fast. And the political will to impose genuine accountability on an industry generating so much economic growth is, in most jurisdictions, limited.

Australia's situation illustrates this precisely. The December 2025 National AI Plan confirmed a standards-led approach: existing laws, voluntary guidance and a new AI Safety Institute rather than a standalone AI Act. The Privacy Act automated decision-making obligations arriving in December 2026 establish a floor. The Guidance for AI Adoption sets out reasonable practices. None of this tells your organisation what to do about the specific AI systems you are deploying, in your specific operating context, against your specific regulatory obligations. That determination is yours to make.

Hao calls for bottom-up governance: pressure from consumers, enterprises, civil society and professional communities forcing AI companies to meet higher standards. In the enterprise context, bottom-up governance means your organisation choosing to govern AI systematically, as an internal capability, rather than waiting for an external mandate to specify exactly what to do. It means treating AI governance as a core organisational value, as foundational to how you operate as financial governance or occupational health and safety.

The Five Questions Your Board Should Be Able to Answer

If Hao is right that AI companies cannot be trusted to define, let alone govern, their own technology, the question for your organisation is whether your internal governance structures are more coherent than the industry ones she is critiquing. Five questions make that concrete.

Can you name the executive accountable for AI risk in your organisation, along with the scope of their authority and when they last reported to the board?

For every AI system currently influencing decisions about your customers or employees, can you state what it is supposed to do and how you measure whether it is doing it?

Have you assessed the data provenance of your AI vendors, including whether the training data underlying their models creates copyright or privacy exposure for your organisation?

Do your AI vendor contracts establish accountability for governance outcomes, or does all risk default to you?

Are your deployed AI systems monitored on a schedule that matches how fast they can create problems, rather than how convenient governance meetings are?

If the honest answer to two or more of these is no, the accountability gap Hao describes at the industry level exists inside your organisation's AI programme as well.
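The two-or-more threshold above can be expressed as a simple self-assessment. This is a minimal Python sketch: the question labels and function names are hypothetical, and treating an unanswered question as a "no" is an assumption of the sketch rather than something the five questions specify.

```python
# Hypothetical short labels for the five board questions above.
BOARD_QUESTIONS = (
    "named_accountable_executive",
    "defined_purpose_and_measure",
    "vendor_data_provenance_assessed",
    "vendor_contract_accountability",
    "monitoring_matches_risk_speed",
)

GAP_THRESHOLD = 2  # "two or more" honest "no" answers, as stated in the text


def count_no_answers(answers: dict[str, bool]) -> int:
    """Count questions answered "no".

    Assumption: a question with no recorded answer is counted as "no".
    """
    return sum(1 for q in BOARD_QUESTIONS if not answers.get(q, False))


def has_accountability_gap(answers: dict[str, bool]) -> bool:
    """Apply the two-or-more rule to a set of yes/no answers."""
    return count_no_answers(answers) >= GAP_THRESHOLD


# Example with made-up answers: two "no" responses reach the threshold.
example = {q: True for q in BOARD_QUESTIONS}
example["vendor_data_provenance_assessed"] = False
example["vendor_contract_accountability"] = False
print(has_accountability_gap(example))
```

The value of the exercise lies in the honesty of the answers rather than the arithmetic; the code only makes the threshold explicit.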

How Trusenta Can Help

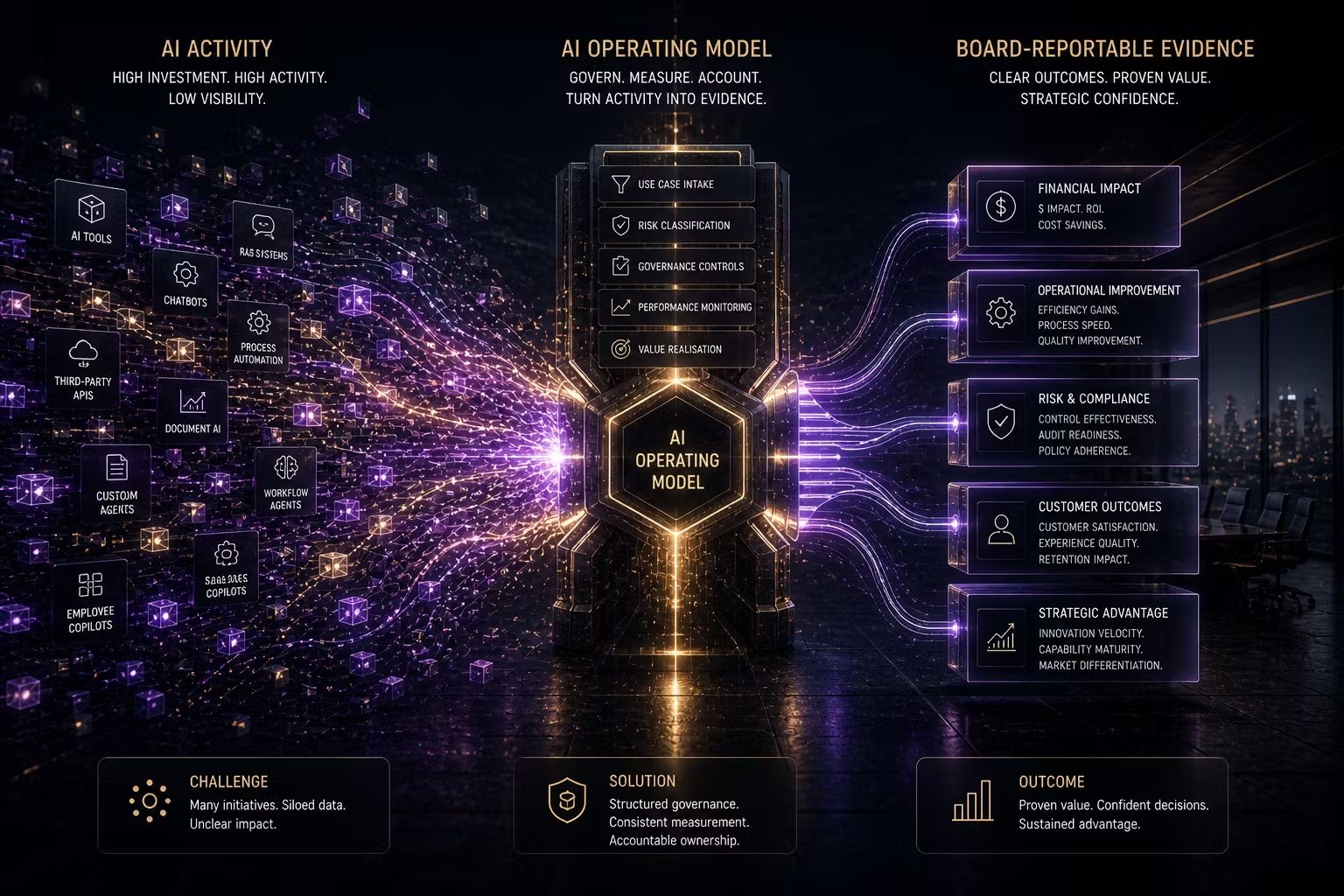

AI Governance: Trusenta's AI Governance platform provides the use-case register, risk assessment workflows and accountability tracking infrastructure that organisations need to build genuine internal governance capability. The accountability that Hao says is absent at the industry level can be built deliberately at the organisational level, with the right infrastructure and processes in place.

Fractional AI Officer: For organisations that need a senior AI leader to own the governance agenda without full-time headcount, Trusenta's Fractional AI Officer service embeds accountable executive leadership directly into the business. The governance capability starts with someone who owns it.

AI Governance Services: Trusenta's AI Governance engagements establish the intake processes, risk classification frameworks and accountability structures that turn governance from aspiration into operational practice.

Conclusion

Karen Hao spent seven years and more than 300 interviews documenting why AI companies cannot be trusted to govern themselves and why governments are struggling to keep pace. Her argument is not that AI should not exist. It is that the current governance model, where accountability is deferred to either the producers or the regulators, is inadequate. The organisations that hear this as a call to build internal governance capability rather than as a reason to wait for external direction will be in a fundamentally different position in 18 months. AI governance is not something that happens to your organisation. It is something your organisation builds, owns and continuously improves. The capacity to do that exists now. The question is whether your organisation will use it.

Author

Mark brings a rare blend of C-suite leadership and hands-on consulting experience to Trusenta. As a former SVP of Services, SVP of Business Operations, Managing Director and CIO, he brings a breadth of experience to his specialty: guiding organisations through AI strategy, governance and adoption, and bridging ambition with practical execution. His focus is on helping clients embed AI responsibly, at scale and in service of real business outcomes.

https://www.linkedin.com/in/consult-mmiller/