AI Governance

The EU AI Act's August Deadline Is Closer Than You Think. Here Is What It Actually Requires.

On 2 August 2026, the EU AI Act begins enforcing its most consequential set of requirements: the obligations governing high-risk AI systems. If your organisation uses AI to make or influence decisions about employees, customers or credit applicants, and those decisions touch anyone in the EU, the compliance window is shorter than most teams realise.

May 1, 2026

8 min read

On 2 August 2026, the EU AI Act begins enforcing its most consequential set of requirements. Not the prohibitions, which came into force in February 2025, and not the general-purpose AI model obligations, which landed in August 2025. What kicks in on 2 August is the full compliance framework for high-risk AI systems: the tier of AI that most enterprises are actively deploying, and the tier that carries the most demanding obligations.

If your organisation uses AI to evaluate employee performance, assess customer creditworthiness, screen job candidates or make decisions that significantly affect individuals, you are almost certainly in scope. And the obligation applies regardless of where your organisation is headquartered. EU users mean EU obligations.

Most compliance teams know this deadline exists. Fewer are genuinely ready for what it requires.

What the EU AI Act Actually Is

The EU AI Act, formally Regulation (EU) 2024/1689, is the world's first comprehensive legal framework for artificial intelligence. It entered into force in August 2024 and takes a risk-based approach: the higher the potential harm, the more stringent the obligations.

The Act classifies AI systems into four tiers. Unacceptable risk systems are banned outright: real-time biometric surveillance in public spaces, social scoring by governments, and AI manipulating behaviour through subliminal techniques. These prohibitions have been in force since February 2025. Minimal and limited risk systems face lighter or no mandatory requirements. High-risk systems, defined in Annex III of the Act, sit in the middle: permitted, but subject to a substantial compliance framework before they can be deployed to EU users.

The August 2026 deadline is specifically about Annex III. Everything in the highest-stakes tier of permitted AI becomes enforceable on that date.

Which AI Systems Qualify as High-Risk

Annex III lists the categories of AI systems the Act treats as high-risk. Understanding whether your AI deployments fall within scope is the starting point for any compliance programme.

The categories include AI systems used in biometrics and biometric categorisation; critical infrastructure management; education and vocational training (determining access, grading, assessing exam integrity); employment and worker management, including recruitment, CV screening, performance evaluation and task allocation; access to essential private services such as credit scoring and creditworthiness assessment; law enforcement; migration and border control; and the administration of justice.

In practice, the most common enterprise exposure falls in three areas. The first is HR and people management AI, which covers any system influencing hiring decisions, performance ratings, promotion or termination. The second is financial services AI, which covers creditworthiness assessment, credit scoring and insurance risk evaluation. The third is customer-facing AI that influences access to services, pricing tiers or product eligibility based on automated assessment of individual characteristics.

A common misconception is that the high-risk classification only applies to systems your organisation built. It does not. If your organisation deploys a third-party AI tool that falls within an Annex III category, you are a deployer under the Act and subject to deployer obligations. The compliance responsibility does not transfer to the vendor by default. In fact, one of the most important governance steps is reviewing your AI vendor contracts to confirm that accountability is clearly allocated, because the Act does not assume it is.
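As a rough illustration of that first mapping step, an AI inventory can be screened against the Annex III areas in a few lines of Python. This is a minimal sketch, not a legal test: the `AISystem` fields, the use-case labels and the `classify` helper are all hypothetical names invented for this example, the lookup table covers only a handful of the areas named above, and the provider/deployer split is deliberately simplified (built in-house means provider, third-party tool means deployer).

```python
from dataclasses import dataclass

# Illustrative subset of the Annex III areas named in this article.
# The keys are hypothetical internal inventory labels, not terms from the Act.
ANNEX_III_AREAS = {
    "recruitment": "employment and worker management",
    "performance_evaluation": "employment and worker management",
    "credit_scoring": "access to essential private services",
    "biometric_categorisation": "biometrics",
}


@dataclass
class AISystem:
    name: str
    use_case: str         # hypothetical internal label for what the system does
    built_in_house: bool  # False means a third-party tool
    touches_eu: bool      # used in the EU, or its outputs affect EU residents


def classify(system):
    """Return (annex_iii_area, role) if this sketch flags the system, else None."""
    area = ANNEX_III_AREAS.get(system.use_case)
    if area is None or not system.touches_eu:
        return None  # not flagged by this sketch; not proof of being out of scope
    role = "provider" if system.built_in_house else "deployer"
    return area, role


inventory = [
    AISystem("cv-screener", "recruitment", built_in_house=False, touches_eu=True),
    AISystem("faq-chatbot", "customer_faq", built_in_house=True, touches_eu=True),
]
for system in inventory:
    print(system.name, classify(system))
```

A third-party CV screener used on EU candidates comes back flagged with the deployer role, which is the case the misconception above tends to miss. Anything the sketch flags still needs a proper legal classification.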

What Compliance Actually Requires

The obligations for providers and deployers of high-risk AI systems are substantive. This is not a transparency requirement or a disclosure exercise. It is a systematic compliance framework requiring documented governance, ongoing monitoring and in some cases third-party assessment.

For providers, the principal obligations include implementing and documenting a risk management system that runs continuously throughout the AI system's lifecycle, not just at the point of deployment. Providers must maintain detailed technical documentation covering system design, training data, testing methodology and performance metrics. They must establish a quality management system. They must ensure the high-risk AI system undergoes a conformity assessment, either self-assessment or third-party depending on the category. They must register the system in the EU database before placing it on the market. And they must affix CE marking, the same mechanism used for physical products under EU product safety law.

For deployers, the obligations are somewhat lighter but still demanding. Deployers must implement appropriate technical and organisational measures to ensure they use high-risk AI systems in accordance with the provider's instructions and monitor their operation continuously. Certain deployers, specifically public bodies and private entities providing public services, plus deployers of AI systems evaluating creditworthiness or assessing insurance risk, must also conduct a Fundamental Rights Impact Assessment before deploying the system. This is a structured assessment of the system's potential impact on the rights of individuals it affects, comparable in intent to a data protection impact assessment under GDPR.
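The trigger for a Fundamental Rights Impact Assessment, as described above, can be written down as a simple rule. The sketch below assumes the system has already been classified as high-risk; the function name, parameters and use-case labels are hypothetical and exist only for illustration.

```python
# Hypothetical labels for the two use cases that trigger the assessment
# for any deployer, per the description above.
FRIA_USE_CASES = {"creditworthiness", "insurance_risk"}


def fria_required(is_public_body, provides_public_services, use_case):
    """True if a deployer of a high-risk system should plan for an FRIA."""
    return (
        is_public_body
        or provides_public_services
        or use_case in FRIA_USE_CASES
    )


# A private lender deploying a credit model is caught by the use case alone.
print(fria_required(False, False, "creditworthiness"))  # True
# A private employer deploying a recruitment tool is not caught by this rule.
print(fria_required(False, False, "recruitment"))       # False
```

A `False` result only means the impact assessment is not triggered by this rule. The other deployer obligations still apply.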

Human oversight is a specific requirement, not a general principle. High-risk AI systems must be designed to enable effective human oversight, including the ability to halt the system or override its outputs when necessary. This is not satisfied by having a human somewhere in the process. The oversight mechanism must be meaningful and documented.

The Penalties, Accurately Stated

The EU AI Act's penalty regime is significant, and it is worth being precise about which penalties apply to which violations.

For the prohibited practices in Article 5, such as real-time biometric surveillance or social scoring, fines reach up to €35 million or 7% of global annual turnover, whichever is higher. These are the headline numbers that most coverage leads with.

For violations of the high-risk AI system obligations, the ceiling is up to €15 million or 3% of global annual turnover. That is still higher than most GDPR enforcement actions in practice, and regulators have been explicit that enforcement will not begin softly.

For supplying incorrect, incomplete or misleading information to authorities, the ceiling is €7.5 million or 1% of global annual turnover.
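To see how the three ceilings scale with company size, the arithmetic can be sketched as below. The tier labels and the `max_fine_eur` helper are hypothetical names for this example. The "whichever is higher" rule is stated above only for the top tier; the sketch assumes the same rule applies to the other two tiers.

```python
# tier label (hypothetical): (fixed ceiling in EUR, percent of global annual turnover)
PENALTY_TIERS = {
    "prohibited_practice": (35_000_000, 7),
    "high_risk_obligation": (15_000_000, 3),
    "misleading_information": (7_500_000, 1),
}


def max_fine_eur(tier, global_annual_turnover_eur):
    """Upper bound of the fine: the higher of the fixed and turnover-based ceilings."""
    fixed_ceiling, percent = PENALTY_TIERS[tier]
    turnover_ceiling = global_annual_turnover_eur * percent // 100
    return max(fixed_ceiling, turnover_ceiling)


# EUR 2 billion turnover: 3% is EUR 60 million, which exceeds the fixed ceiling.
print(max_fine_eur("high_risk_obligation", 2_000_000_000))  # 60000000
# EUR 100 million turnover: 3% is EUR 3 million, so the fixed ceiling applies.
print(max_fine_eur("high_risk_obligation", 100_000_000))    # 15000000
```

These are ceilings, not predictions. The actual amount in any case is for the regulator to decide.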

One clarification worth noting: the penalty regime for general-purpose AI model providers has been delayed until August 2026, aligning with the high-risk enforcement powers. But the penalty regime for the prohibited practices and most other obligations has been in effect since August 2025. This is not a future risk. The enforcement infrastructure, national competent authorities in every EU member state and the EU AI Office, is already operational.

The Extraterritorial Reach

The EU AI Act mirrors the GDPR in its territorial scope. Any organisation, regardless of where it is incorporated or headquartered, must comply if its AI systems are used within the EU or produce outputs that affect EU residents.

An Australian financial institution using AI for loan approvals that serves European customers is within scope. An Australian HR technology company whose AI recruitment tool is used by EU-based employers is a provider within scope. An Australian professional services firm using a third-party AI tool to evaluate the performance of EU-based staff is a deployer within scope.

The extraterritorial reach is not theoretical. Holland & Knight's April 2026 analysis specifically flags that US and non-EU companies face this compliance window, and the same logic applies to Australian organisations. The relevant test is not where you are located. It is whether your AI systems touch EU users or EU residents.

The Digital Omnibus Caveat

In November 2025, the European Commission proposed a Digital Omnibus package that, if enacted, would potentially extend the August 2026 deadline for Annex III high-risk systems. The proposal would link the application of high-risk rules to the availability of harmonised technical standards, with a long-stop date of December 2027 for some categories.

As of May 2026, the Digital Omnibus has not been enacted. It is under negotiation in the European Parliament and Council. The August 2026 deadline remains the legally binding obligation under existing law.

Legal advisers, including Holland & Knight and DLA Piper, have been explicit on this point: organisations should not plan around the Omnibus extension. If the extension passes, compliance work done in the interim will not be wasted. If it does not pass, organisations that relied on the extension will be non-compliant from 2 August. The appropriate posture is to plan for August 2026 while monitoring legislative progress.

Where Most Organisations Stand Right Now

The honest picture is that most organisations are behind. A ComplianceHub analysis published in April 2026, with 96 days to the deadline, found that the majority of enterprises have not completed the Annex III audit, conformity assessment or technical documentation required before the deadline.

The work required is not trivial. A Fundamental Rights Impact Assessment for a credit scoring system takes weeks to design and complete properly. Technical documentation for a recruitment AI system requires coordinating with the vendor, understanding model architecture and documenting training data governance. Quality management systems require process design and evidence of operation, not just policy documents. And the EU database registration requires completing the conformity assessment first.

Three months is enough time to start. It is not enough time to do everything from a standing start. The organisations that will be in a defensible position on 2 August are those that have already mapped their AI systems against the Annex III categories and begun the governance work, rather than those planning to begin it in July.
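The size of the remaining window is easy to check with a short date calculation. The sketch below uses only Python's standard library; the `days_remaining` helper is a name chosen for this example.

```python
from datetime import date, timedelta

DEADLINE = date(2026, 8, 2)  # application date for the Annex III obligations


def days_remaining(today):
    """Calendar days from `today` to the 2 August 2026 deadline."""
    return (DEADLINE - today).days


# From this article's publication date, 1 May 2026:
print(days_remaining(date(2026, 5, 1)))  # 93

# The "96 days to the deadline" figure corresponds to this calendar date:
print(DEADLINE - timedelta(days=96))     # 2026-04-28
```

Ninety-three calendar days is roughly 13 weeks. That is a tight window when a single impact assessment can take weeks on its own.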

What This Means for Australian Organisations

At Trusenta, the pattern is familiar. Regulatory deadlines that feel distant in January become urgent in May and critical in July. The EU AI Act August deadline is following that trajectory, and the consequences of non-compliance are not abstract: regulatory fines, mandatory suspension of high-risk AI systems in EU markets, civil liability from affected individuals and reputational damage in the markets where trust in AI governance is increasingly a commercial differentiator.

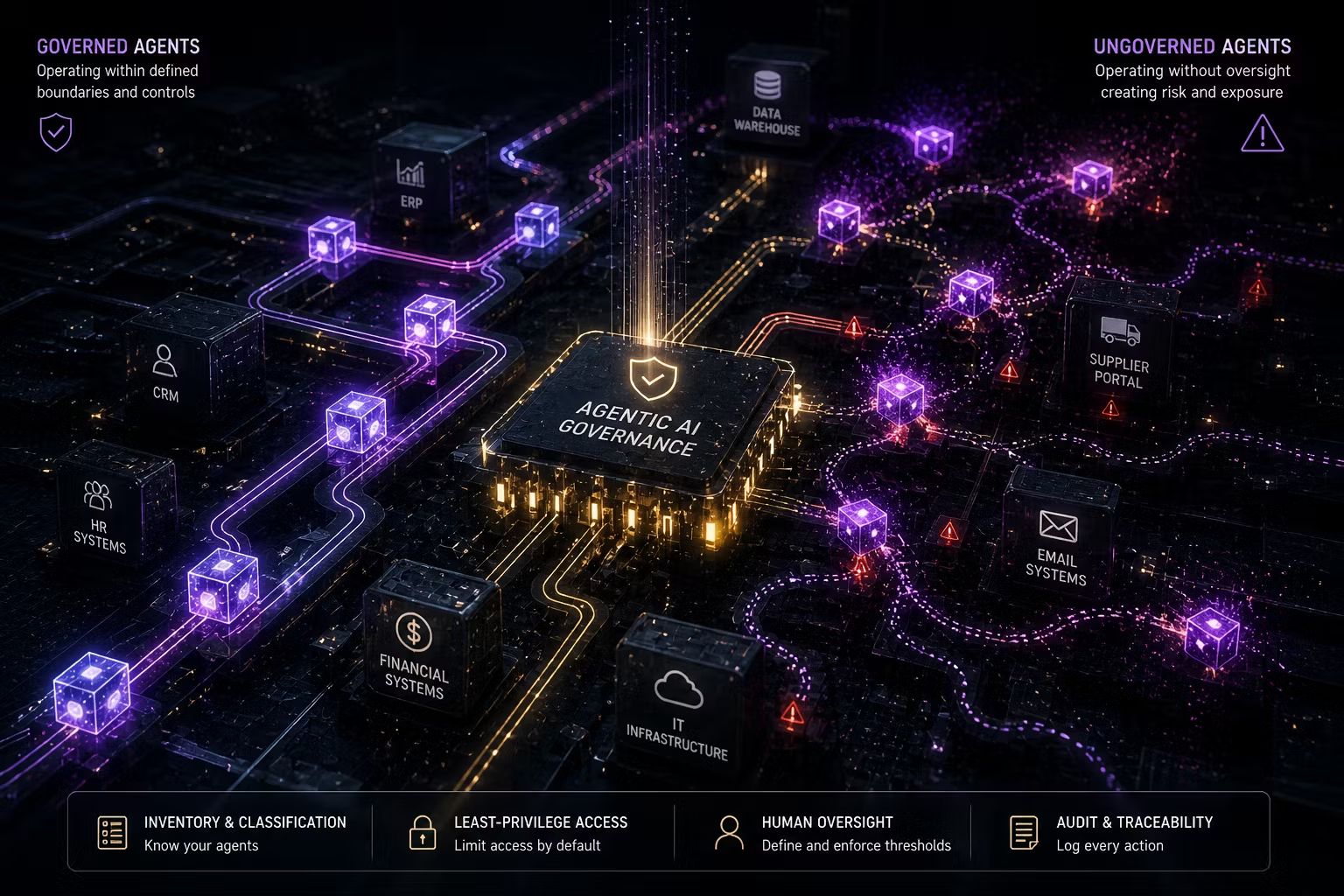

For Australian organisations with EU exposure, the governance work required for EU AI Act compliance overlaps substantially with the governance work that responsible AI practice requires in any jurisdiction. Building an AI inventory that maps systems to risk categories, conducting structured risk assessments before deployment, establishing human oversight mechanisms and maintaining technical documentation are not compliance costs. They are the infrastructure of an AI programme that is built to last.

The EU AI Act is the most significant AI governance obligation Australian organisations with EU operations currently face. It will not be the last.

Key Takeaways

- 2 August 2026 is the enforcement date for Annex III high-risk AI system obligations under the EU AI Act. This applies to any organisation whose AI systems touch EU users, regardless of where the organisation is headquartered

- High-risk AI includes systems used in employee performance management, recruitment, credit scoring, creditworthiness assessment, biometric categorisation and certain customer access decisions

- Deploying a third-party AI tool does not transfer compliance responsibility to the vendor. Deployers carry their own obligations, including monitoring, instructions compliance and in some cases Fundamental Rights Impact Assessments

- Fines for high-risk system violations reach up to €15 million or 3% of global annual turnover. Fines for prohibited practices reach up to €35 million or 7%. The penalty regime for most obligations has been in effect since August 2025

- The Digital Omnibus proposal could extend the deadline to December 2027, but it has not been enacted as of May 2026. Plan for August 2026

- Most organisations are behind on the compliance work required before the deadline. A risk classification audit, conformity assessment, technical documentation and quality management system cannot be completed from a standing start in a few weeks

How Trusenta Can Help

Compliance Management: Trusenta's Compliance Management platform maps controls across the EU AI Act, ISO 42001, NIST AI RMF and the Australian Guidance for AI Adoption simultaneously, so organisations with multi-jurisdictional obligations can generate compliance evidence across frameworks without duplicating effort. For organisations facing both the EU AI Act August deadline and Australia's Privacy Act ADM obligations in December 2026, the platform approach significantly reduces the compliance burden.

Risk Management: The Annex III risk classification audit, Fundamental Rights Impact Assessment and ongoing monitoring obligations all require structured risk assessment infrastructure. Trusenta's Risk Management module provides the AI-specific risk taxonomy, scoring methodology and treatment plan documentation needed to satisfy the EU AI Act's risk management system requirements.

AI Governance Services: For organisations that need expert support to complete the Annex III classification audit, technical documentation, conformity assessment preparation and quality management system design before 2 August, Trusenta's AI Governance engagements are structured to deliver these outputs within the available compliance window.

Conclusion

The EU AI Act's August 2026 deadline is not a future risk to monitor. It is an approaching obligation that requires governance work your organisation needs to have started. The technical documentation, risk management systems, conformity assessments and human oversight mechanisms required for Annex III high-risk AI systems take months to build properly. The organisations that treat this as a governance infrastructure investment rather than a last-minute compliance sprint will be in a fundamentally different position when 2 August arrives. The deadline does not move for organisations that were not ready. The enforcement infrastructure is already in place.

Author

Mark brings a rare blend of C-suite leadership and hands-on consulting experience to Trusenta. As a former SVP of Services, SVP of Business Operations, Managing Director and CIO, he brings a breadth of experience to his specialty: guiding organisations through AI strategy, governance and adoption, and bridging ambition with practical execution. His focus is on helping clients embed AI responsibly, at scale and in service of real business outcomes.

https://www.linkedin.com/in/consult-mmiller/