AI Governance

The AI Industry Is Built on Hidden Labour. What That Means for Your Vendor Governance.

Karen Hao's Empire of AI documents the Kenyan data workers earning a few dollars a day to make ChatGPT safe for Western consumers. The enterprise governance lesson from this is not about sympathy. It is about vendor accountability, data provenance and the legal exposure Australian organisations are carrying without knowing it.

April 7, 2026

7 min read

In the Diary Of A CEO episode published 26 March 2026, Karen Hao described a woman named Winnie who worked for a platform called Remotasks, the back end for Scale AI, one of the primary contractors providing training data to the AI industry. Winnie was one of hundreds of thousands of workers in Kenya, Venezuela and other countries doing the foundational data annotation work that makes AI models function: labelling images, moderating toxic content, teaching models what dialogue looks like. For this work, some earned a few dollars a day. Many encountered deeply distressing content with minimal psychological support. None had meaningful recourse when the work disappeared or conditions changed.

Hao is clear that this is not incidental to how AI companies work. It is structural. The business model depends on access to enormous volumes of cheap labour. AI companies extracted that labour from vulnerable workers in the Global South the same way historic empires extracted resources from the territories they occupied: at scale, at minimal cost, and with the narrative that the enterprise ultimately served the greater good.

This is Part 2 of our series on what Karen Hao got right and what it means for Australian organisations. Part 1 examined the AGI definition problem and the governance vacuum at the industry level. This post examines the labour and supply chain dimension, and the specific vendor governance risks it creates for Australian enterprises.

The Three Resource Extractions Hao Documents

Hao's framework is empire. The parallel she draws is not rhetorical. Empires extract resources from people who do not consent and do not proportionately benefit. AI companies, she argues, do the same across three categories.

Data from creators. The training datasets underlying most major AI models were built by scraping the internet without the consent of the writers, artists, photographers, journalists and other creators whose work was used. The legal status of this extraction is actively contested in courts in Australia and globally. The copyright exposure from deploying AI tools trained on contested data is real and not yet fully understood.

Labour from data workers. Hao's reporting on data annotation workers in Kenya, Venezuela and the Philippines documents people earning wages far below what the value they create would justify, doing psychologically demanding work with minimal stability or recourse. This is not a problem confined to the companies doing the annotation. It is a problem in the supply chain of every enterprise using the AI models those workers trained.

Natural resources from communities. Hao's book documents Chilean communities whose water supply is strained by data centres built to power AI development, and communities near AI infrastructure in Memphis experiencing increased rates of respiratory illness from associated power generation. The environmental and community costs of the AI infrastructure your organisation's vendors depend on are borne by people who have no relationship with your business and no voice in the decisions being made.

Why This Is an Enterprise Governance Problem, Not Just an Ethics Problem

The question for Australian enterprises is not whether they agree with Hao's analysis of the AI industry. The question is what legal and reputational exposure the practices she documents create for organisations deploying AI from these supply chains.

Three Australian-specific exposures are worth being direct about.

Vendor accountability under Australian consumer law. If an AI tool your organisation deploys produces a discriminatory or misleading outcome because of how it was trained, the accountability for that outcome does not rest with the vendor. The Australian Consumer Law, APRA prudential standards and ASIC conduct obligations all establish that the organisation deploying the tool is accountable for its outputs. Understanding the training data provenance of your AI vendors is not a philosophical exercise. It is a due diligence requirement for managing your organisation's legal exposure.

Privacy Act implications from contested training data. The Privacy Act amendments arriving in December 2026 require disclosure about substantially automated decisions affecting individuals. If those decisions are made by a model trained on personal data extracted without consent, it becomes significantly harder to establish that your organisation has adequately disclosed the nature of the automated decision system. The Privacy Commissioner's guidance on AI and the Privacy Act, published in 2024, is explicit that existing obligations apply regardless of whether the AI system was internally built or externally sourced.

Modern slavery and supply chain obligations. Australia's Modern Slavery Act 2018 requires organisations with annual consolidated revenue of $100 million or more to report on modern slavery risks in their operations and supply chains. The data annotation labour practices Hao documents, involving workers earning minimal wages with limited worker protections, are the kind of supply chain risk that warrants assessment and, where the risk is identified, disclosure. Most Australian modern slavery statements do not yet address AI supply chains. That gap is likely to attract regulatory and civil society attention as the practices become more widely understood.

What Genuine Vendor Governance Looks Like

The answer to the vendor governance risk Hao documents is not to avoid using AI. The productivity case for AI tools is real, and the competitive pressure is genuine. The answer is to govern the vendor relationship with the same rigour your organisation applies to other significant third-party relationships.

That means asking vendors specific questions about training data provenance: what data was used, from what sources, under what licence or consent framework, and what the vendor's position is on the legal challenges that data extraction currently faces.

It means reviewing your AI vendor contracts to confirm that accountability for governance outcomes, including outcomes that emerge from training data or model design rather than your organisation's configuration, is clearly allocated rather than defaulted entirely to you.

It means including AI vendors in your third-party risk assessment processes with a risk tier that reflects both the sensitivity of what those systems do and the supply chain practices underlying the technology.

And it means monitoring vendor practices over time, not just at the point of procurement. AI companies are changing rapidly. A vendor that meets your governance standards today may not meet them in twelve months if they change their training approach, modify their data governance policies or face adverse findings in litigation that changes the legal landscape around their data practices.
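As a rough illustration of how the four practices above could be captured in a vendor register, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the `AIVendorRecord` class, the question keys, the 1 to 3 scoring scale, the escalation rule and the review intervals are hypothetical and would need to be replaced by your organisation's own third-party risk methodology.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta

# Hypothetical keys for the provenance questions described above.
PROVENANCE_QUESTIONS = (
    "data_used",
    "data_sources",
    "licence_or_consent_framework",
    "position_on_legal_challenges",
)

# Assumed review intervals per risk tier (illustrative values, not a standard).
REVIEW_INTERVAL_DAYS = {"high": 90, "medium": 180, "low": 365}


@dataclass
class AIVendorRecord:
    name: str
    system_sensitivity: int         # 1 (low) to 3 (high), assessed internally
    supply_chain_risk: int          # 1 (low) to 3 (high), assessed internally
    accountability_allocated: bool  # is accountability clearly allocated in the contract?
    last_reviewed: date
    provenance_answers: dict = field(default_factory=dict)

    def unanswered_questions(self):
        """Return the provenance questions the vendor has not yet answered."""
        return [q for q in PROVENANCE_QUESTIONS if not self.provenance_answers.get(q)]

    def risk_tier(self):
        """Tier reflects the higher of system sensitivity and supply chain risk."""
        score = max(self.system_sensitivity, self.supply_chain_risk)
        # Assumed rule: open provenance questions or unallocated
        # accountability escalate the vendor by one level.
        if self.unanswered_questions() or not self.accountability_allocated:
            score = min(score + 1, 3)
        return {1: "low", 2: "medium", 3: "high"}[score]

    def review_due(self, today):
        """True once the tier's review interval has elapsed since the last review."""
        interval = timedelta(days=REVIEW_INTERVAL_DAYS[self.risk_tier()])
        return today >= self.last_reviewed + interval


# Example usage with a made-up vendor.
vendor = AIVendorRecord(
    name="ExampleModelCo",
    system_sensitivity=2,
    supply_chain_risk=1,
    accountability_allocated=True,
    last_reviewed=date(2026, 1, 15),
    provenance_answers={
        "data_used": "Vendor-supplied summary",
        "data_sources": "Vendor-supplied summary",
    },
)
print(vendor.name, vendor.risk_tier(), vendor.unanswered_questions())
```

The design choice worth noting is that the tier is recalculated from current answers each time rather than stored once at procurement, which is one simple way to reflect the point that vendor practices need to be monitored over time.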

AI Governance as an Innate Organisational Capability

Hao's call to action in the episode is for bottom-up governance: enterprises, consumers and civil society organisations choosing to hold AI companies to higher standards rather than waiting for regulation to mandate it. For Australian enterprises, that bottom-up governance begins with treating vendor governance as a genuine component of your AI risk framework, not a procurement formality completed at the point of signing a contract.

The organisations that build this capability now, making vendor accountability, data provenance assessment and supply chain transparency part of how they adopt AI, will be in a materially different position when regulatory requirements become more specific and when civil society pressure on AI supply chain practices intensifies. That pressure is building. The Karen Hao episode is the most public articulation yet of why it is legitimate and why it will not diminish.

Your organisation does not need to wait for a regulator to tell it to govern its AI vendors rigorously. The case for doing so is already complete. The capability to do it exists. The question is whether your organisation treats vendor governance as an innate part of how it adopts AI or as a box to check when required.

Key Takeaways

- Karen Hao documents three resource extractions underlying AI: data from creators without consent, labour from low-wage workers in the Global South and natural resources from communities hosting AI infrastructure

- Under Australian law, accountability for AI-driven outcomes stays with the deploying organisation, not the vendor. Training data provenance is a vendor due diligence requirement, not a philosophical position

- Privacy Act ADM obligations arriving December 2026 apply regardless of whether the AI system was internally built or externally sourced

- Australia's Modern Slavery Act may require disclosure of AI data annotation supply chains for organisations over the $100 million reporting threshold

- Genuine vendor governance means asking specific provenance questions at procurement, allocating accountability clearly in contracts, including AI vendors in third-party risk processes and monitoring vendor practices over time

- Bottom-up governance from enterprises is Hao's prescribed response. For Australian organisations, that governance starts with treating AI vendor risk as real and consequential

How Trusenta Can Help

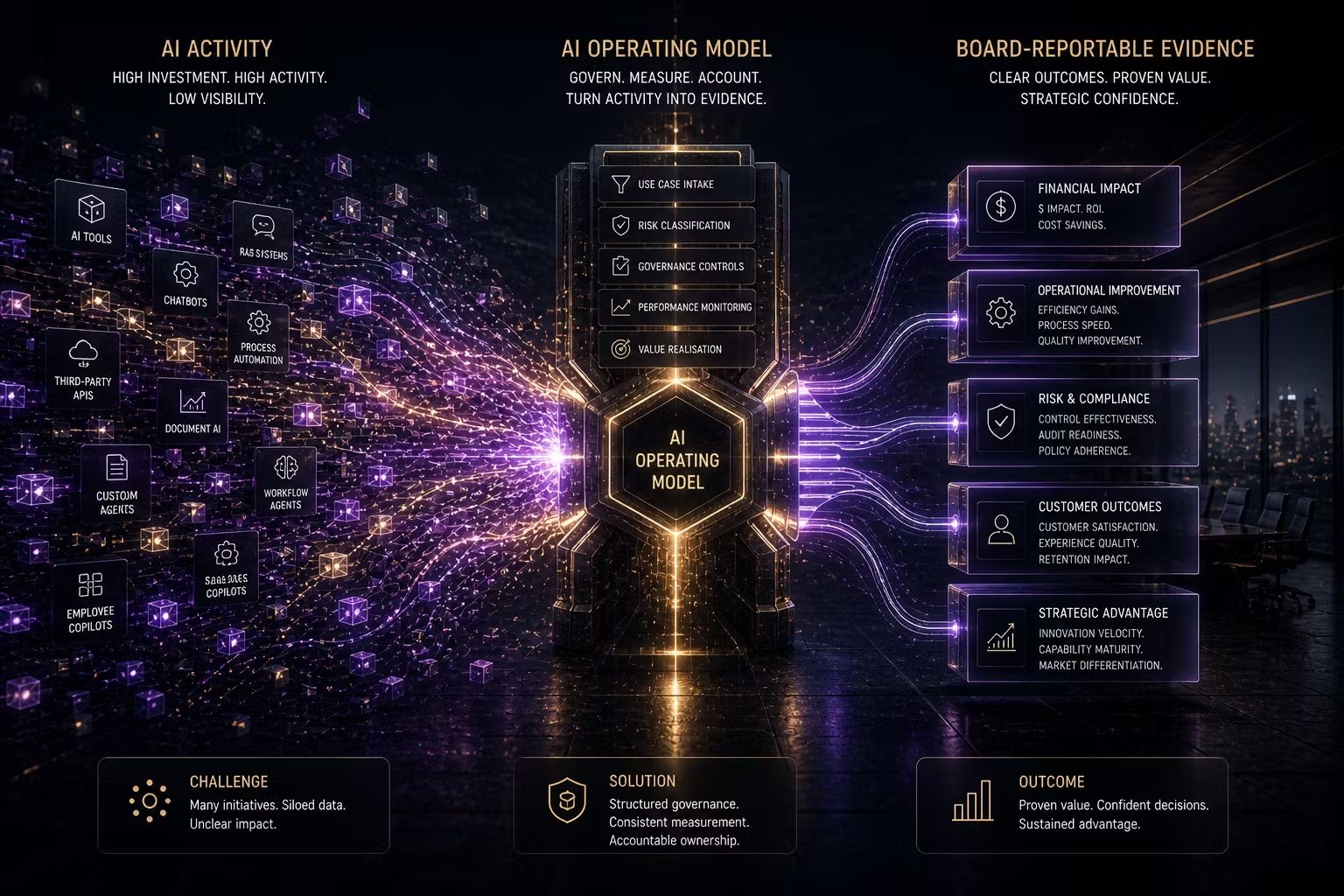

AI Governance: Trusenta's AI Governance platform provides the use-case intake and risk assessment infrastructure to register AI systems, document their vendor relationships and classify their risk profile, including the vendor governance and data provenance dimensions that the Karen Hao episode brings into focus.

Risk Management: Trusenta's Risk Management module provides the AI-specific risk taxonomy and scoring methodology to assess vendor supply chain risk, data provenance exposure and accountability allocation consistently across your AI portfolio.

AI Governance Maturity Uplift: For organisations that have some governance foundations in place but have not yet systematically addressed vendor governance and supply chain risk, this engagement designs and implements the frameworks that make vendor accountability a genuine operating discipline rather than a procurement formality.

Author

Mark brings a rare blend of C-suite leadership and hands-on consulting experience to Trusenta. A former SVP of Services, SVP of Business Operations, Managing Director and CIO, he brings broad experience to his specialty of guiding organisations through AI strategy, governance and adoption, bridging ambition with practical execution. His focus is on helping clients embed AI responsibly, at scale and in service of real business outcomes.

https://www.linkedin.com/in/consult-mmiller/