AI Governance

Sovereign AI Australia: What the National AI Plan Means for Your Compliance Programme Right Now

The Australian Government's March 2026 National AI Plan expectations, the Defence AI policy and the Privacy Act ADM obligations arriving in December are creating a layered sovereign AI compliance framework faster than most compliance teams have planned for. This post maps what sovereign AI means for Australian enterprise compliance and how to build governance infrastructure that satisfies multiple obligations simultaneously.

May 11, 2026

7 min read

On 23 March 2026, the Australian Government released formal expectations for data centre and AI infrastructure developers as part of the National AI Plan. The same month, the Department of Defence published a binding policy on responsible AI use that applies across the Australian Defence Force (ADF) and the broader Defence portfolio. Combined with the Privacy Act automated decision-making (ADM) obligations arriving in December, these measures show Australia moving from voluntary AI guidance to a layered, enforceable sovereign AI framework faster than most compliance teams have planned for.

For compliance and risk leaders, sovereign AI in Australia is no longer a policy discussion or a geopolitical term. It is an active compliance obligation at the intersection of privacy law, critical infrastructure regulation, national security obligations and AI governance frameworks. Organisations without structured assurance processes in place are already behind.

This post maps what sovereign AI means for Australian enterprise compliance specifically, what the emerging obligations require and how organisations can build the governance infrastructure to satisfy them without creating a parallel workstream that competes with existing compliance priorities.

What Sovereign AI Actually Means for Australian Enterprises

The term sovereign AI entered widespread circulation in 2024 and 2025 as national governments sought strategic independence from foreign AI infrastructure. Australia's National AI Plan applies this logic directly, linking AI to the Future Made in Australia agenda, domestic data residency, energy security and the workforce obligations associated with AI infrastructure.

For enterprises, sovereign AI in the Australian context means four things.

Data residency and sovereignty requirements. The National AI Plan expects data used in AI systems for sensitive government and critical infrastructure applications to remain within Australian jurisdiction. For organisations in the government supply chain, this creates specific data architecture requirements. For those using AI vendors with offshore infrastructure, it creates a vendor governance obligation: understanding where data processed by AI systems physically resides and which legal jurisdiction governs it.
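A vendor register makes this obligation checkable. The Python sketch below is a hypothetical illustration, not a prescribed format: the `AIVendorRecord` fields, the use of ISO 3166 country codes and the `offshore_exposures` helper are all assumptions introduced here. It flags sensitive-use systems whose data is processed anywhere other than Australia.

```python
from dataclasses import dataclass


# Hypothetical vendor register entry; the field names are illustrative assumptions.
@dataclass(frozen=True)
class AIVendorRecord:
    system: str
    vendor: str
    processing_regions: tuple[str, ...]  # ISO 3166 alpha-2 codes where data is processed
    sensitive_use: bool  # e.g. a government or critical infrastructure workload


def offshore_exposures(register: list[AIVendorRecord]) -> list[tuple[str, tuple[str, ...]]]:
    """Return (system, offshore regions) for sensitive-use systems whose data is
    processed anywhere other than Australia ("AU")."""
    findings = []
    for rec in register:
        offshore = tuple(r for r in rec.processing_regions if r != "AU")
        if rec.sensitive_use and offshore:
            findings.append((rec.system, offshore))
    return findings


# Example register; the systems and vendors are invented for illustration.
register = [
    AIVendorRecord("claims-triage", "Vendor A", ("AU",), True),
    AIVendorRecord("chat-assistant", "Vendor B", ("AU", "US"), True),
    AIVendorRecord("marketing-copy", "Vendor C", ("US",), False),
]
print(offshore_exposures(register))  # [('chat-assistant', ('US',))]
```

Processing location is only a starting point. It does not by itself settle which legal jurisdiction governs the data, so a fuller register would also record the contracting entity and governing law for each vendor.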

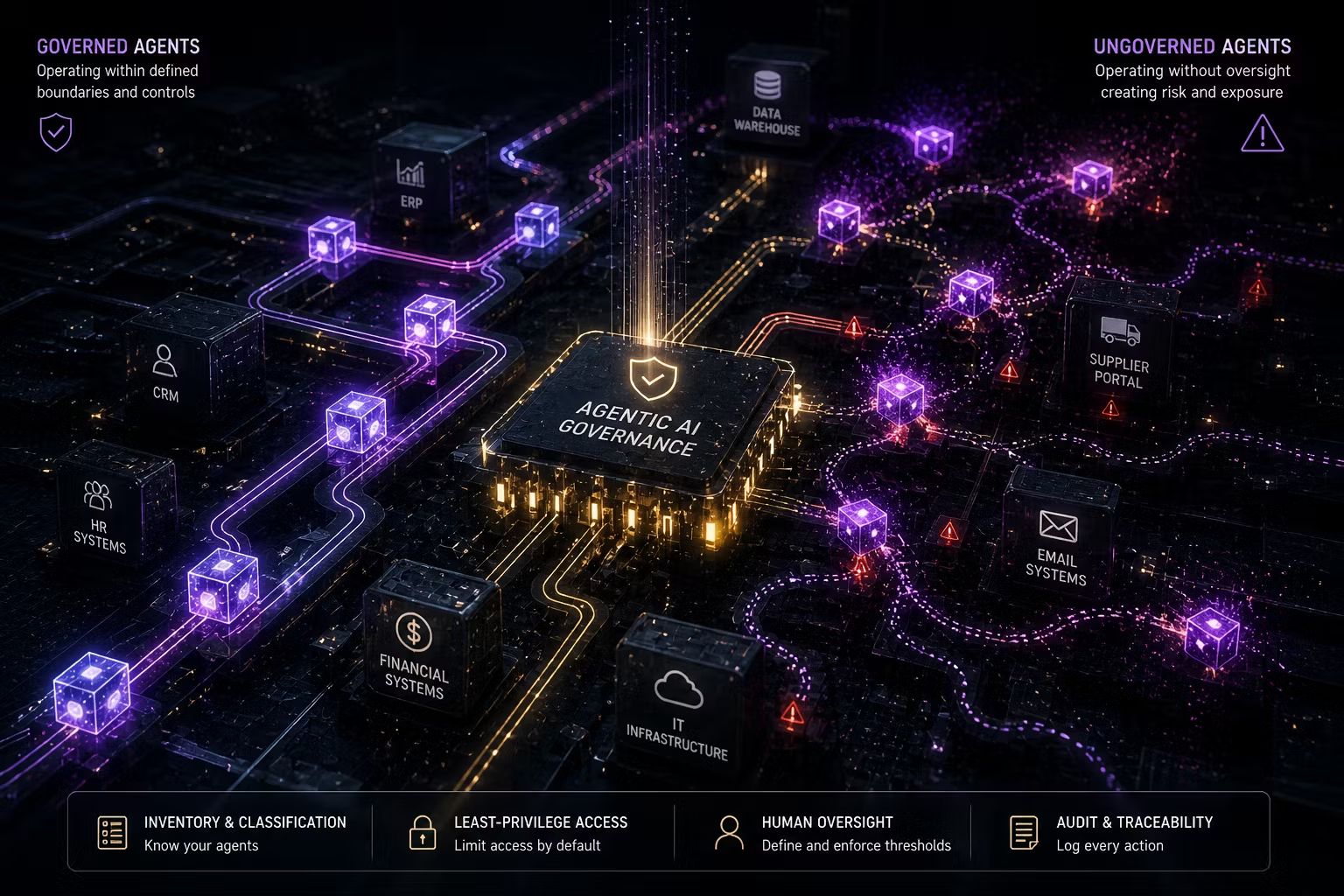

Security of Critical Infrastructure Act obligations. For organisations in critical infrastructure sectors such as financial services, healthcare, energy, water, transport, communications and data storage, the Security of Critical Infrastructure Act 2018 (SOCI Act) creates obligations around how AI systems that could affect critical assets are managed and secured. AI agents and automated decision systems operating within critical infrastructure now sit within this framework.

Defence supply chain obligations. The Department of Defence's March 2026 Responsible AI in Defence policy is a binding governance framework. Organisations that supply to Defence or that are part of the broader defence industrial base need to understand how their AI governance frameworks align with this policy's requirements around accountability, explainability and human oversight.

National AI Plan data centre expectations. The March 2026 expectations for data centre and AI infrastructure developers set requirements covering energy, water, workforce and national security that organisations building or operating AI infrastructure must now satisfy. For compliance leaders in this space, the cumulative effect is a new category of risk that does not fit neatly into any single existing framework.

The Privacy Act ADM Obligation: What Sovereign AI Compliance Requires by December 2026

The most immediately actionable sovereign AI compliance obligation for most Australian enterprises is the Privacy Act automated decision-making (ADM) requirement, which takes effect on 10 December 2026. It is not confined to government or critical infrastructure. Any organisation using AI to make, or substantially contribute to, decisions that significantly affect individuals needs to disclose this in its privacy policy. In scope are decisions such as credit approvals, employment decisions, insurance risk assessments, healthcare triage and content personalisation that affects access to services.

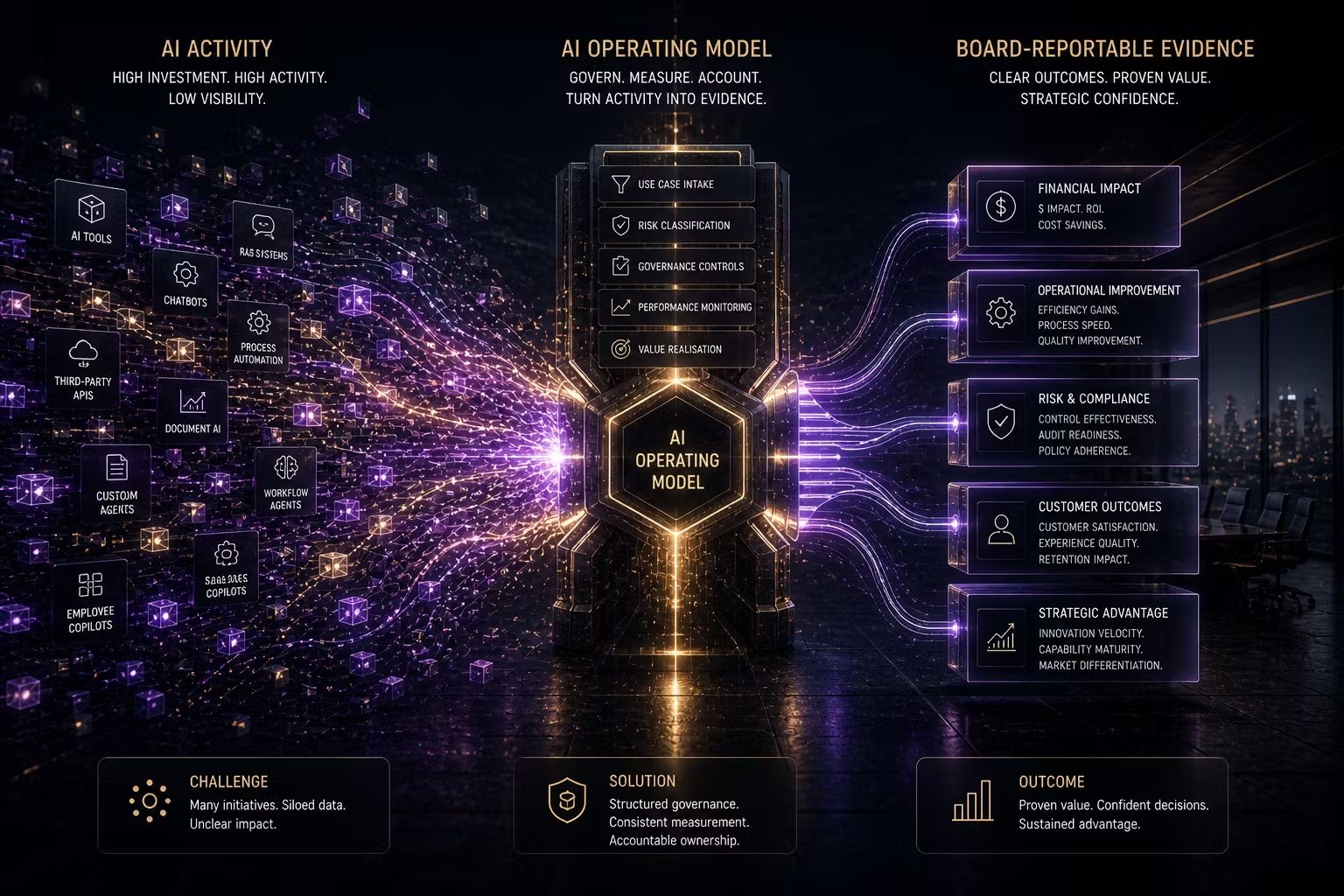

The practical work this requires is identical to the work an AI governance framework requires: build an inventory of AI systems making or influencing decisions about individuals, classify which meet the substantially automated threshold, document what data those systems use and how decisions are made, and ensure that documentation is current enough to be incorporated into a privacy policy disclosure.
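The inventory-and-classify step can be sketched as a small data model. This is a minimal, hypothetical Python example: the `AISystem` fields, the three `Automation` levels and the `in_adm_disclosure_scope` rule are assumptions for illustration, not the statutory test. Where the substantially automated threshold sits for a given system is a legal judgement; the point is that recording the same fields for every system produces disclosure-ready documentation as a by-product.

```python
from dataclasses import dataclass, field
from enum import Enum


class Automation(Enum):
    ADVISORY = "advisory"                # one input among many to a human decision-maker
    HUMAN_CONFIRMED = "human_confirmed"  # a human routinely approves the system's output
    FULLY_AUTOMATED = "fully_automated"  # the system makes the decision itself


@dataclass
class AISystem:
    name: str
    owner: str                        # accountable individual or role
    decision: str                     # plain-language description of the decision
    uses_personal_information: bool
    significant_effect: bool          # could significantly affect an individual
    automation: Automation
    data_sources: list[str] = field(default_factory=list)


def in_adm_disclosure_scope(s: AISystem) -> bool:
    """Illustrative screening rule (an assumption, not the legal test): flag systems
    that use personal information, significantly affect individuals and are more
    than advisory."""
    return (
        s.uses_personal_information
        and s.significant_effect
        and s.automation is not Automation.ADVISORY
    )


def disclosure_register(inventory: list[AISystem]) -> list[dict]:
    """Collect the fields a privacy policy disclosure would draw on for in-scope systems."""
    return [
        {"system": s.name, "owner": s.owner, "decision": s.decision,
         "data": sorted(s.data_sources)}
        for s in inventory
        if in_adm_disclosure_scope(s)
    ]


# Example inventory; all entries are invented for illustration.
inventory = [
    AISystem("credit-scoring", "Head of Credit Risk",
             "Approve or decline personal loan applications", True, True,
             Automation.FULLY_AUTOMATED, ["bureau data", "application form"]),
    AISystem("resume-screen", "HR Operations", "Shortlist job applicants",
             True, True, Automation.HUMAN_CONFIRMED, ["CVs"]),
    AISystem("demand-forecast", "Supply Chain", "Forecast warehouse stock levels",
             False, False, Automation.FULLY_AUTOMATED, ["sales history"]),
]
for row in disclosure_register(inventory):
    print(row["system"], "->", row["decision"])
```

Keeping the owner and data sources on the same record means the privacy disclosure, a governance audit and a procurement questionnaire can all be answered from one inventory.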

Organisations that approach this as a privacy compliance exercise in isolation will complete it twice: once for the privacy policy disclosure and again when the next regulatory or procurement request asks for the same information in a governance context. Trusenta's Compliance Management platform is designed to eliminate this duplication. Controls mapped against the Privacy Act ADM requirements also map against ISO 42001 Annex A controls, the NIST AI RMF GOVERN and MAP functions and the Guidance for AI Adoption's accountability requirements. Build it once. Use it across every framework that asks for the same evidence.
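The "build it once" idea amounts to a many-to-many mapping between internal controls and framework requirements. The sketch below is a generic, hypothetical illustration and not a description of how Trusenta's platform works: the control names are invented and the framework references are descriptive labels rather than official clause numbers. Inverting the mapping shows, for each framework, which existing controls (and therefore which existing evidence) already answer it.

```python
from collections import defaultdict

# Hypothetical control library: each internal control lists the framework
# requirements it provides evidence for. Labels are illustrative, not clause IDs.
CONTROLS = {
    "CTRL-01 AI system inventory": [
        "Privacy Act ADM disclosure", "ISO 42001 Annex A",
        "NIST AI RMF GOVERN", "NIST AI RMF MAP",
    ],
    "CTRL-02 Decision documentation": [
        "Privacy Act ADM disclosure", "ISO 42001 Annex A", "NIST AI RMF MAP",
    ],
    "CTRL-03 Named accountable owner": [
        "ISO 42001 Annex A", "NIST AI RMF GOVERN",
        "Guidance for AI Adoption accountability",
    ],
}


def evidence_by_framework(controls: dict[str, list[str]]) -> dict[str, list[str]]:
    """Invert control -> requirements into requirement -> controls, so evidence
    gathered once per control can be reused for every framework that asks for it."""
    index: dict[str, list[str]] = defaultdict(list)
    for control, requirements in controls.items():
        for req in requirements:
            index[req].append(control)
    return dict(index)


index = evidence_by_framework(CONTROLS)
for requirement, ctrl_ids in sorted(index.items()):
    print(f"{requirement}: {', '.join(ctrl_ids)}")
```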

Sovereign AI and the ISO 42001 Alignment

ISO 42001, formally AS ISO/IEC 42001:2023 in Australia, provides the management system architecture within which sovereign AI governance obligations can be operationalised. The standard's requirements for AI use case registration, risk assessment, accountability structures and ongoing monitoring directly address the accountability and oversight expectations embedded in the National AI Plan, the Defence AI policy and the emerging critical infrastructure guidance.

For organisations already pursuing ISO 42001 certification, the sovereign AI compliance obligations are not additional work. They are contextual requirements that inform the scope and content of the AI Management System. The AI systems in scope for sovereign AI compliance are a subset of the AI systems in scope for ISO 42001. The controls required overlap substantially. The organisations that have built their AIMS to ISO 42001 specifications are building the same infrastructure that sovereign AI compliance requires.

For organisations that have not yet started an ISO 42001 journey, sovereign AI compliance provides a compelling argument for doing so. It is the only independently certifiable AI governance standard available in Australia, and it provides the documented, auditable evidence trail that satisfies regulatory scrutiny in a way that policy documents alone cannot.

The APRA and ASIC Dimension

ASIC's Report 798 reviewed AI governance practices at 23 Australian financial services (AFS) and credit licensees and found that some may be adopting AI faster than their governance arrangements are evolving. ASIC's concern is not academic. In the sovereign AI context, a licensee creates a specific regulatory exposure if its AI relies on offshore data processing, makes credit decisions using models trained on data outside Australian jurisdiction, or operates without the accountability documentation ASIC expects.

APRA's prudential standards create equivalent obligations. Regulated entities must understand and manage the risks of the AI systems they deploy, whether those systems are built internally or supplied by a vendor. When an offshore AI vendor's model processes Australian customer data under foreign jurisdiction, the prudential obligation does not sit with the vendor. It sits with the Australian licence holder.

The governance infrastructure required to satisfy APRA prudential standards in an AI-enabled environment is the same infrastructure required for sovereign AI compliance: AI system inventory, data provenance documentation, vendor governance frameworks and ongoing monitoring of deployed AI systems.

What This Means for Compliance Leaders Right Now

At Trusenta, the organisations we work with that are furthest ahead on sovereign AI compliance are not those that have built a separate sovereign AI programme. They are those that have built an AI governance framework rigorous enough that sovereign AI compliance is a configuration of that framework, not a new workstream.

The practical implication is clear: sovereign AI compliance is not a reason to build a parallel governance structure. It is a reason to build your AI governance framework with sufficient rigour that it can satisfy multiple overlapping obligations simultaneously. The Privacy Act ADM requirements arriving in December, the ISO 42001 certification process, the APRA and ASIC governance expectations and the National AI Plan accountability requirements all draw from the same foundational capability: an organisation that knows what AI systems it is running, what those systems do, who owns them and how they are monitored.

Building that capability now, before December, is the compliance programme. Everything else is a reporting exercise on top of it.

Key Takeaways

- Sovereign AI in Australia is no longer just a policy term. The Australian Government's March 2026 National AI Plan expectations and the Defence AI policy create active compliance obligations for organisations in the government supply chain and critical infrastructure sectors

- The Privacy Act ADM requirements from December 2026 apply to most Australian enterprises using AI in decisions affecting individuals, not just government or critical infrastructure operators

- Four sovereign AI obligations apply to different enterprise segments: data residency requirements, SOCI Act obligations, Defence supply chain requirements and National AI Plan data centre expectations

- ISO 42001 provides the management system architecture within which sovereign AI compliance can be operationalised: organisations building an AI Management System to ISO 42001 are building the same infrastructure sovereign AI compliance requires

- APRA and ASIC governance expectations apply to AI regardless of whether systems are internally built or vendor-supplied, and regardless of where data is processed by those systems

- Building a single AI governance framework rigorous enough to satisfy multiple overlapping obligations is materially more efficient than treating each regulatory requirement as a separate compliance project

How Trusenta Can Help

Compliance Management: Trusenta's Compliance Management platform maps controls across the Privacy Act ADM requirements, ISO 42001, the NIST AI RMF and the Guidance for AI Adoption simultaneously, generating audit-ready evidence across all frameworks without duplicating effort. For organisations facing sovereign AI obligations alongside existing compliance programmes, the platform approach is what makes the compliance infrastructure sustainable.

AI Governance: The AI system inventory, data provenance documentation and accountability structure that sovereign AI compliance requires are built on Trusenta's AI Governance platform, which provides foundational visibility into which AI systems are running and who owns them. No compliance programme can succeed without that visibility.

AI Governance Foundations: For organisations that need to build the governance infrastructure for sovereign AI compliance from the ground up, this 10-day engagement establishes the intake processes, risk classification framework and accountability structures that build compliance in from the outset, rather than retrofitting it after obligations take effect.

Conclusion

Sovereign AI is the Australian governance story of 2026. The policy landscape that was emerging twelve months ago is now a set of active compliance obligations with specific deadlines and enforcement mechanisms. Organisations that treat this as a reason to build rigorous, multi-framework AI governance capability will be well placed to meet regulatory requirements they cannot yet anticipate. Organisations that treat each emerging obligation as a separate compliance project will spend the next three years rebuilding the same foundational infrastructure multiple times. One approach is sustainable. The other is not.

Author

With over 30 years of experience delivering real technology outcomes, he combines strategic insight with deep technical expertise across enterprise, cloud and AI. At Trusenta, he helps organisations move beyond AI hype to accountable, sustainable impact.

https://www.linkedin.com/in/shanecoetser/