
Boards Are Asking for Proof That AI Is Working. Here Is Why Most Organisations Cannot Provide It.

In 2026, boards have stopped asking whether their organisations are using AI and started asking for proof that it is delivering returns. Most organisations are reaching for consulting firm surveys to answer that question. This post explains why that is the wrong answer and what the AI operating model actually looks like when it is built to produce evidence your organisation owns.

May 13, 2026 · 7 min read

Venky Ganesan, a partner at Menlo Ventures, put it plainly to Axios earlier this year: "2026 is the show me the money year for AI." That observation does not require a survey to validate. Walk into any CFO conversation or board strategy session in Australia right now and the question has visibly changed. It used to be "are we doing AI?" It is now "prove it is working."

Most organisations are not ready for that question. Not because they lack ambition or investment, but because they have confused deploying AI with governing it. They have the tools. They do not have the measurement infrastructure to demonstrate what those tools are actually producing.

The instinctive response is to reach for consulting firm research. A survey finding that governance-mature organisations outperform their peers. A benchmark showing AI leaders growing faster. These feel like evidence. They are not. A board asking for proof that your AI investment is working is not asking how governance-mature organisations perform in general. It is asking what your organisation has to show for the specific investment it made. No external survey can answer that. Only your organisation's own data can.

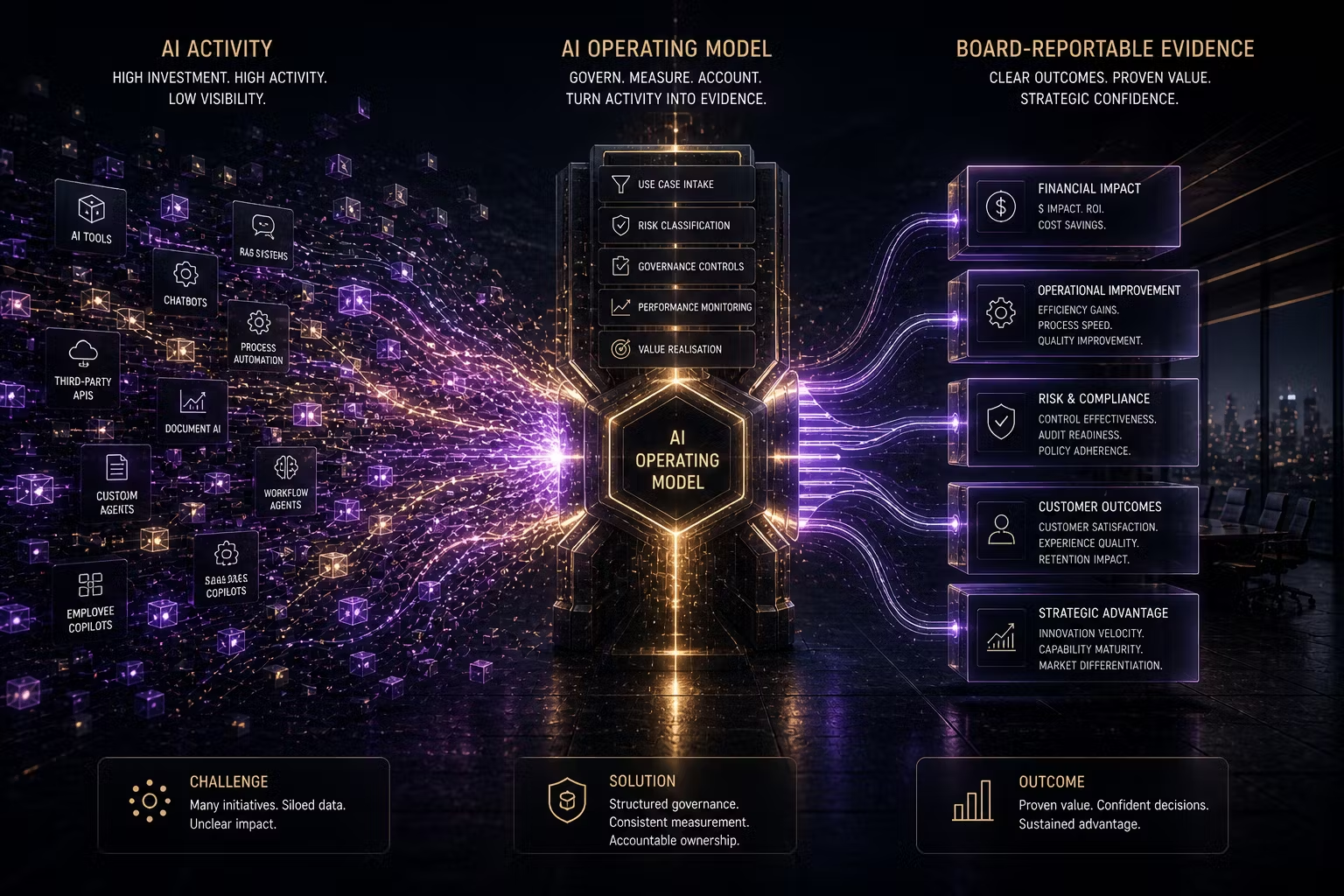

This is what the AI operating model exists to produce. Not compliance. Not risk mitigation, though it delivers both. Measurement infrastructure that your organisation owns and that produces board-reportable evidence of AI performance from your own operations rather than from an industry report.

Why Survey Data Is the Wrong Answer to a Board Question

The AI research market has become extraordinarily productive. Deloitte, McKinsey, Grant Thornton, PwC and a dozen others are publishing AI performance surveys simultaneously, each finding that governance-mature organisations outperform those still piloting, each concluding that you need the services the publishing firm provides. The directional finding is probably real. Well-governed organisations tend to be better managed across the board, and AI governance maturity is likely both a cause and a reflection of broader organisational discipline.

But the specific numbers are typically self-reported estimates, often from respondents with a commercial relationship to the firms that recruited them. The definitions of "governance maturity" vary between surveys. The sample composition is rarely transparent. And the correlation between governance and performance, even where it holds, cannot tell you whether your organisation's AI investment is delivering the specific outcomes you committed to when you funded it.

A board that has moved to "prove it" is not looking for benchmarks. It is looking for attribution. This system cost this much. It produced this outcome. We know because we measured it this way. That answer requires measurement infrastructure you built and own. It cannot be borrowed from a survey.

The Gap Between AI Activity and AI Value

The gap between deploying AI and demonstrating its value is structural, not attitudinal. Most organisations have invested significantly in AI adoption. The tools are running. Productivity gains are being felt. Processes are faster. But when the board asks for the P&L impact, the honest answer at most organisations is a qualified estimate rather than a documented measurement.

The reason is that AI was deployed without a value hypothesis. Not a general expectation that AI would improve productivity, but a specific, pre-deployment statement of what the system was supposed to produce, how that production would be measured and what the baseline was before the system was introduced. Without a pre-deployment baseline and a defined measurement approach, post-deployment attribution is guesswork dressed as analysis.

This is the operating model failure. Not a lack of AI ambition. Not a lack of AI capability. A lack of the governance infrastructure that converts AI deployment into AI evidence. The Klarna case makes the pattern concrete: its CEO publicly claimed AI was doing the work of hundreds of customer service agents, the claim was made without adequate measurement of what was being lost alongside what was being gained, and the company later had to reverse course as quality problems surfaced. The efficiency metric looked good. The quality metric was not being tracked.

The organisations getting this right are not those with better technology. They are those that defined what success looked like before they deployed, built the monitoring to track it during operation and have the accountability structures to own the outcome when it diverges from the plan.

What an AI Operating Model Actually Is

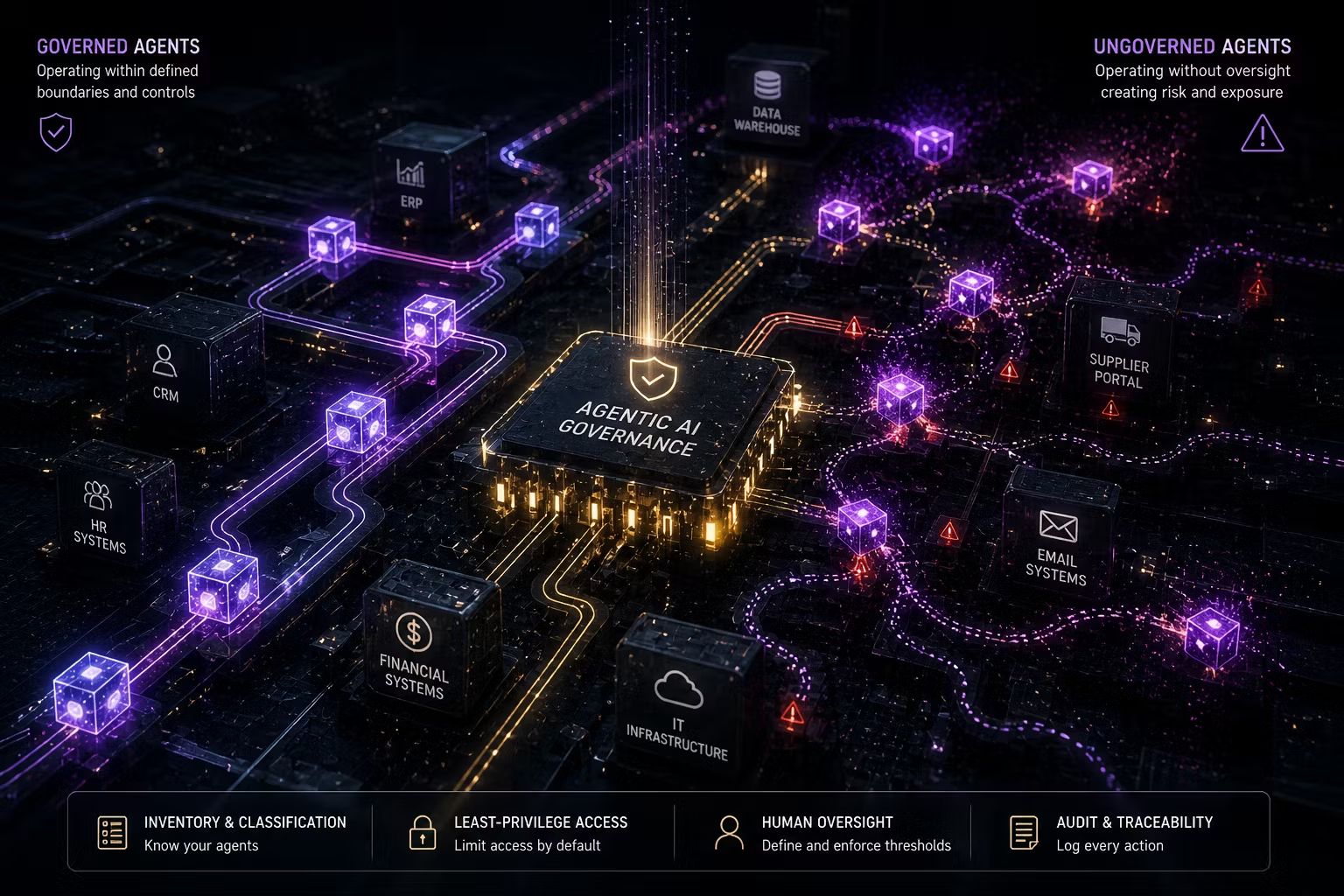

An AI operating model is the combination of governance structures, accountability frameworks, measurement disciplines and process designs that determine how an organisation decides to deploy AI, governs it in production and holds itself accountable for the outcomes it produces.

It is not a technology investment. It is not a policy document. It is not a Centre of Excellence that advises business units on tool selection. It is the operating architecture that makes AI a managed business asset rather than an uncoordinated portfolio of experiments.

An effective AI operating model has five components that work together.

Structured use case intake with value hypotheses. Every AI initiative enters through a defined channel before investment is committed. The intake process requires a value hypothesis: not "this will improve efficiency" but "this will reduce average handling time by X minutes in this function, saving approximately $Y per quarter, measurable against this baseline." Without this, post-deployment measurement is impossible and the board question cannot be answered.
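To make the arithmetic in such a hypothesis concrete, here is a minimal Python sketch. The class, its field names and every figure in the usage lines are hypothetical; the sketch only shows how the "X minutes" and "$Y per quarter" become a recorded baseline that a post-deployment measurement can be compared against. The realised-saving calculation is a naive before-and-after comparison that assumes contact volume and cost per minute stay constant, so in practice it would sit alongside other checks on attribution.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ValueHypothesis:
    """A hypothetical pre-deployment value hypothesis for one AI use case."""

    use_case: str
    owner: str                       # accountable business unit leader
    baseline_aht_minutes: float      # average handling time measured before deployment
    target_reduction_minutes: float  # the "X minutes" committed to at intake
    contacts_per_quarter: int        # volume the saving applies to (assumed constant)
    cost_per_minute: float           # fully loaded handling cost in dollars (assumed)

    def projected_quarterly_saving(self) -> float:
        """The "$Y per quarter" implied by the hypothesis."""
        return self.target_reduction_minutes * self.contacts_per_quarter * self.cost_per_minute

    def realised_quarterly_saving(self, measured_aht_minutes: float) -> float:
        """Naive before/after saving against the recorded baseline.

        Assumes volume and cost per minute are unchanged; it does not
        separate the AI system's effect from other changes in the period.
        """
        reduction = self.baseline_aht_minutes - measured_aht_minutes
        return reduction * self.contacts_per_quarter * self.cost_per_minute


hypothesis = ValueHypothesis(
    use_case="Claims enquiry assistant",  # hypothetical use case
    owner="Head of Claims",               # hypothetical owner
    baseline_aht_minutes=12.0,
    target_reduction_minutes=2.0,
    contacts_per_quarter=30_000,
    cost_per_minute=1.50,
)
print(f"Projected: ${hypothesis.projected_quarterly_saving():,.0f}")      # Projected: $90,000
print(f"Realised:  ${hypothesis.realised_quarterly_saving(10.5):,.0f}")  # Realised:  $67,500
```

The value of writing it down this way is that the baseline and the committed reduction exist before deployment, so the board conversation becomes a comparison of two recorded numbers rather than a retrospective estimate.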

Risk classification before deployment. Each AI system is assessed against a consistent framework before it goes into production. The framework distinguishes between low-risk productivity tools and high-risk systems making consequential decisions about individuals. The classification determines what oversight, documentation and monitoring the system requires, and ensures governance effort is proportionate rather than uniform across a portfolio of very different risk profiles.
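A classification framework of this kind can be expressed as a small rule set. The sketch below is illustrative only: the three intake questions, the tier boundaries and the oversight requirements attached to each tier are assumptions chosen for the example, not a published standard or Trusenta's own framework. What it shows is the structure: the tier is derived from the system's characteristics at intake, and the tier then determines the oversight the system receives.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass(frozen=True)
class AISystemProfile:
    """Hypothetical intake answers used to classify an AI system."""

    name: str
    affects_individuals: bool        # makes or shapes decisions about people
    human_reviews_each_output: bool  # a person approves each output before it is acted on
    uses_personal_information: bool


# Illustrative oversight requirements per tier (assumptions, not a standard).
OVERSIGHT = {
    RiskTier.LOW: {"review": "annual", "documentation": "register entry"},
    RiskTier.MEDIUM: {"review": "quarterly", "documentation": "register entry and data sheet"},
    RiskTier.HIGH: {"review": "monthly", "documentation": "full impact assessment"},
}


def classify(system: AISystemProfile) -> RiskTier:
    """Assign a tier so governance effort scales with the consequences of failure."""
    if system.affects_individuals and not system.human_reviews_each_output:
        return RiskTier.HIGH  # consequential, substantially automated decisions
    if system.affects_individuals or system.uses_personal_information:
        return RiskTier.MEDIUM
    return RiskTier.LOW  # e.g. internal productivity tools


summariser = AISystemProfile("Meeting summariser", False, True, False)
credit_tool = AISystemProfile("Credit limit recommender", True, False, True)
for system in (summariser, credit_tool):
    tier = classify(system)
    print(f"{system.name}: {tier.value}, {OVERSIGHT[tier]['review']} review")
# Meeting summariser: low, annual review
# Credit limit recommender: high, monthly review
```

Keeping the rules explicit means two business units submitting similar systems get the same tier, which is what makes governance effort proportionate rather than negotiated case by case.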

Distributed business accountability. Business unit leaders own the AI systems their teams use, not IT. They are accountable for the outcomes those systems produce, including the outcomes that take six months to become visible. This is the governance change that makes AI value measurable, because the person accountable for the outcome is also the person with the P&L line the outcome affects and the standing to report on it credibly to the board.

Portfolio-level visibility and reporting. The board sees AI as a portfolio: which initiatives are delivering against their value hypotheses, which are stalled, what the aggregate risk exposure looks like and what the performance pipeline looks like over the next twelve months. Trusenta's AI Governance platform provides the portfolio tracking and reporting infrastructure that makes this visibility possible without manual aggregation across business units.

Ongoing monitoring against pre-defined metrics. AI systems drift. Outputs change as data changes. The metrics that looked good at launch may be measuring the wrong things, as Klarna discovered. The AI operating model includes a monitoring cadence designed around what actually matters, matched to how fast each system can create problems, not to how convenient the governance meeting schedule is.
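One way to encode pre-defined metrics is as guardrails agreed at intake, each with a baseline and a tolerated adverse change, checked on an interval set by the system's risk tier. In this hypothetical sketch, the metric names, baselines, tolerances and review intervals are all assumptions. The usage data is invented to show the kind of divergence described in the Klarna case: handling time improves well beyond target while first-contact resolution falls, and only a guardrail on the quality metric catches it.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class MetricGuardrail:
    """A pre-defined metric agreed at intake (all values here are hypothetical)."""

    name: str
    baseline: float            # measured before deployment; must be non-zero
    max_adverse_change: float  # fractional change tolerated in the bad direction
    higher_is_better: bool

    def breached(self, current: float) -> bool:
        change = (current - self.baseline) / self.baseline
        adverse = -change if self.higher_is_better else change
        return adverse > self.max_adverse_change


# Review interval set by how fast a system can cause harm (assumed values).
REVIEW_INTERVAL_DAYS = {"low": 90, "medium": 30, "high": 7}


def next_review(last_review: date, risk_tier: str) -> date:
    return last_review + timedelta(days=REVIEW_INTERVAL_DAYS[risk_tier])


def check(guardrails: list[MetricGuardrail], current: dict[str, float]) -> list[str]:
    """Names of metrics that have moved beyond their agreed tolerance."""
    return [g.name for g in guardrails if g.breached(current[g.name])]


guardrails = [
    MetricGuardrail("avg_handling_minutes", 12.0, 0.05, higher_is_better=False),
    MetricGuardrail("first_contact_resolution", 0.80, 0.05, higher_is_better=True),
    MetricGuardrail("customer_satisfaction", 4.2, 0.05, higher_is_better=True),
]
# Efficiency has improved sharply; resolution quality has not.
current = {"avg_handling_minutes": 9.0, "first_contact_resolution": 0.70, "customer_satisfaction": 4.1}
print(check(guardrails, current))              # ['first_contact_resolution']
print(next_review(date(2026, 5, 13), "high"))  # 2026-05-20
```

Tying the review interval to the risk tier rather than to a fixed meeting schedule is the design choice the paragraph above argues for: a high-risk system is checked weekly, a low-risk one quarterly.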

The Fractional AI Officer: When the Operating Model Needs a Driver

The AI operating model does not run itself. It requires someone at executive level who owns the agenda, has the authority to make governance decisions and is accountable to the board for AI performance. In practice, most organisations have either no one owning this specifically or a governance function that lacks the commercial authority to make the decisions that matter.

For mid-market organisations, hiring a full-time Chief AI Officer is often not the right investment at this stage. The Fractional AI Officer model solves this precisely: experienced executive leadership that owns the AI operating model agenda, drives the measurement disciplines that produce board-reportable evidence and represents the governance function with the credibility that an advisory role cannot provide.

Trusenta's Fractional AI Officer service is designed for this moment: organisations that are serious about building the operating model but need the executive leadership embedded to drive it rather than advising from outside.

What Trusenta Actually Observes

Rather than citing external survey data, here is what the pattern of engagements actually looks like from where Trusenta sits.

The organisations that struggle most to answer the board's "prove it" question are almost universally those that deployed AI without pre-deployment baselines. They have real productivity gains. They cannot attribute them specifically enough to satisfy a board that has moved past the aspiration phase and into the accountability phase. The measurement problem is not a future problem to solve. It was created at the point of deployment, when the value hypothesis was absent.

The organisations answering the board question credibly are those that treated the value hypothesis as a condition of funding. Not a project management formality. A genuine commitment: this is what we will measure, this is the baseline, this is the timeline, and this is who owns the outcome. Those organisations are not necessarily using more sophisticated AI. They built the governance infrastructure before they deployed, which means they have the evidence when they need it.

The operating model components described above are not theoretical. They are the practical difference between organisations that can answer the board question and those that cannot.

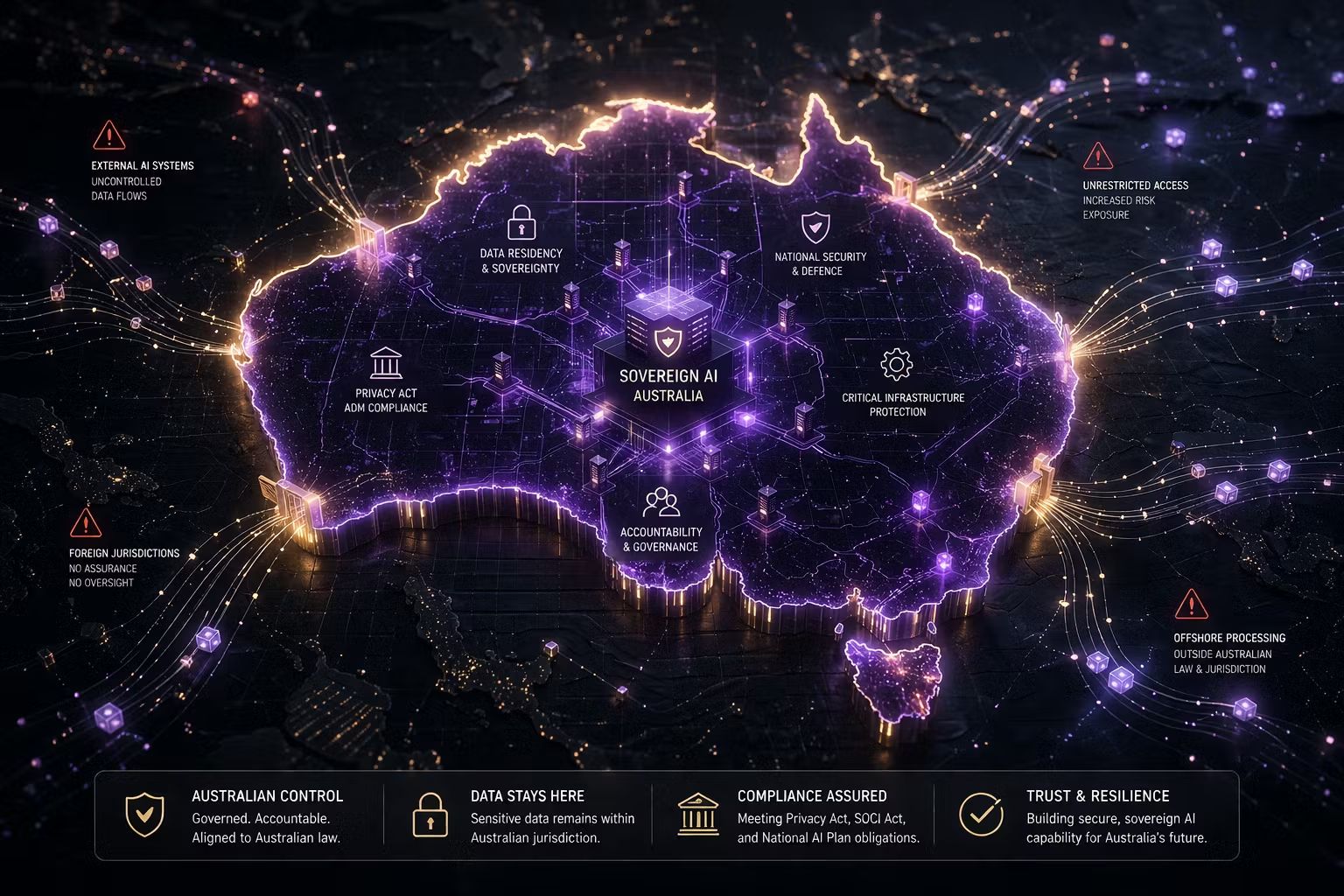

The Australian Regulatory Dimension

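For Australian enterprises, the board question about AI performance is arriving alongside a regulatory question about AI accountability. The Privacy Act automated decision-making obligations taking effect on 10 December 2026 require organisations to disclose in their privacy policies the kinds of personal information used in, and the kinds of decisions made by, computer programs (including AI systems) that make, or substantially and directly contribute to, decisions that could reasonably be expected to significantly affect an individual's rights or interests. This is not a disclosure about AI in general. To make it accurately, an organisation needs to know which specific systems are in scope, what data they use, how their decisions are made and who is accountable.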

That information is only available to organisations that have already built the AI operating model. The system inventory, the accountability structures and the monitoring cadence required for the Privacy Act disclosure are the same foundations required to answer the board's performance question. Build the operating model for value realisation, and regulatory compliance becomes a reporting exercise on top of it rather than a separate project.

APRA- and ASIC-regulated entities face an additional dimension: accountability for AI-driven outcomes cannot be outsourced to a vendor. It stays with the regulated entity. The AI operating model is what makes that accountability real rather than theoretical.

Key Takeaways

- Boards have stopped asking whether organisations are doing AI and started asking for proof it is working. Most organisations cannot answer from their own data because they deployed without measurement infrastructure.

- Reaching for consulting firm survey benchmarks is the wrong answer to a board question about your organisation's specific AI performance. No external survey can produce that evidence. Only your own measurement infrastructure can.

- The structural failure is the absence of pre-deployment value hypotheses. Without a baseline and a defined measurement approach, post-deployment attribution is guesswork.

- An AI operating model has five components: structured use case intake with value hypotheses, risk classification before deployment, distributed business accountability, portfolio-level board reporting and ongoing monitoring against pre-defined metrics.

- The Fractional AI Officer model provides the executive ownership needed to drive the AI operating model without the cost of a full-time hire.

- For Australian enterprises, the AI operating model answers both the board's performance question and the Privacy Act automated decision-making (ADM) obligations commencing in December 2026. Build it once. Use it for both.

How Trusenta Can Help

AI Strategy Services: Trusenta's AI Strategy engagements build the use case portfolio and value hypotheses that sit at the foundation of a functioning AI operating model. The starting point is always defining what value looks like and building the measurement framework that makes board-reportable evidence possible before the first dollar is committed.

AI Governance: Trusenta's AI Governance platform provides the intake, risk classification, accountability tracking and portfolio reporting infrastructure that makes the AI operating model operational rather than aspirational. It turns governance from a coordination exercise into the systematic measurement infrastructure that boards are now requiring.

Fractional AI Officer: For organisations that need the executive leadership to own and drive the AI operating model agenda, Trusenta's Fractional AI Officer service embeds an accountable senior leader who drives the governance disciplines, owns the board relationship and turns the operating model from a plan into day-to-day practice.

Conclusion

The board question has changed. Proof that AI is working is not something you borrow from a consulting firm's survey. It is something your organisation produces from its own measurement infrastructure, built before deployment and maintained through the operating model that governs everything that follows. The organisations that can answer the board question in 2026 are not those with the best AI tools. They are those that built the governance infrastructure to know what those tools are actually producing. That infrastructure is available to build now. The organisations that build it will be counting documented outcomes. The ones that do not will be counting pilots and hoping the board keeps accepting benchmarks as a substitute for evidence.

Author

Mark brings a rare blend of C-suite leadership and hands-on consulting experience to Trusenta. A former SVP of Services, SVP of Business Operations, Managing Director and CIO, he brings broad experience to his specialty: guiding organisations through AI strategy, governance and adoption, and bridging ambition with practical execution. His focus is on helping clients embed AI responsibly, at scale and in service of real business outcomes.

https://www.linkedin.com/in/consult-mmiller/