Every Consulting Firm Has an AI Governance Survey Right Now. Here Is What You Should Actually Believe.

In the past 90 days, at least a dozen consulting firms have published AI governance surveys showing alarming gaps, all concluding you need what they sell. This post asks an uncomfortable question: when the research industry is producing this much survey data this quickly, what should Australian executives actually believe and act on?

May 8, 2026

In the past 90 days, at least a dozen major consulting and advisory firms have published AI governance surveys. The findings are remarkably consistent: somewhere between 70% and 90% of organisations are not ready for AI governance scrutiny. The sample sizes are typically between 300 and 1,000 respondents. The methodology is rarely explained in the report itself. And they all happen to reach conclusions that support the services of the firm that conducted the research.

You are allowed to be sceptical of this.

The AI governance survey has become a content marketing format. That does not mean the underlying concern is wrong. Organisations genuinely are deploying AI faster than governance frameworks can adapt. The accountability gap between AI adoption and AI oversight is real and consequential. But when the research industry is producing this volume of survey data this quickly, some of it written with AI assistance by the same firms warning about AI risks, the numbers deserve scrutiny rather than citation.

This post takes a different approach. Rather than leading with someone else's survey data, it sets out the first-principles questions that matter regardless of whose research you believe, and asks whether your organisation can answer them honestly.

The Survey Data Problem

Consider a typical AI governance survey finding: "X% of executives lack confidence they could pass an AI governance audit within 90 days."

There are several things worth examining here. First, that is self-reported confidence, not actual audit performance. An executive saying they lack strong confidence and an organisation actually failing an audit are very different things. Second, what is an "AI governance audit"? There is no universal standard for this. An audit against ISO 42001 requirements looks entirely different from an internal review against a proprietary framework developed by the firm conducting the survey. The question is measuring something, but it is not entirely clear what.

Third, who are the respondents? Senior executives at large enterprises who were recruited to complete a survey have self-selected into the sample. They may be the people already thinking about AI governance, which would make the finding directionally optimistic rather than pessimistic. Or they may be the people most exposed to vendor sales cycles, which would bias the finding the other way. Without knowing the sampling methodology, the number is an illustration rather than a measurement.
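The self-selection point can be made concrete with a little arithmetic. The sketch below is illustrative only: the true share and the response rates are invented numbers, not figures from any survey, and `observed_share` is a hypothetical helper written for this post.

```python
def observed_share(true_share, response_rate_if_unready, response_rate_if_ready):
    """Share of survey respondents who report being 'not ready' when
    unready and ready organisations respond at different rates."""
    unready_respondents = true_share * response_rate_if_unready
    ready_respondents = (1 - true_share) * response_rate_if_ready
    return unready_respondents / (unready_respondents + ready_respondents)


# Assumption for illustration: half of all organisations are genuinely unready.
TRUE_SHARE = 0.50

# Both groups respond at the same rate: the survey recovers the true share.
equal = observed_share(TRUE_SHARE, 0.10, 0.10)

# Unready organisations are three times as likely to respond.
skewed_up = observed_share(TRUE_SHARE, 0.30, 0.10)

# Ready organisations are three times as likely to respond.
skewed_down = observed_share(TRUE_SHARE, 0.10, 0.30)

print(f"equal: {equal:.0%}, skewed up: {skewed_up:.0%}, skewed down: {skewed_down:.0%}")
```

With these assumed rates, the same underlying population produces a headline figure of 50%, 75% or 25% depending only on who chose to respond. A larger sample does not correct this, which is why the sampling method matters more than the sample size.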

Fourth, and most importantly: the firm publishing the data has a commercial interest in the conclusion. Every firm that publishes a finding showing that most organisations are unprepared for AI governance also sells AI governance services. This does not mean the finding is wrong. It does mean it should be read with the same critical lens you would apply to any research with a commercial author.

None of this is unique to AI governance surveys. It applies to most B2B research. The volume of it landing simultaneously right now, as AI governance has become commercially interesting, is simply making the pattern more visible.

What the Pattern of Surveys Actually Tells You

Here is what is genuinely worth taking from the current wave of AI governance research, regardless of which firm produced it.

The fact that this many organisations are publishing this research simultaneously tells you that AI governance has crossed a threshold from specialist concern to mainstream business priority. Board members are asking about it. Regulators are signalling expectations. Procurement panels are starting to include it in vendor assessment. The research is a lagging indicator of a real shift in the conversation, not a leading indicator of actual governance gaps.

The directional consistency across different methodologies and different firms is meaningful even if the specific numbers are not. When Deloitte, McKinsey, IBM and a dozen others all find that AI governance maturity correlates with better business outcomes, the mechanism is probably real even if each firm has measured it slightly differently. Well-governed organisations tend to be better managed across the board. AI governance maturity is likely both a cause and an effect of broader organisational discipline.

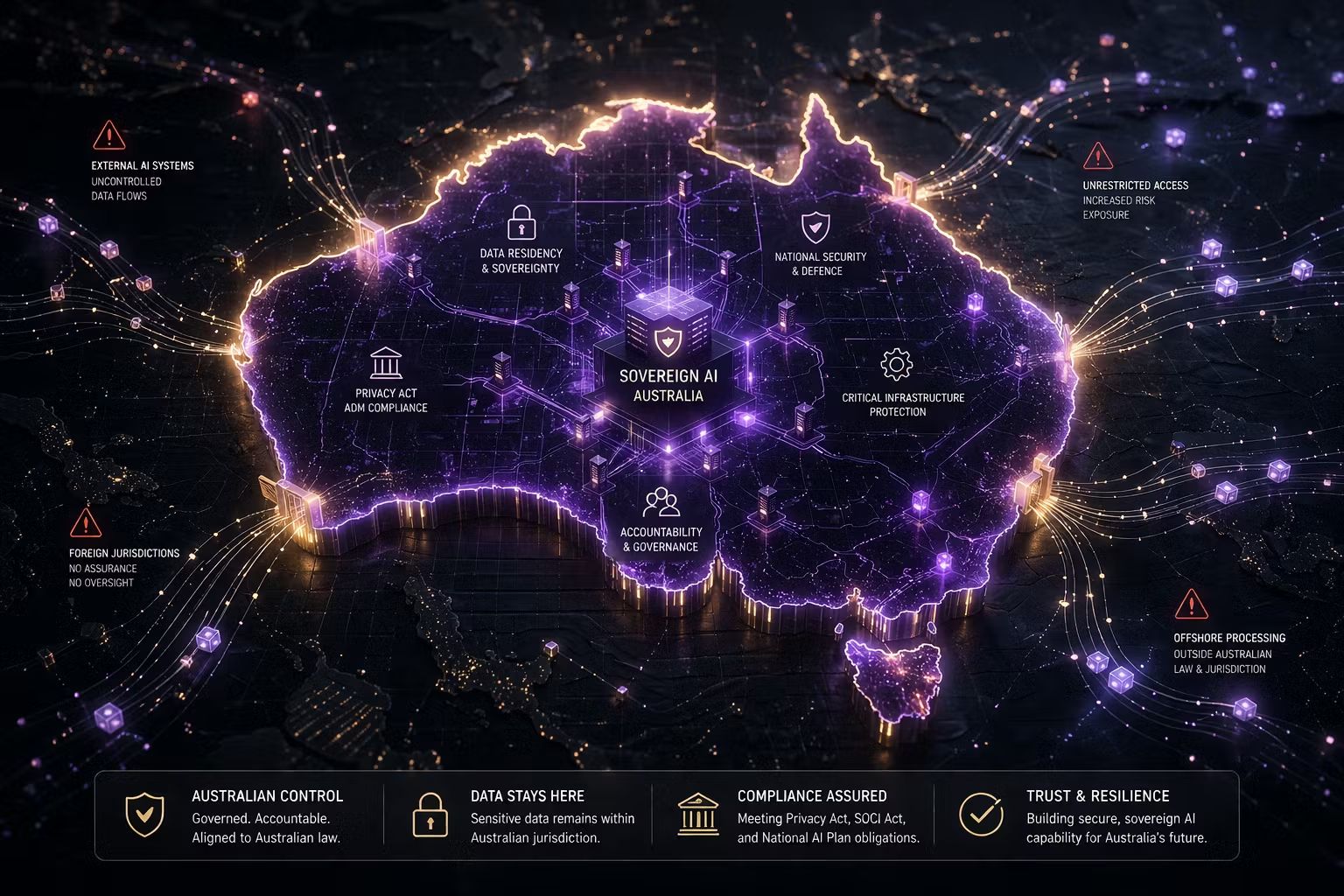

The regulatory deadlines, by contrast, are not survey data. They are law. Australia's Privacy Act automated decision-making obligations take effect on 10 December 2026. The EU AI Act's high-risk system enforcement deadline is 2 August 2026. These are not estimates from a research report. They are calendar dates.
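Because these are calendar dates, the remaining runway is a matter of subtraction rather than opinion. A minimal sketch, using the two dates quoted above and this post's publication date as the starting point; the `days_remaining` helper and the dictionary labels are illustrative names, not part of any regulation or product.

```python
from datetime import date

# Publication date of this post, used as the reference point.
PUBLISHED = date(2026, 5, 8)

# The two deadlines quoted in the text above.
DEADLINES = {
    "EU AI Act high-risk enforcement": date(2026, 8, 2),
    "Privacy Act ADM obligations": date(2026, 12, 10),
}


def days_remaining(as_of, deadlines):
    """Return the number of calendar days from `as_of` to each deadline."""
    return {name: (due - as_of).days for name, due in deadlines.items()}


remaining = days_remaining(PUBLISHED, DEADLINES)
for name, days in remaining.items():
    print(f"{name}: {days} days")
```

Measured from 8 May 2026, that is 86 days to the EU date and 216 days to the Australian one. Swapping `PUBLISHED` for `date.today()` gives the figure for whenever you are reading this.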

The Five Questions That Matter, Regardless of the Surveys

Rather than asking whether your organisation could pass an AI governance audit measured on someone else's scale, here are five questions that are directly useful regardless of how you weight any particular piece of research.

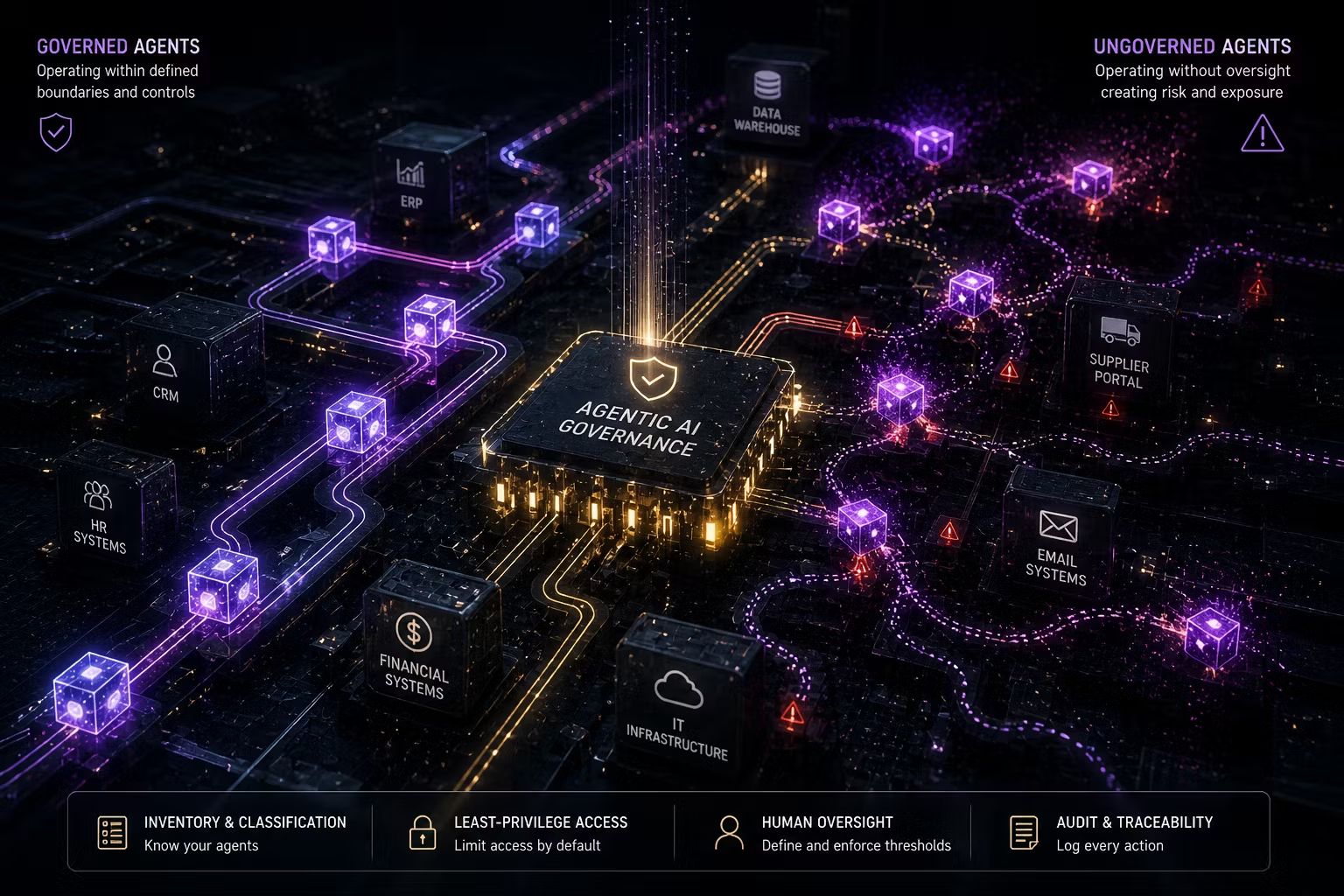

Can you produce a current inventory of every AI system making or influencing decisions about your customers, employees or operations? Not a list of approved tools. Every AI system, including those embedded in SaaS platforms your teams are already using and those vendor-supplied as part of contracted services. If the answer is no, the gap is not in your governance framework. It is in basic visibility.

For each system in that inventory, can you name who is accountable for its outputs? Not who approved the procurement. Not who manages the vendor relationship. Who is responsible when the system produces an outcome that was not intended or that harms someone? If accountability cannot be named at the system level, governance is theoretical rather than operational.

Do you have a defined process for assessing new AI initiatives before they are deployed? Not a policy document. An actual process that people actually follow. The distinction matters because most organisations have governance policies that function as documentation rather than as operational controls. A process that does not change anyone's behaviour is not a governance control.

Are your AI vendors contractually accountable for the outcomes you need from them? Under Australian law, the accountability for AI-driven outcomes stays with the organisation deploying the system, not the vendor who built it. Understanding where vendor accountability ends and your organisation's accountability begins is a contract review question, not a governance framework question.

When was the last time your board received a substantive AI risk report? Not an update on AI initiatives or a technology strategy presentation. A report that covered what AI systems are running, what risk exposure they create, what controls are in place and what is not yet governed. If the honest answer is never, the governance gap is real regardless of which survey you believe.
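The first three questions can be tested against something as simple as a spreadsheet or a short script. The sketch below is one hypothetical way to structure it: the `AISystem` fields, the `governance_gaps` function and the three example systems are all invented for illustration and do not describe any particular organisation or product.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AISystem:
    name: str
    source: str                        # e.g. "embedded SaaS", "vendor-supplied", "in-house"
    accountable_owner: Optional[str]   # who answers for outputs, not who approved the purchase
    assessed_before_deploy: bool       # did it go through an actual intake process?


def governance_gaps(inventory):
    """Map each system name to the list of basic gaps found for it."""
    gaps = {}
    for system in inventory:
        issues = []
        if not system.accountable_owner:
            issues.append("no accountable owner")
        if not system.assessed_before_deploy:
            issues.append("no pre-deployment assessment")
        if issues:
            gaps[system.name] = issues
    return gaps


# Hypothetical inventory entries, for illustration only.
inventory = [
    AISystem("resume-screening feature", "embedded SaaS", None, False),
    AISystem("support chatbot", "vendor-supplied", "Head of Customer Service", False),
    AISystem("demand forecast model", "in-house", "Head of Supply Chain", True),
]

gaps = governance_gaps(inventory)
for name, issues in gaps.items():
    print(f"{name}: {', '.join(issues)}")
```

Here the check flags the first two systems and passes the third. The harder part is not the check but the input: a system that never made it into the inventory cannot be flagged at all, which is why inventory visibility comes first.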

The ISO 42001 Question

One piece of external validation worth taking seriously is ISO 42001, precisely because it is independently certifiable rather than self-reported.

AS ISO/IEC 42001:2023 is the world's first certifiable AI management system standard. Unlike a survey asking executives how confident they feel, an ISO 42001 certification audit is conducted by an accredited third party against a defined set of controls. It produces a verifiable outcome rather than a self-reported sentiment. And because the standard follows the same Annex SL structure as ISO 27001, organisations with existing information security management systems can extend to ISO 42001 coverage 30 to 40% faster than those starting from scratch.

In Australia, there are currently only 10 to 15 properly accredited auditors who can certify to ISO 42001. That number is not a survey estimate. It is a market constraint. Organisations that want certification before end of FY27 need to begin scoping engagements now, not because a consulting firm's survey says so, but because auditor availability is genuinely limited and lead times are extending as demand grows.

Trusenta's Compliance Management platform maps controls across ISO 42001, the NIST AI RMF and the Australian Guidance for AI Adoption simultaneously, so the governance work required for certification also satisfies other compliance frameworks without duplication.

What Trusenta Actually Sees

Rather than citing survey data, here is what the pattern of engagements actually looks like from where Trusenta sits.

Most organisations beginning a governance engagement genuinely cannot produce a complete AI inventory. Not because they have not tried, but because AI is entering the enterprise through too many channels simultaneously: embedded features in existing SaaS tools, vendor-supplied capabilities in contracted services, developer-built agents using open-source frameworks and formally approved IT deployments. The inventory exercise almost always surfaces more than leadership expected.

The accountability gap is real and structural, not attitudinal. Most organisations have a named executive responsible for AI governance. Very few have accountability structures below that level that are operational. Business unit leaders frequently do not know which AI systems they own. There is typically no defined escalation path when a system behaves unexpectedly.

The organisations doing this well did not get there by reading survey data. They made a decision that AI governance was a capability worth building rather than a compliance exercise worth completing. The distinction sounds minor. It produces very different organisations.

Key Takeaways

- The AI governance survey market is producing significant volume right now, much of it from firms with a commercial interest in the conclusions. Read all of it critically, including this post.

- The directional consistency across different research sources is meaningful even if the specific numbers are not: AI governance maturity does correlate with better organisational outcomes, though causation is complex.

- Regulatory deadlines are not survey data. Australia's Privacy Act ADM obligations (December 2026) and the EU AI Act high-risk deadline (August 2026) are calendar dates, not estimates.

- Five questions matter more than any survey score: inventory visibility, system-level accountability, a real intake process, vendor contract clarity and board-level AI risk reporting.

- ISO 42001 is the only independently certifiable AI governance standard available in Australia and produces verifiable outcomes rather than self-reported confidence levels.

- Trusenta's consistent finding: the AI inventory exercise almost always surfaces more than leadership expected, and the accountability gap is structural rather than attitudinal.

How Trusenta Can Help

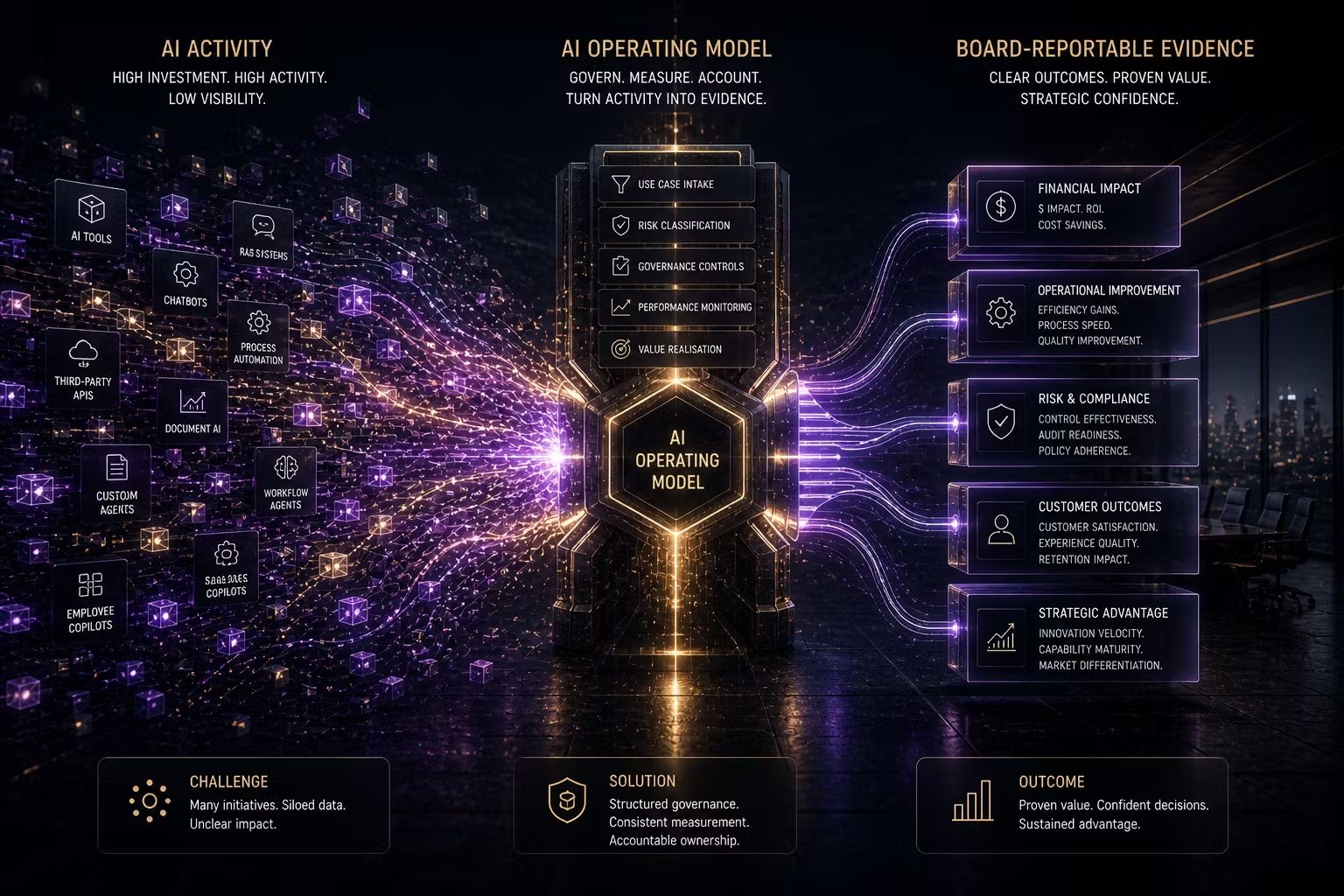

AI Governance: Trusenta's AI Governance platform provides the use-case register, risk assessment workflows and accountability tracking that address the five first-principles questions directly. The inventory, the accountability structures and the intake process are all operational outputs of the platform, not just policy positions.

Compliance Management: For organisations working toward ISO 42001 certification or managing multiple regulatory frameworks simultaneously, Trusenta's Compliance Management platform maps controls once across ISO 42001, the NIST AI RMF and the Guidance for AI Adoption, generating verifiable audit evidence rather than self-reported confidence.

AI Governance Foundations: For organisations that want to understand their actual governance position rather than their survey position, this 10-day engagement establishes what is genuinely in place, what the real gaps are and what the practical path to a defensible governance position looks like.

Conclusion

The AI governance gap is real. The evidence for it does not come primarily from any particular firm's survey. It comes from the five questions at the centre of this post and from whether your organisation can answer them with specificity rather than approximation. The surveys are a signal that the conversation has reached mainstream urgency. The questions are what you actually need to answer. Whether you use a consulting firm's research to motivate that exercise or your own honest assessment is secondary to whether the exercise happens at all.

Author

Mark brings a rare blend of C-suite leadership and hands-on consulting experience to Trusenta. As a former SVP of Services, SVP of Business Operations, Managing Director and CIO, he brings a breadth of experience to his specialty: guiding organisations through AI strategy, governance and adoption, and bridging ambition with practical execution. His focus is on helping clients embed AI responsibly, at scale and in service of real business outcomes.

https://www.linkedin.com/in/consult-mmiller/