AI Strategy

The AI ROI Reckoning: Why Most Enterprise AI Investments Aren't Delivering and What to Do About It

Global AI investment is at record highs, yet 56% of CEOs say AI has produced neither revenue growth nor cost reduction in the past year. This post examines why enterprise AI ROI remains so elusive, the three layers of AI value most organisations are missing, and why governance maturity, not technology, is the real differentiator between those getting returns and those still waiting.

March 17, 2026

8 min read

Fifty-three per cent of institutional investors expect positive returns from their AI investments within six months. Only 16 per cent of chief executives believe they can deliver that. That gap is not a communication problem. It is a governance crisis.

PwC's 29th Global CEO Survey, released at Davos in January 2026, found that 56 per cent of chief executives say AI has produced neither increased revenue nor reduced costs over the past twelve months. MIT's Project NANDA found that 95 per cent of organisations deploying generative AI saw zero measurable profit-and-loss impact within six months. The money is committed. The results are not coming.

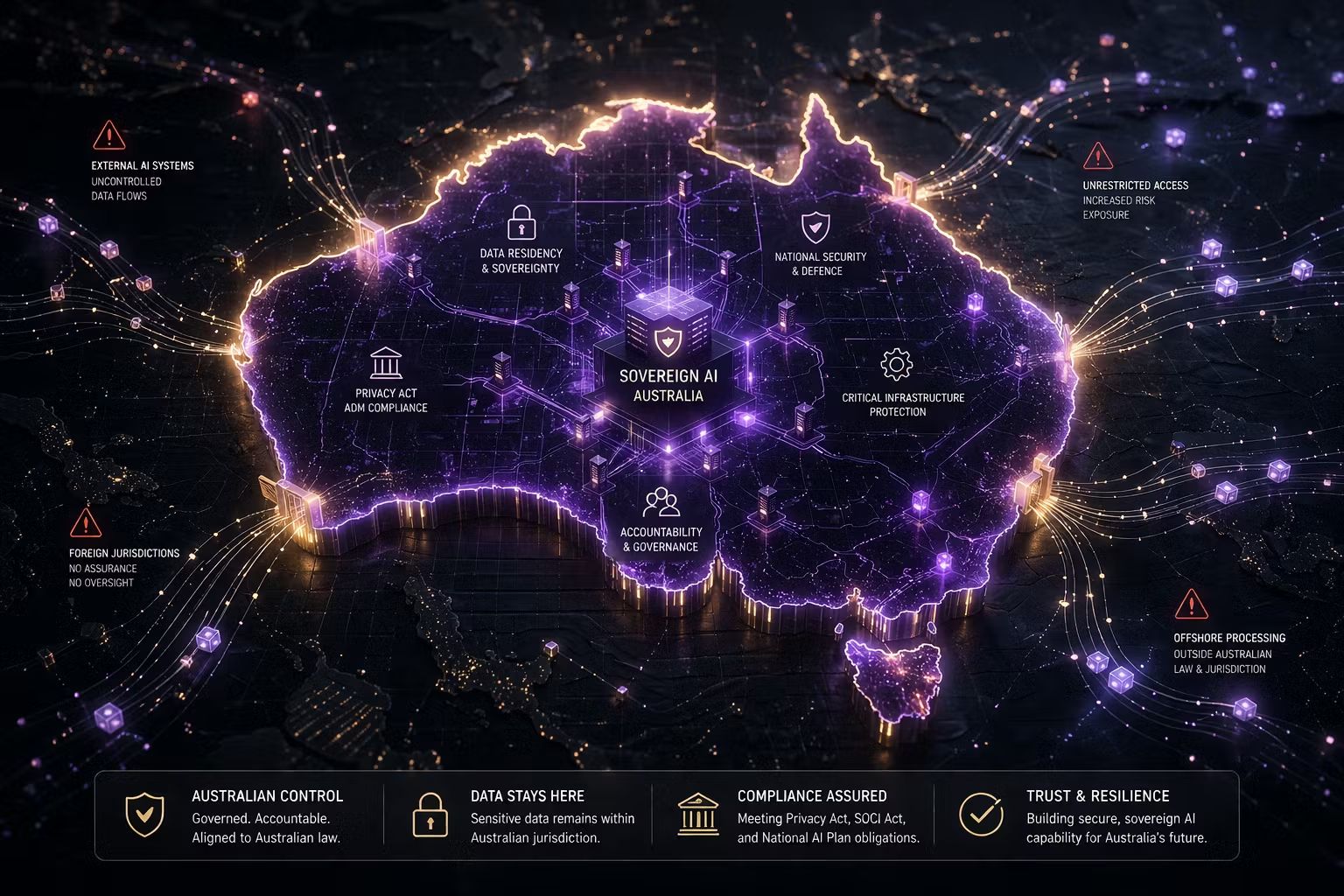

Kyndryl's 2025 Readiness Report, covering 3,700 senior leaders across 21 countries, found that 61 per cent feel more pressure to demonstrate enterprise AI ROI than they did a year ago. Forrester expects a quarter of planned 2026 AI budgets to slip into 2027, not because organisations have lost confidence in AI but because they cannot yet show it is working. In Australia, where regulatory scrutiny of AI is intensifying alongside record investment, the pressure to demonstrate AI ROI responsibly is compounding by the quarter.

The Numbers That Should Concern Every Board

IBM's CEO study found that only 29 per cent of executives can measure AI ROI confidently, even as 79 per cent perceive productivity gains. That 50-point gap, between feeling that AI is working and being able to prove it financially, is the space where board credibility is won or lost.

A Dataiku survey of 600 enterprise CIOs found that 74 per cent say their role is at risk if measurable AI gains are not delivered within two years. The timeline is compressing: many report that if AI initiatives fail to show performance within six months, funding could be frozen entirely. Nearly all CIOs now brief the board on AI performance at least quarterly and nearly half do so monthly. When AI performance is reviewed on the same schedule as revenue and operational KPIs, experimentation is no longer sufficient.

The AI ROI measurement challenge is consistent across Australian financial services, government and professional services. The Cisco AI Readiness Index found that only 23 per cent of organisations consider their governance processes ready for AI. This matters directly: without governance readiness, measurement infrastructure does not exist.

Three Layers of AI Value Most Organisations Are Missing

Most organisations measure AI ROI against a single dimension: efficiency. Hours saved, tasks automated, headcount-equivalent productivity gain. This was the right starting metric for the pilot phase. It is no longer sufficient.

The Futurum Group's 1H 2026 Enterprise Software Decision Maker Survey, covering 830 global IT decision-makers, documents a structural shift: direct financial impact nearly doubled as the primary AI ROI measure, while productivity gains fell from 23.8 per cent to 18 per cent as the leading success metric. Boards have matured. They want P&L, not productivity stories.

The efficiency layer is where most organisations start: automation, speed and cost reduction. These gains are real, but they are diffuse and difficult to tie to financial outcomes. A 20 per cent reduction in document processing time does not automatically translate to a line in the profit-and-loss statement.

The risk mitigation layer is almost universally ignored in AI ROI conversations, but it is significant. AI governance failures cost money: regulatory fines, reputational damage, remediation and litigation. PwC's 2025 Responsible AI survey found that 60 per cent of executives said responsible AI boosts ROI and efficiency. Yet nearly half admitted they have not operationalised it. Governance done well has measurable financial value. Governance done poorly has measurable financial cost.

The strategic value layer is where real separation happens. The organisations in PwC's AI Vanguard, the 12 per cent who have achieved both revenue growth and cost reduction, redesigned how the business operates: new revenue models, better decision-making and faster market response. This layer requires governance that connects AI deployment to business strategy and tracks value at the decision level, not the task level.

Why Governance Is the Hidden Multiplier of Enterprise AI ROI

This is the insight that most AI ROI analysis misses entirely: governance maturity is the most significant predictor of whether AI generates measurable financial returns.

Deloitte's 2026 State of AI in the Enterprise report, based on a survey of 3,235 global leaders, is direct: enterprises where senior leadership actively shapes AI governance achieve significantly greater business value than those delegating it to technical teams alone. This is not marginal. It is structural.

When AI systems are deployed without clear governance, with no ownership, value baselines or accountability structures, value creation is accidental and value destruction is systematic. By contrast, organisations that treat governance as an enabler create the conditions where AI value can be measured, attributed and compounded. Only 23 per cent of organisations consider their governance processes ready for AI (Cisco AI Readiness Index). That means 77 per cent are building AI systems without the measurement infrastructure to demonstrate what those systems produce.

How to Measure AI ROI in a Way That Survives a Board Meeting

Boards are no longer satisfied with model metrics, activity counts or pilot showcases. They want answers to four questions: What did this cost, and what did we get back? Where are we exposed to risk, and how are we managing it? Which AI investments should we continue, expand or stop? Are we ahead of or behind our competitors?

A credible AI ROI framework has three characteristics.

It connects to the P&L. Every AI initiative needs a value hypothesis before funding is committed. Not "this will improve efficiency" but "this will reduce the cost of customer dispute resolution by X per cent, generating $Y per quarter." Without this, post-deployment measurement is impossible.

It includes risk value. Governance activities have financial value. Catching a biased AI system before it affects ten thousand customer decisions is worth money. Documenting AI accountability for a regulatory audit is worth money. Risk avoidance must be included in ROI calculations, or the true cost and value of governance remains invisible.

It reports at the portfolio level. Individual initiatives will have mixed results. The board needs to see AI as a portfolio: which initiatives are delivering, which are stalled, what the aggregate risk exposure looks like and what the pipeline of value looks like over the next twelve months.
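The three characteristics above can be sketched as a simple calculation. The following is a minimal illustration, not a prescribed method: the `Initiative` fields, the `portfolio_summary` helper and every figure are hypothetical, and it assumes that risk avoidance can be given a dollar estimate and that each initiative has a positive cost.

```python
from dataclasses import dataclass


@dataclass
class Initiative:
    """One AI initiative with hypothetical, illustrative fields."""
    name: str
    cost: float                # total spend for the reporting period
    pnl_impact: float          # measured revenue gain or cost reduction
    risk_value_avoided: float  # estimated value of avoided fines, remediation, etc.
    status: str                # "delivering" or "stalled"

    def roi(self) -> float:
        # (P&L impact + risk value avoided - cost) / cost; assumes cost > 0
        return (self.pnl_impact + self.risk_value_avoided - self.cost) / self.cost


def portfolio_summary(initiatives: list[Initiative]) -> dict:
    """Roll individual initiatives up into a board-level view."""
    total_cost = sum(i.cost for i in initiatives)
    total_value = sum(i.pnl_impact + i.risk_value_avoided for i in initiatives)
    return {
        "total_cost": total_cost,
        "total_value": total_value,
        "portfolio_roi": (total_value - total_cost) / total_cost,
        "delivering": [i.name for i in initiatives if i.status == "delivering"],
        "stalled": [i.name for i in initiatives if i.status == "stalled"],
    }


# Illustrative figures only; not taken from any survey cited in this post.
portfolio = [
    Initiative("dispute-resolution", cost=200_000, pnl_impact=260_000,
               risk_value_avoided=40_000, status="delivering"),
    Initiative("document-processing", cost=100_000, pnl_impact=30_000,
               risk_value_avoided=0, status="stalled"),
]

summary = portfolio_summary(portfolio)
for item in portfolio:
    print(f"{item.name}: ROI {item.roi():.0%} ({item.status})")
print(f"Portfolio ROI: {summary['portfolio_roi']:.0%}")
```

In this sketch, leaving `risk_value_avoided` out would understate the first initiative's return, which is the sense in which governance value stays invisible when risk avoidance is excluded from the calculation.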

The 12% Who Are Winning and Why

The organisations generating genuine returns from AI share consistent characteristics. They set value hypotheses before funding initiatives. They track outcomes at the business level, not the model level. They treat governance as an operational discipline, not a compliance requirement.

MIT's research found that top performers moved from pilot to full implementation in 90 days, compared with nine months for large enterprises. The constraint is not technology. It is governance clarity: defined ownership, explicit accountability and measurement frameworks that teams can actually use. The winning 12 per cent is not a closed club. Entry requires rethinking what AI ROI means and building the governance infrastructure to prove it.

What This Means for Your Organisation

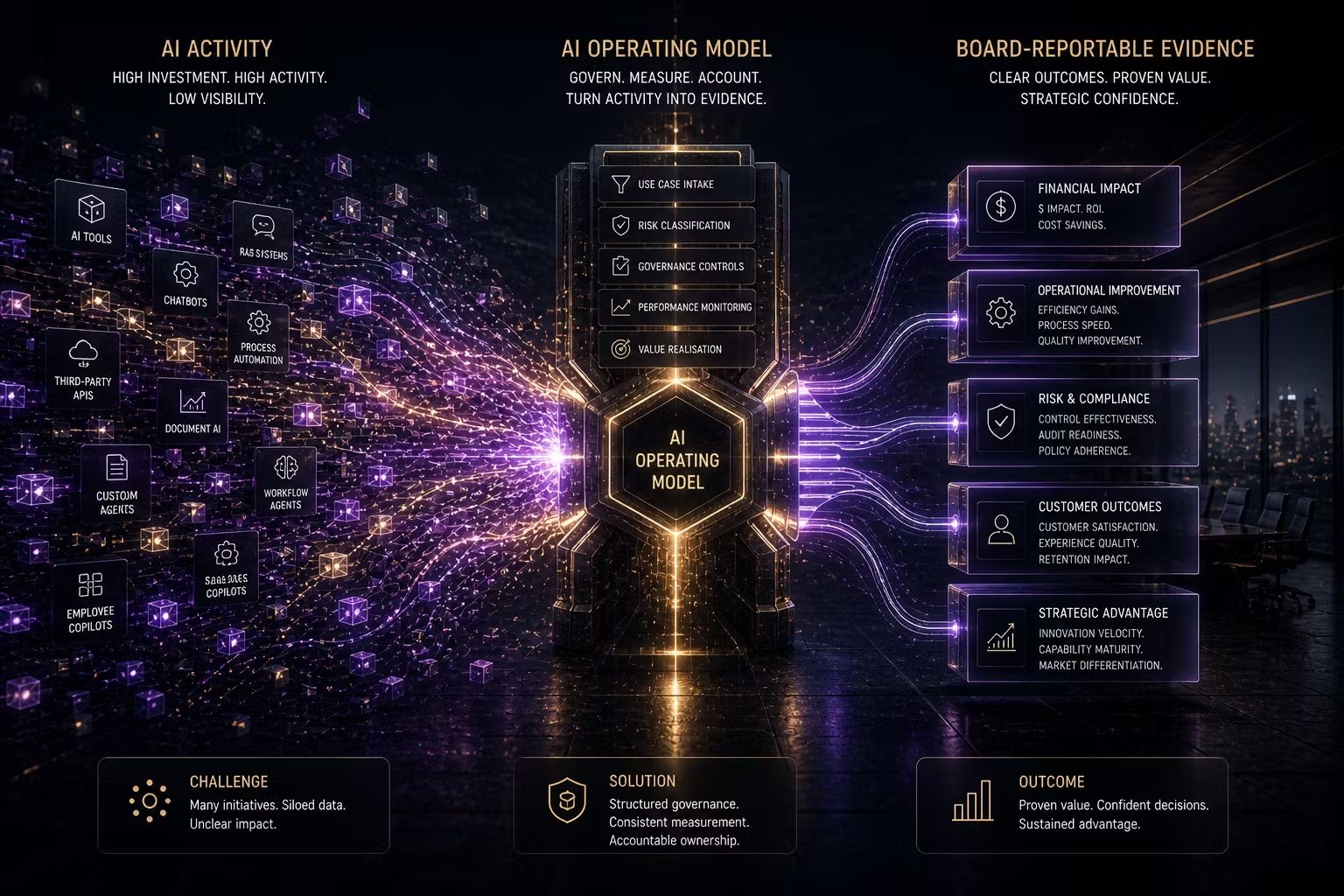

At Trusenta, the pattern is consistent: the measurement problem is almost never a technology problem. It is a governance maturity problem. When AI systems are deployed without clear ownership, value baselines or accountability structures, measuring ROI becomes guesswork. When governance is embedded from the start, with risk classification, use-case tracking and portfolio-level reporting, the measurement infrastructure is already in place and the board conversation shifts from "can you prove this is working?" to "here is what it delivered and what comes next."

The organisations making real progress on enterprise AI ROI in Australia are not those with the most sophisticated models. They are those with the clearest accountability structures, the most disciplined approach to use-case selection and the governance maturity to distinguish between AI activity and AI value.

Key Takeaways

- 95% of organisations deploying generative AI saw zero measurable P&L impact within six months, yet 61% of senior leaders face growing pressure to demonstrate returns

- Boards and investors are demanding P&L impact; productivity metrics are losing credibility as primary ROI evidence

- Three layers matter: efficiency gains, risk mitigation value and strategic business outcomes; most organisations only measure the first

- Governance maturity is the structural difference between AI initiatives that deliver value and those that do not

- A credible AI ROI framework connects to the P&L, includes risk avoidance value and reports at the portfolio level

How Trusenta Can Help

AI Governance: Trusenta's AI Governance product provides the use-case register, risk assessment workflows and portfolio tracking infrastructure that organisations need to create the measurement baseline required for credible AI ROI reporting at board level.

AI Strategy Services: Trusenta's AI strategy engagements are built around identifying high-value use cases and defining value hypotheses before investment is committed, the foundational step that makes AI ROI demonstrable rather than assumed.

AI Governance Foundations: For organisations that do not yet have the governance infrastructure to measure AI ROI, this 10-day engagement establishes the accountability structures, risk classification framework and portfolio tracking that make measurement possible from the outset.

Conclusion

The AI ROI reckoning is not a temporary pressure that will ease as models improve. It is a structural shift in how boards, investors and regulators expect AI to be managed and justified. The organisations that emerge from this period with credibility will be those that treated governance not as overhead but as the foundation that makes value measurement possible. The question for every organisation is not whether AI delivers value. It is whether the governance infrastructure exists to prove that it does.

Author

Mark brings a rare blend of C-suite leadership and hands-on consulting experience to Trusenta. As a former SVP of Services, SVP of Business Operations, Managing Director and CIO, he brings broad experience to his specialty: guiding organisations through AI strategy, governance and adoption, and bridging ambition with practical execution. His focus is on helping clients embed AI responsibly, at scale and in service of real business outcomes.

https://www.linkedin.com/in/consult-mmiller/