
Stuck in Pilot Purgatory: Why AI Projects Stall Before Scale and How to Break Through

Most enterprise AI never leaves the pilot stage. MIT research found 60% of organisations evaluated enterprise AI tools, 20% reached pilot stage and just 5% reached production. This post examines the governance and operating model failures keeping AI trapped in experimentation and outlines a practical 90-day path to move from pilot to governed, production-ready deployment.

March 19, 2026

7 min read

Sixty per cent of organisations evaluated enterprise-grade AI tools in 2025. Twenty per cent reached pilot stage. Five per cent reached production.

Those numbers come from MIT's GenAI Divide study, based on analysis of more than 300 public AI deployments. They describe an industry-wide failure that very few people are willing to name plainly. The gap between AI pilot and production is not a technology problem. It is a governance, ownership and operating model problem, and until organisations understand that, the story will not change.

A survey of enterprise CIOs found that 88 per cent of AI pilots never reach production at all. Large enterprises, the organisations with the largest AI budgets, are the slowest to convert: nine months on average from pilot to implementation, compared to 90 days for mid-market firms. Yet organisations are not stopping. They are launching more pilots, replicating the same failure pattern at greater scale.

The Uncomfortable Maths of AI Pilots

MIT found that 95 per cent of enterprise generative AI pilots deliver zero measurable profit-and-loss impact. BCG's research found that 74 per cent of organisations have yet to show any tangible value from their AI investments. Gartner forecast that 30 per cent of generative AI projects would be abandoned entirely after the proof-of-concept phase by the end of 2025. S&P Global data shows that 42 per cent of companies scrapped most of their AI initiatives in 2025, up sharply from 17 per cent the year before.

This is not a technology failure. The models work. The vendors are ready. The problem is what happens between "this pilot impressed the innovation team" and "this system is governed, monitored and generating verified business value in production." That gap is where most enterprise AI investments disappear.

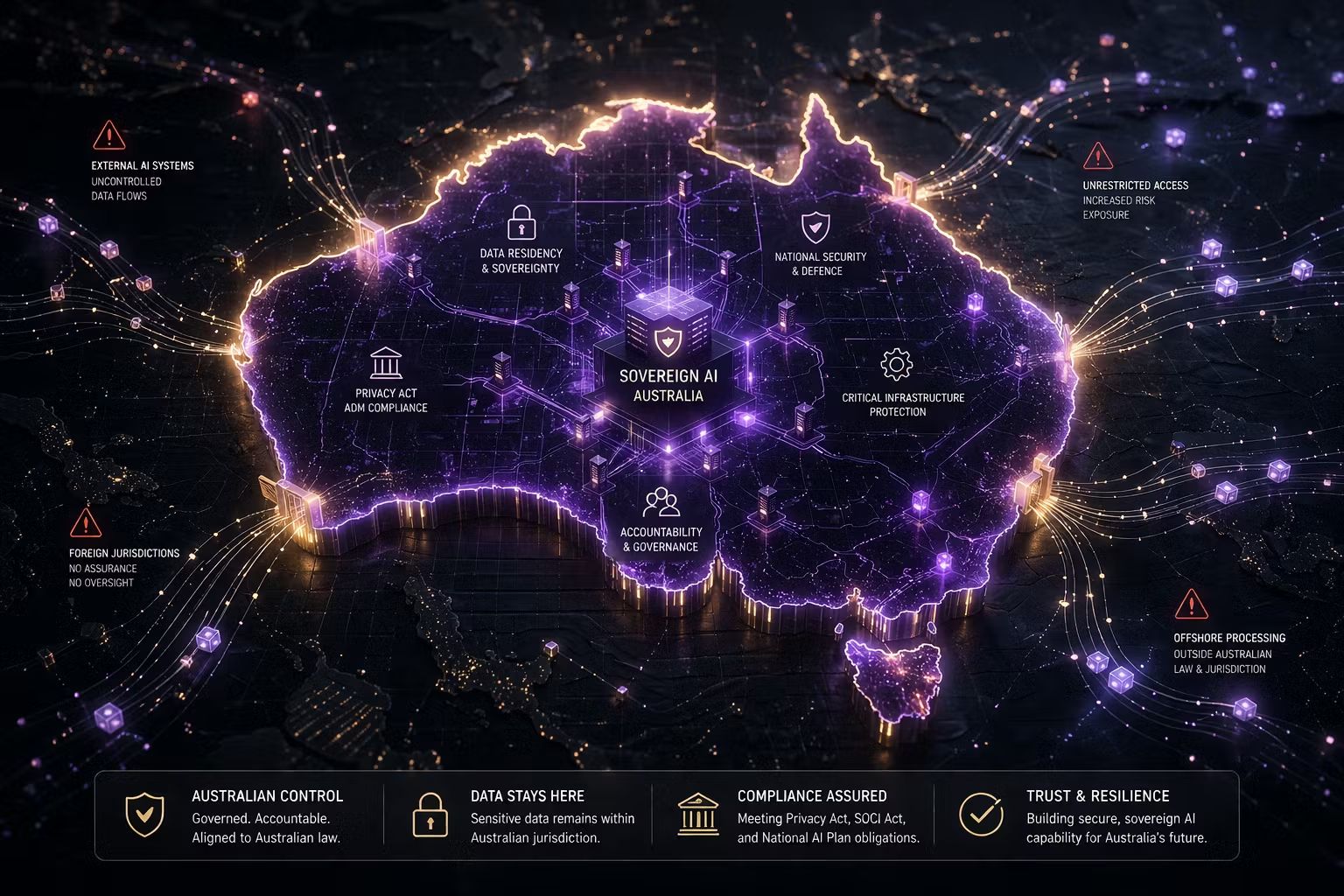

For organisations working to scale AI in Australia, the same structural gaps apply. With APRA, ASIC and evolving Privacy Act obligations around automated decision-making, AI systems moving to production without governance documentation are not just operationally risky. They may be non-compliant from day one.

Why Do AI Pilots Fail? Five Root Causes

The research on AI project failure is clear and remarkably consistent across studies. Almost none of the primary causes are technical.

No governance ownership. When a pilot shows promise, the question of who owns it in production is frequently unanswered. Engineering teams do not want operational accountability. Business units do not understand the technical requirements. Governance teams were not involved from the start. Nobody owns the path forward, so the pilot quietly dies.

No value baseline. Most pilots are approved on qualitative enthusiasm without a defined value hypothesis, success metrics or measurement baseline. When it comes time to justify production investment, there is nothing to measure against. Analysis of 2,400 enterprise AI initiatives found that 73 per cent of failed projects lacked clear metrics from the outset.

No risk policy. Moving an AI system from pilot to production requires understanding its risk profile: what decisions it makes, what data it accesses, what the failure modes are and who is accountable. Organisations without a risk framework cannot answer these questions, so the approval process stalls indefinitely.

Data quality below production threshold. Pilots often run on curated datasets that do not reflect the messiness of real operational data. Moving to production exposes data quality issues that the pilot masked, and the cost of remediation is underestimated or left out of scope entirely.

No operating model for ongoing governance. A pilot is a contained experiment. A production AI system is an ongoing business asset requiring monitoring for drift, review when outputs change and clear accountability when something goes wrong. Organisations without an AI operating model have no structure to absorb that responsibility.

What Production-Ready AI Actually Requires

Here is a finding worth sitting with: successful AI projects do not spend less than failed ones. They spend differently. Projects that reached production allocated 47 per cent of their budget to foundations, covering data quality, governance and change management, versus just 18 per cent for failed projects.

What this tells you is that the organisations succeeding at AI deployment are not cutting corners on governance to move faster. They are treating governance as the investment that makes speed sustainable.

Production-ready AI requires five things that pilots routinely skip.

Clear business ownership. Someone in a business unit must be accountable for what the system does in production: not just whether it performed well in a controlled environment, but its real-world decisions and their consequences.

A documented risk assessment. It categorises the AI system by the nature of its decisions, the data it accesses and its potential for harm. This is the minimum required to make a defensible production decision.

A governance workflow. It defines how the system will be monitored, audited and updated. AI systems drift: outputs change as data changes. A production AI system with no monitoring workflow is a risk event waiting to happen.

Defined performance metrics tied to business outcomes. Not model accuracy. Business impact. How much did this reduce cost? How much did this improve decision quality? These metrics must be defined before deployment, not after.

A change management plan. MIT found that resistance to adopting new tools was the leading barrier to AI scaling, ranking above infrastructure, regulation and talent. People who will work with the AI system need to understand it, trust it and know what to do when it behaves unexpectedly.
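One way to make these five requirements operational is to treat them as a gate that every pilot must clear before promotion. The Python sketch below assumes a hypothetical `PilotRecord` structure; its field names and the `readiness_gaps` helper are illustrative, not part of any Trusenta product or published standard, and each boolean stands in for evidence (a signed-off document, a named owner) that a real process would verify.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class PilotRecord:
    """Hypothetical record of a pilot under review; field names are illustrative."""
    name: str
    business_owner: Optional[str] = None    # accountable person in a business unit
    risk_assessment_done: bool = False      # documented risk assessment completed
    monitoring_workflow: bool = False       # monitoring, audit and update workflow defined
    business_metrics: list[str] = field(default_factory=list)  # outcome metrics set before deployment
    change_plan: bool = False               # change management plan in place


def readiness_gaps(pilot: PilotRecord) -> list[str]:
    """Return the production-readiness requirements the pilot has not yet met."""
    gaps = []
    if not pilot.business_owner:
        gaps.append("clear business ownership")
    if not pilot.risk_assessment_done:
        gaps.append("documented risk assessment")
    if not pilot.monitoring_workflow:
        gaps.append("governance workflow")
    if not pilot.business_metrics:
        gaps.append("business-outcome metrics")
    if not pilot.change_plan:
        gaps.append("change management plan")
    return gaps


# Illustrative pilot: it has an owner and a metric, but nothing else yet.
pilot = PilotRecord(
    name="invoice triage",
    business_owner="Head of Accounts Payable",
    business_metrics=["cost per invoice processed"],
)
print(readiness_gaps(pilot))
# → ['documented risk assessment', 'governance workflow', 'change management plan']
```

An empty list means all five requirements are met; anything else names what still blocks promotion. Returning the gaps rather than a single pass/fail flag gives the accountable business owner a concrete to-do list.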

The Operating Model Changes That Unlock Scale

Most organisations approach AI deployment the way they approach software procurement: choose a vendor, configure the tool, train the staff and move on. AI does not work this way.

AI systems require ongoing governance: monitoring for drift, auditing for bias and reviewing when the business context changes. They need someone accountable for their outputs, not just their initial configuration. And they need to fit into an operating model that treats AI management as an ongoing business activity, not a one-time technology implementation.

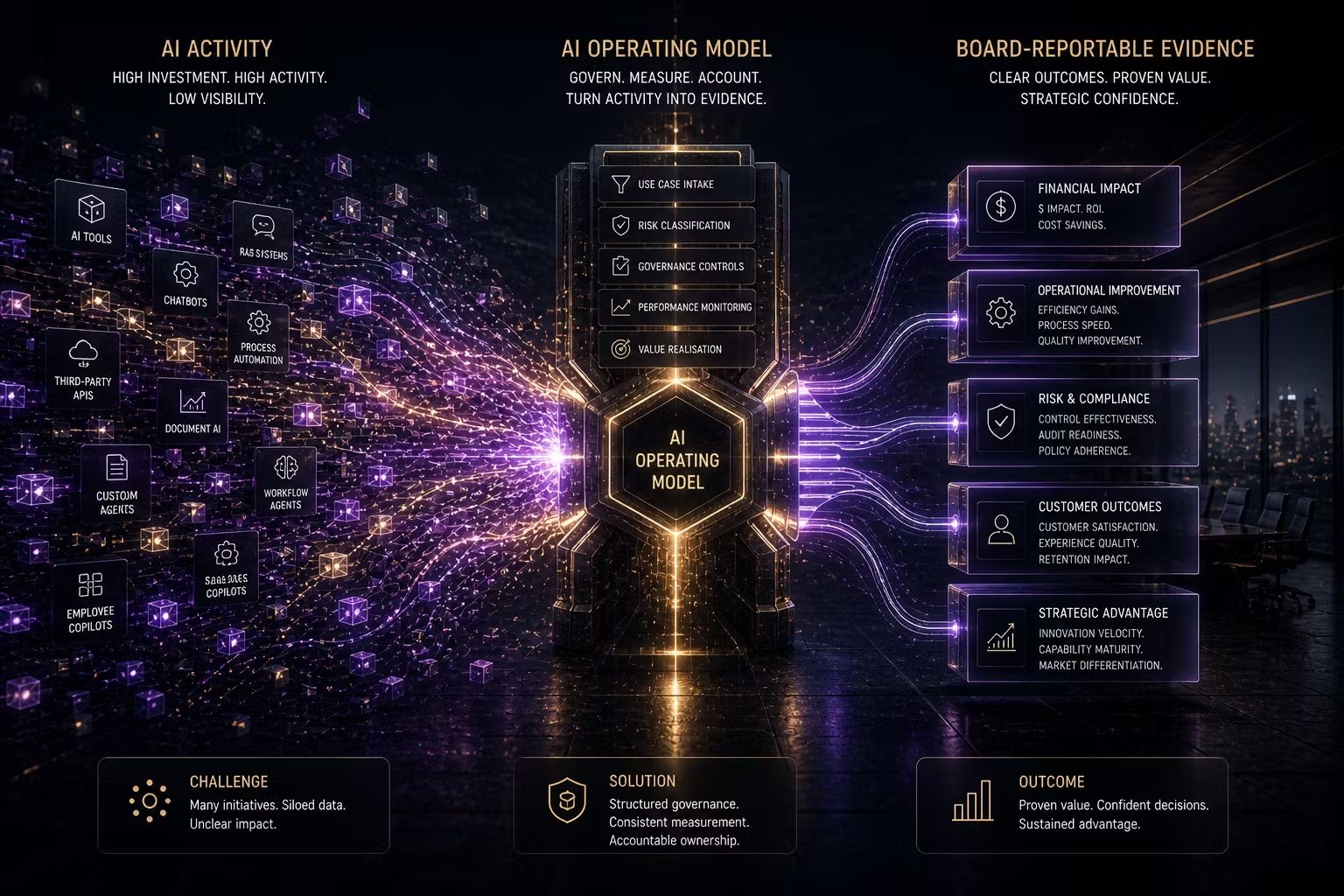

The organisations successfully scaling AI across the enterprise have made three operating model changes. They have created structured intake and approval processes that take a pilot from experiment to approved production system with defined checkpoints and clear accountability at each stage. They have distributed governance accountability so business units own their AI systems rather than delegating entirely to IT. And they have established portfolio-level visibility: a central view of what is in production, what is in assessment and what has been declined and why.

That last change matters more than most people expect. Portfolio visibility is what allows leadership to distinguish between AI activity and AI value, which is exactly the question boards are now asking.
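Portfolio visibility can start as something as simple as a shared register. A minimal Python sketch, assuming a hypothetical `UseCase` record with the three stages named earlier in this section (in production, in assessment, declined); the class names and example entries are illustrative only. It enforces one rule from that description: a declined use case must record why.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Stage(Enum):
    ASSESSMENT = "in assessment"
    PRODUCTION = "in production"
    DECLINED = "declined"


@dataclass
class UseCase:
    """Hypothetical portfolio entry; fields are illustrative."""
    name: str
    owner: str  # accountable business unit
    stage: Stage
    decline_reason: Optional[str] = None  # required when stage is DECLINED

    def __post_init__(self) -> None:
        # A declined use case must record why, so the decision stays auditable.
        if self.stage is Stage.DECLINED and not self.decline_reason:
            raise ValueError(f"{self.name}: declined use cases need a reason")


def portfolio_summary(portfolio: list[UseCase]) -> dict[str, int]:
    """Count use cases at each stage, including stages with no entries."""
    counts = Counter(uc.stage for uc in portfolio)
    return {stage.value: counts.get(stage, 0) for stage in Stage}


# Illustrative entries only.
portfolio = [
    UseCase("invoice triage", "Finance", Stage.PRODUCTION),
    UseCase("contract summarisation", "Legal", Stage.ASSESSMENT),
    UseCase("automated claims approval", "Operations", Stage.DECLINED,
            decline_reason="risk assessment incomplete"),
]
print(portfolio_summary(portfolio))
# → {'in assessment': 1, 'in production': 1, 'declined': 1}
for uc in portfolio:
    if uc.stage is Stage.DECLINED:
        print(f"{uc.name}: {uc.decline_reason}")
```

Counting every stage, including empty ones, keeps the board-level summary stable from one reporting period to the next, and the recorded decline reasons give leadership the "why" alongside the "what".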

A Practical 90-Day Path From Pilot to Governed Production

The 90-day path is not about speed for its own sake. It is about building the governance process so that subsequent deployments move through it faster.

Month 1: Governance sprint. Define the risk classification framework, ownership model and intake process. Establish the principle that no new pilots are funded without a value hypothesis and defined success metrics. Apply this retrospectively to pilots currently in flight.

Month 2: Production pathway. Apply the governance framework to the two or three pilots with the highest business value potential. Conduct structured risk assessments. Define the production monitoring plan. Identify and close data quality gaps before they become production incidents.

Month 3: Controlled deployment. Deploy the strongest candidate to production with full governance documentation, monitoring in place and a defined review cadence. Use this as the template for the next cohort. Document what worked and what did not.

Once the pathway is established and working, the governance process that took three months to build accelerates every deployment that follows.

What This Means for Your Organisation

At Trusenta, the pattern is consistent: organisations stuck in pilot purgatory have confused experimentation with deployment. They have built the capability to run pilots but not the capability to govern production AI systems. Those are different capabilities, and only one of them generates verifiable business value.

The 90-day path outlined here is a governance-first approach that creates the conditions for AI to move from interesting experiment to verified business asset. It requires investment in operating model design, accountability structures and risk frameworks. That investment pays back in every subsequent deployment that moves through the pathway faster and with greater confidence.

Key Takeaways

- 95% of enterprise generative AI pilots deliver no measurable P&L impact; 88% of AI pilots never reach production at all

- The dominant failure causes are governance gaps (no ownership, no value baseline and no risk policy), not technology limitations

- Successful AI projects allocate 47% of budget to foundations (data quality, governance and change management) versus 18% for failed projects

- Production-ready AI requires defined business ownership, a documented risk assessment, a governance monitoring workflow, business-outcome metrics and a change management plan

- The organisations successfully scaling AI have created structured intake processes, distributed business accountability and portfolio-level visibility

- A governance-first 90-day path can move the strongest pilots to governed production while building the operating model for scale

How Trusenta Can Help

AI Governance: Trusenta's AI Governance product provides the structured use-case intake, risk assessment and portfolio tracking that organisations need to take AI pilots through a defined, defensible path to production, turning the governance process from a manual coordination exercise into a systematic workflow.

AI Governance Foundations: For organisations at the start of this journey, this 10-day engagement establishes the essential governance infrastructure (intake processes, risk classification and accountability structures) that makes production deployment possible in the first place.

AI Strategy Enterprise: For large organisations with fragmented pilots across business units, this engagement develops the prioritisation framework and governance structures needed to identify which pilots deserve production investment and how to align the organisation behind a coherent path forward.

Conclusion

Pilot purgatory is not an inevitable feature of enterprise AI. It is a symptom of deploying AI without the governance infrastructure to take it anywhere. The organisations breaking out of it are not those with better technology. They are those with clearer ownership, more disciplined use-case selection and operating models designed to take AI all the way from idea to governed, measured production. The path through pilot purgatory runs directly through governance maturity.

Author

Mark brings a rare blend of C-suite leadership and hands-on consulting experience to Trusenta. As a former SVP of Services, SVP of Business Operations, Managing Director and CIO, he has broad experience in his specialty: guiding organisations through AI strategy, governance and adoption, and bridging ambition with practical execution. His focus is on helping clients embed AI responsibly, at scale and in service of real business outcomes.

https://www.linkedin.com/in/consult-mmiller/