
The AI Agent Identity Crisis: Why Autonomous AI Systems Are Your Biggest Ungoverned Risk Right Now

Most enterprises can say how many humans have access to their critical systems. Few can say the same about their AI agents. With 80.9% of technical teams now testing or running AI agents in production, and only 14.4% of those agents going live with full security and IT approval, the agent identity governance gap is a live risk. This post examines what makes AI agents uniquely difficult to govern and what organisations need to put in place before regulators do it for them.

March 24, 2026


Most organisations can tell you how many human users have access to their critical financial systems. Very few can tell you how many AI agents do.

That single observation captures the governance gap that is now the most urgent security and compliance challenge in enterprise AI. In January and February 2026, the National Institute of Standards and Technology (NIST) launched a formal AI Agent Standards Initiative, including a request for information on AI agent security and a concept paper on agent identity and authorisation. More than 930 organisations and individuals responded. The urgency is not theoretical.

According to the Gravitee State of AI Agent Security 2026 report, 80.9 per cent of technical teams have moved past planning into active testing or production of AI agents. Only 14.4 per cent of those agents went live with full security and IT approval. The majority of AI agents running in enterprise environments today are operating without the identity governance controls that organisations apply as standard to human users.

What Makes AI Agents Fundamentally Different From Traditional Software

Traditional software runs deterministic logic. You can predict its outputs from its inputs. It operates within defined boundaries and its behaviour does not change based on context, conversation history or intermediate reasoning.

AI agents are different in three ways that matter profoundly for governance.

They have no stable identity. A traditional software system has a defined identity: a service account with specific credentials and permissions. AI agents can be ephemeral, spun up for a task, operating across multiple systems and then terminated. Their identity may not be consistently tracked across the systems they touch. When an agent accesses your financial system, your HR platform and your customer database in the same session, the audit trail may show three different actors, or none at all.

They have no defined lifecycle. AI agents can be created by other agents, cloned, updated mid-deployment and terminated without formal process. The Gravitee report found that 25.5 per cent of deployed agents can create and task other agents, compounding the identity problem with every new agent spawned.
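To make the lineage problem concrete, here is a minimal Python sketch of one way a registry could track agents that spawn other agents. The `AgentRegistry` and `AgentRecord` names, the string scopes and the rule that a child may hold only a subset of its parent's scopes are illustrative assumptions, not features of any particular product or of the Gravitee report.

```python
from __future__ import annotations

import uuid
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentRecord:
    """Hypothetical registry entry: one distinct identity per agent instance."""
    agent_id: str
    purpose: str
    scopes: frozenset[str]
    parent_id: str | None = None  # None when created by a human or pipeline


class AgentRegistry:
    """Hypothetical in-memory registry that records which agent spawned which."""

    def __init__(self) -> None:
        self._agents: dict[str, AgentRecord] = {}

    def _store(self, record: AgentRecord) -> AgentRecord:
        self._agents[record.agent_id] = record
        return record

    def register_root(self, purpose: str, scopes: set[str]) -> AgentRecord:
        return self._store(AgentRecord(str(uuid.uuid4()), purpose, frozenset(scopes)))

    def spawn_child(self, parent_id: str, purpose: str, scopes: set[str]) -> AgentRecord:
        parent = self._agents[parent_id]  # KeyError: unregistered agents cannot spawn
        # Attenuation: a child may only receive a subset of its parent's scopes.
        excess = set(scopes) - parent.scopes
        if excess:
            raise PermissionError(f"parent lacks scopes: {sorted(excess)}")
        return self._store(
            AgentRecord(str(uuid.uuid4()), purpose, frozenset(scopes), parent_id)
        )

    def lineage(self, agent_id: str) -> list[str]:
        """Agent IDs from this agent back to the root that started the chain."""
        chain: list[str] = []
        current: str | None = agent_id
        while current is not None:
            chain.append(current)
            current = self._agents[current].parent_id
        return chain
```

The subset rule means a spawned agent can never hold more access than the agent that created it, and `lineage` lets an auditor trace any action back to the agent that started the chain.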

They operate with multi-system access by design. An AI agent executing a complex task may legitimately need access to files, databases, APIs and external services. Managing that access with least privilege, just-in-time provisioning and scope limitation is significantly more complex than managing a human user's access profile.

The Specific Governance Gaps Putting Organisations at Risk

NIST's NCCoE concept paper on AI agent identity and authorisation identifies the core problem clearly: organisations need to understand how identity principles, specifically identification, authentication and authorisation, apply to agents to provide appropriate protections. Most do not.

The Gravitee report found that only 47.1 per cent of organisations' AI agents are actively monitored or secured, which means more than half operate without consistent security oversight or audit logging.

Ungoverned identities. If an AI agent does not have a distinct, persistent identity within enterprise systems, organisations cannot attribute its actions, audit its behaviour or detect anomalous activity. You cannot manage what you cannot identify.

Unconstrained access. Many AI agents are provisioned with access that far exceeds what any individual task requires. Without least-privilege controls applied at the agent level, an agent can access data it has no legitimate reason to touch, and that access is often invisible in standard identity and access management reporting.

No lifecycle management. When an agent is no longer needed, or when its task context changes, there is no equivalent of the identity lifecycle management that organisations apply to departing employees or role changes. Agents accumulate access; they rarely have it revoked in a structured way.
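A structured, periodic access review is one way to stop that accumulation. The sketch below assumes a hypothetical `Grant` record that stores when each permission was last exercised, and a 30-day idle threshold chosen purely for illustration; real thresholds would come from your own policy.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Grant:
    """Hypothetical record of one permission held by one agent."""
    agent_id: str
    scope: str
    last_used: datetime | None  # None if the permission was never exercised


def review_grants(
    grants: list[Grant],
    active_agents: set[str],
    now: datetime,
    max_idle: timedelta = timedelta(days=30),  # illustrative threshold
) -> tuple[list[Grant], list[Grant]]:
    """Split grants into (keep, revoke) for a periodic agent access review."""
    keep: list[Grant] = []
    revoke: list[Grant] = []
    for grant in grants:
        idle = grant.last_used is None or now - grant.last_used > max_idle
        # Revoke if the agent has been decommissioned or the access is unused.
        if grant.agent_id not in active_agents or idle:
            revoke.append(grant)
        else:
            keep.append(grant)
    return keep, revoke
```

Running a review like this on a schedule gives agents the equivalent of the leaver and role-change process applied to employees: access that is no longer used, or that belongs to an agent no longer in service, is removed rather than left standing.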

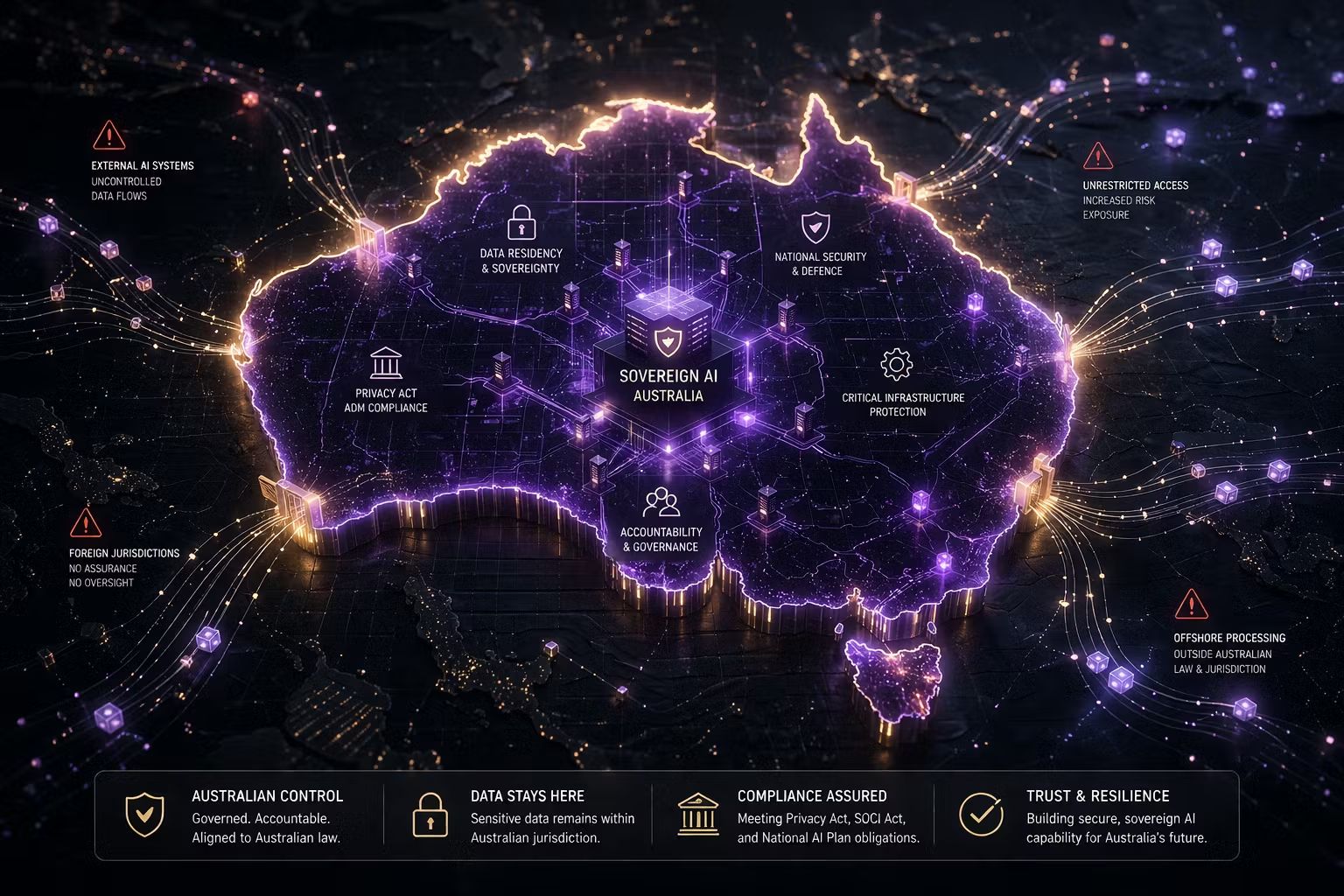

In Australia, the regulatory framework, including Privacy Act obligations, APRA prudential standards and the emerging AI ethics framework, creates specific accountability requirements for automated systems that AI agent governance must directly address.

Real Risk Scenarios Organisations Are Ignoring

NIST's Federal Register notice on AI agent security explicitly identifies prompt injection as a live concern. Prompt injection occurs when malicious content in a data source the agent reads directs it to take unintended actions. For example, an agent instructed to summarise customer documents can be directed by content within those documents to exfiltrate data, send unauthorised communications or modify records.

Privilege escalation is a related concern. An agent with broad enterprise access can be manipulated into accessing data beyond the scope of its intended task. If access was not scoped to the minimum required, the attack surface is the full extent of the agent's permissions, which may span multiple critical systems.

Unintended autonomous action is subtler but equally serious. An agent designed to draft responses for human review may, in certain workflow configurations, begin sending those responses directly. These are not cyberattacks. They are governance failures, and they are indistinguishable from deliberate attacks if audit logging is not in place.
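One mitigation is to enforce the review step outside the agent, so that a change in workflow configuration cannot quietly skip it. The sketch below is a hypothetical approval gate, assuming actions are submitted as named types (`draft_email`, `send_email`) and that the organisation configures which types need human sign-off; it is not drawn from any specific agent framework.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class ActionRequest:
    agent_id: str
    action: str   # hypothetical action names, e.g. "draft_email", "send_email"
    payload: str


class ApprovalGate:
    """Hypothetical gate enforced outside the agent, so agent config cannot skip review."""

    def __init__(self, requires_approval: frozenset[str]) -> None:
        self.requires_approval = requires_approval
        self.pending: list[ActionRequest] = []
        # Each executed action is stored with the reviewer who approved it (or None).
        self.executed: list[tuple[ActionRequest, str | None]] = []

    def submit(self, request: ActionRequest) -> str:
        if request.action in self.requires_approval:
            self.pending.append(request)
            return "pending_review"
        self.executed.append((request, None))
        return "executed"

    def approve(self, request: ActionRequest, reviewer: str) -> None:
        # A held action runs only after approve() is called with a reviewer's name.
        self.pending.remove(request)  # ValueError if it was never queued
        self.executed.append((request, reviewer))
```

Because `approve` stores the reviewer alongside the executed action, a reviewed send can later be distinguished from one that never needed review.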

How to Govern AI Agents

The answer begins with applying the same identity and access management discipline to agents that organisations already apply to humans, and then extending it to cover agent-specific risks. NIST's concept paper proposes four foundational categories of control.

Identification. Every AI agent must have a distinct, persistent identity within the enterprise architecture, with metadata about its purpose, scope and authorised actions. Identity must be specific enough to distinguish agents with different tasks, not a shared service account across all agentic activity.
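As an illustration, a minimal identity record might look like the Python sketch below. The field names (`owner`, `purpose`, `authorised_actions`) and the `IdentityRegistry` class are hypothetical; the point is that each identity is unique, carries its governance metadata and lets an action be attributed to an accountable owner.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class AgentIdentity:
    """Hypothetical per-agent identity record with governance metadata."""
    agent_id: str                      # unique, persistent identifier
    owner: str                         # accountable human or team
    purpose: str                       # the task this identity exists for
    authorised_actions: frozenset[str]

    def __post_init__(self) -> None:
        if not self.agent_id or not self.owner or not self.purpose:
            raise ValueError("agent_id, owner and purpose are all required")


class IdentityRegistry:
    def __init__(self) -> None:
        self._by_id: dict[str, AgentIdentity] = {}

    def register(self, identity: AgentIdentity) -> None:
        # Refuse to reuse an ID, so agents with different tasks cannot
        # share one identity the way they might share a service account.
        if identity.agent_id in self._by_id:
            raise ValueError(f"agent_id {identity.agent_id!r} already registered")
        self._by_id[identity.agent_id] = identity

    def attribute(self, agent_id: str, action: str) -> str:
        """Return the accountable owner if the action is authorised for this agent."""
        identity = self._by_id.get(agent_id)
        if identity is None:
            raise PermissionError(f"unknown agent {agent_id!r}")
        if action not in identity.authorised_actions:
            raise PermissionError(f"{agent_id} is not authorised for {action!r}")
        return identity.owner
```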

Authentication. Agents must prove their identity to the systems they access, using standards-based mechanisms such as OAuth 2.0 that can be consistently enforced and audited. Key management, covering issuance, rotation and revocation, must be explicitly defined.
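For a non-human client such as an agent, the OAuth 2.0 client credentials grant (RFC 6749, section 4.4) is the usual fit. The sketch below builds, but does not send, such a token request using only the Python standard library. The token endpoint URL, client ID and scope names are placeholders for whatever your identity provider actually issues.

```python
import base64
import urllib.parse
import urllib.request

# Placeholder identity-provider endpoint; substitute your IdP's token URL.
TOKEN_URL = "https://idp.example.com/oauth2/token"


def build_token_request(client_id: str, client_secret: str,
                        scopes: list[str]) -> urllib.request.Request:
    """Build (but do not send) an OAuth 2.0 client credentials token request."""
    # HTTP Basic client authentication; RFC 6749 section 2.3.1 form-urlencodes
    # the ID and secret before they are base64-encoded.
    raw = f"{urllib.parse.quote_plus(client_id)}:{urllib.parse.quote_plus(client_secret)}"
    basic = base64.b64encode(raw.encode("utf-8")).decode("ascii")
    body = urllib.parse.urlencode({
        "grant_type": "client_credentials",
        "scope": " ".join(scopes),  # space-delimited scope list (RFC 6749 section 3.3)
    }).encode("ascii")
    return urllib.request.Request(
        TOKEN_URL,
        data=body,
        method="POST",
        headers={
            "Authorization": f"Basic {basic}",
            "Content-Type": "application/x-www-form-urlencoded",
        },
    )
```

A client secret keeps the example short. Asymmetric client authentication, such as OpenID Connect's `private_key_jwt` method or mutual TLS (RFC 8705), avoids sharing a secret with the identity provider, which tends to make issuance, rotation and revocation easier to manage as an explicit process.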

Authorisation. Zero-trust principles apply: every agent action should be authorised as if it were a new request. Just-in-time, scoped permissions that expire at task completion are the target architecture. Standing permissions with broad scope are the vulnerability.
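A minimal sketch of that target architecture, assuming a hypothetical in-memory `JitAuthoriser`: each grant is bound to one agent and one task, expires after a time-to-live, is revoked when the task completes and is re-checked on every action. A production deployment would delegate this to an identity provider or policy engine rather than an in-process dictionary.

```python
from __future__ import annotations

import secrets
import time
from collections.abc import Callable
from dataclasses import dataclass


@dataclass
class TaskGrant:
    """Hypothetical just-in-time grant bound to one agent and one task."""
    token: str
    agent_id: str
    task_id: str
    scopes: frozenset[str]
    expires_at: float
    revoked: bool = False


class JitAuthoriser:
    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._clock = clock  # injectable so expiry can be tested
        self._grants: dict[str, TaskGrant] = {}

    def issue(self, agent_id: str, task_id: str, scopes: set[str],
              ttl_seconds: float = 300.0) -> TaskGrant:
        grant = TaskGrant(secrets.token_urlsafe(16), agent_id, task_id,
                          frozenset(scopes), self._clock() + ttl_seconds)
        self._grants[grant.token] = grant
        return grant

    def complete_task(self, task_id: str) -> None:
        # Permissions end with the task instead of lingering as standing access.
        for grant in self._grants.values():
            if grant.task_id == task_id:
                grant.revoked = True

    def authorise(self, token: str, agent_id: str, scope: str) -> bool:
        # Every action is checked afresh against a live, unexpired, in-scope grant.
        grant = self._grants.get(token)
        return (
            grant is not None
            and not grant.revoked
            and grant.agent_id == agent_id
            and scope in grant.scopes
            and self._clock() < grant.expires_at
        )
```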

Logging and transparency. Every trigger, input, decision and action of an AI agent must be captured in an immutable audit trail. Without this, post-incident investigation is impossible and regulatory compliance in financial services and healthcare cannot be demonstrated. The stakes are already visible: Gravitee's 2026 report puts the rate of incidents related to AI agent activity among healthcare organisations at 92.7 per cent. Security leaders have noticed, too: identity assurance for AI ranked as the second-highest CISO priority heading into 2026, scoring 4.46 out of 5 in the IANS research community poll.
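An append-only log in which each entry includes the hash of the previous one is a common way to make an audit trail tamper-evident. The Python sketch below assumes a hypothetical `AuditTrail` class and JSON-serialisable entries; it illustrates the hash-chaining technique, not any specific logging product.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(entry: dict) -> str:
    # Canonical JSON (sorted keys, no whitespace) so identical entries hash identically.
    payload = json.dumps(entry, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class AuditTrail:
    """Hypothetical append-only log in which each entry commits to the one before it."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def record(self, agent_id: str, kind: str, detail: str) -> dict:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        entry = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent_id,
            "kind": kind,  # e.g. "trigger", "input", "decision", "action"
            "detail": detail,
            "prev_hash": prev,
        }
        entry["hash"] = _digest(entry)
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; an edited or reordered entry, or one deleted
        from the middle of the log, breaks the chain."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True
```

Hash chaining makes tampering detectable rather than impossible: someone with write access could still drop the newest entries or rebuild the whole chain. Periodically copying the latest hash to write-once storage or an external system closes that gap.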

ISO 42001 and NIST AI RMF: What Your Obligations Already Require

Organisations pursuing ISO 42001 certification or operating under the NIST AI Risk Management Framework already have the governance intent to address AI agent identity. The question is whether that intent has been operationalised at the agent level.

ISO 42001 requires organisations to define controls for their AI systems, including access management and accountability structures. The standard extends to any AI system deployed within the organisational context, including agents. If your ISO 42001 implementation has not explicitly addressed AI agent identity and access, it has a material gap.

The NIST AI RMF's GOVERN, MAP and MEASURE functions all apply directly. The GOVERN function requires clear accountability for AI system behaviour, which is impossible without stable agent identity. The MAP function requires identifying context and risk, which is impossible without lifecycle visibility. The MEASURE function requires ongoing monitoring, which is impossible without comprehensive audit logging.

Organisations building towards AI governance maturity in Australia need to ensure their governance frameworks explicitly address agentic AI, not just traditional AI systems. The regulatory environment is moving in this direction whether organisations are ready or not.

What This Means for Your Organisation

At Trusenta, we are observing the same inflection point in AI agent governance that cybersecurity went through when the industry recognised that network perimeter defence was insufficient for a distributed, identity-based world. The perimeter failed. Identity became the new control layer.

The same transition is underway for AI agents. Agents are being created by other agents, provisioned through automated pipelines and operating across system boundaries that traditional access control was never designed to span. The organisations building identity governance for their AI agents now, before a privilege escalation or unintended autonomous action becomes a regulatory incident, will be significantly better positioned than those treating this as tomorrow's problem.

Key Takeaways

- 80.9% of technical teams have AI agents in active testing or production; only 14.4% of those agents went live with full security and IT approval

- AI agents are fundamentally different from traditional software: no stable identity, no defined lifecycle and multi-system access by design

- Over half of deployed AI agents operate without consistent security oversight or audit logging

- NIST launched its formal AI Agent Standards Initiative in February 2026, with identity and authorisation as core areas of focus

- The four foundational controls are identification, authentication, least-privilege authorisation and immutable audit logging

- ISO 42001 and NIST AI RMF obligations already extend to AI agents, but most organisations have not operationalised this at the agent level

How Trusenta Can Help

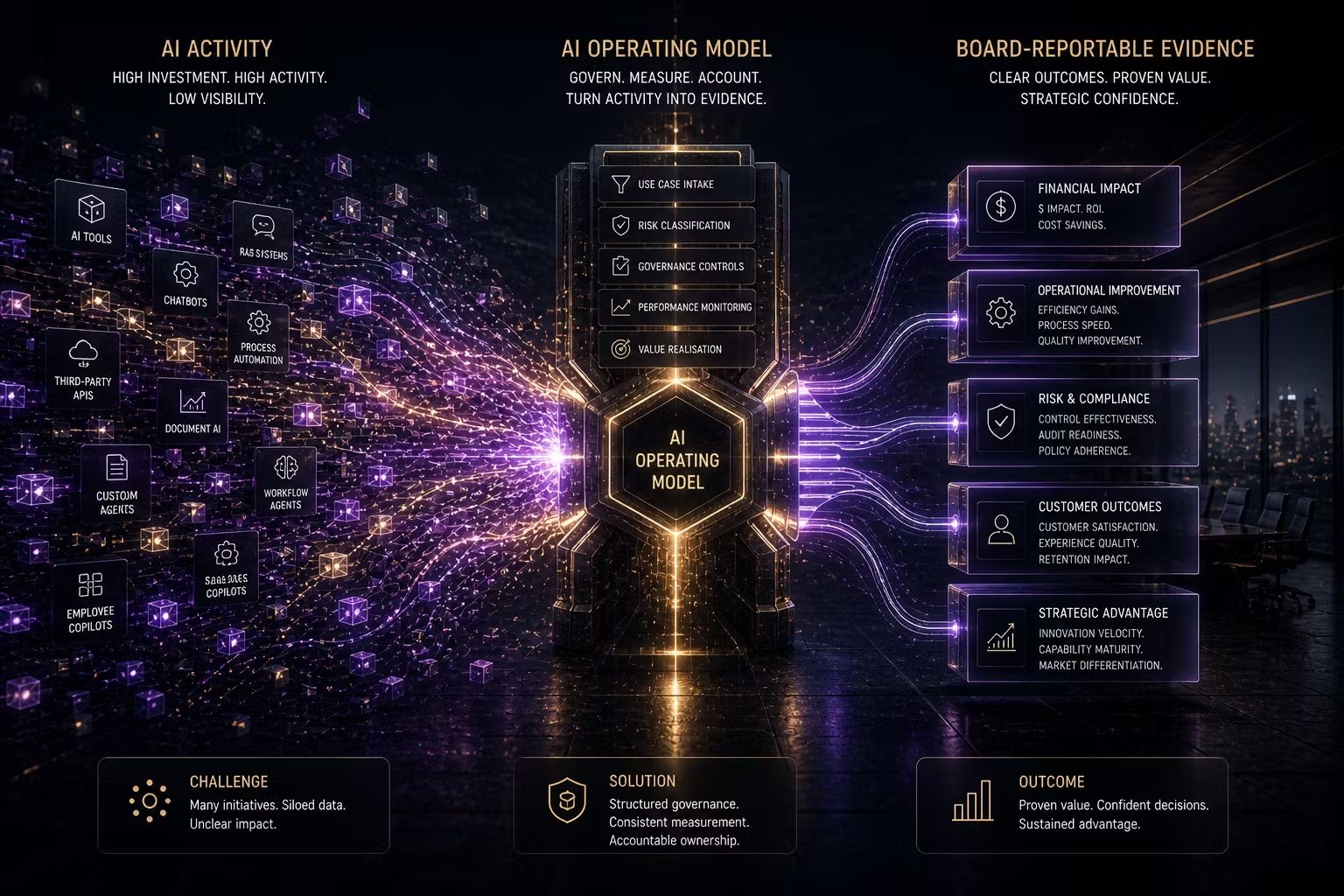

AI Governance: Trusenta's AI Governance product provides the use-case registration and risk assessment infrastructure needed to classify AI agents, document their access requirements and track their deployment status, forming the foundation for any meaningful agent-level governance programme.

Risk Management: Enables organisations to build and maintain an AI-specific risk register that explicitly captures agent-level risks, including prompt injection, privilege escalation and lifecycle management gaps, with documented treatment plans linked to specific controls.

AI Governance Maturity Uplift: For organisations that have foundational governance in place but have not yet operationalised agent-level controls, this engagement designs and implements the frameworks, workflows and TRUSENTA.IO configuration needed to address the AI agent governance gap before it becomes a regulatory or security incident.

Conclusion

The window to get AI agent governance right, before regulators force the issue, is narrowing. NIST is moving, and the Australian regulatory environment is watching. Identity is the new control layer, and for AI agents the time to build it is now.

Author

With over 30 years of experience delivering real technology outcomes, he combines strategic insight with deep technical expertise across enterprise, cloud and AI. At Trusenta, he helps organisations move beyond AI hype to accountable, sustainable impact.

https://www.linkedin.com/in/shanecoetser/