
Enterprise AI Platform Architecture: The Reference Architecture That Actually Scales

Most organisations are building AI capability one pilot at a time, with no shared platform underneath. This post presents a vendor-neutral, six-layer reference architecture for enterprise AI and explains why the governance layer, where codified EA artefacts live and are enforced, is the one most teams forget until it is too late.

March 5, 2026

11 min read

Gartner predicts that by the end of 2027, more than 40% of agentic AI projects will be cancelled. Not because the models were bad. Because organisations tried to scale AI on a foundation of individual pilots, disconnected tools and governance that lived in documents no system ever read. The platform was never built. The governance was never codified. And when the cost and risk of operating without those things became undeniable, the projects were shut down.

This post is about preventing that outcome. It presents a vendor-neutral reference architecture for enterprise AI platforms: six layers, each with a clear purpose, including a governance layer that is not bolted on at the end but sits at the centre of everything.

Why Platformisation Is the Only Path to Scale

Every enterprise AI journey starts with pilots. That is appropriate. Pilots validate ideas, build organisational confidence and generate the evidence needed to justify investment. The problem is not piloting; it is staying in pilot mode.

When each AI use case is built independently, organisations accumulate technical debt at speed. Every team chooses its own model provider, its own orchestration approach, its own monitoring setup and its own security posture. Within eighteen months, the enterprise has dozens of AI deployments with no shared observability, no consistent policy enforcement and no way to understand its aggregate AI risk exposure. The cost of managing that landscape grows faster than the value it produces.

Platformisation solves this. A shared AI platform provides the infrastructure, tooling and governance that individual use cases draw on rather than rebuild. Teams move faster because the foundations already exist. Governance is consistent because it is enforced at the platform level, not left to individual teams to interpret and apply.

The Six-Layer Enterprise AI Platform Reference Architecture

The following reference architecture is vendor-neutral. It describes the layers that need to exist, not the specific products that fill them. The right product choices depend on the organisation's existing technology landscape, cloud posture and procurement constraints.

Layer 1: Model Access

The foundation of any AI platform is a managed, governed interface to the AI models the organisation uses. This is not simply a list of approved model providers. It is an abstraction layer that normalises access, enforces usage policies and captures telemetry for every model call.

A well-designed model access layer allows the organisation to swap model providers without rewriting downstream applications, enforces approved model versions and prevents shadow usage of unapproved models. Every model invocation passes through this layer, which means every invocation is logged, measured and governed.
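As a minimal sketch of that idea, and not a reference to any particular product, the access layer can be pictured as a gateway that holds the approved list and records every call. The names used here (`ModelGateway`, `ModelNotApproved`, `stub_provider`, the `general-chat` model) are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


class ModelNotApproved(Exception):
    """Raised when a caller requests a model/version not on the approved list."""


@dataclass
class ModelGateway:
    # Hypothetical registry: (model name, version) -> provider callable.
    approved: Dict[Tuple[str, str], Callable[[str], str]]
    # In-memory telemetry for illustration; a real platform would ship this elsewhere.
    call_log: List[dict] = field(default_factory=list)

    def invoke(self, team: str, model: str, version: str, prompt: str) -> str:
        record = {"team": team, "model": model, "version": version}
        if (model, version) not in self.approved:
            # Blocked attempts are logged too, so shadow usage stays visible.
            self.call_log.append({**record, "allowed": False})
            raise ModelNotApproved(f"{model}@{version} is not on the approved list")
        output = self.approved[(model, version)](prompt)
        self.call_log.append(
            {**record, "allowed": True,
             "prompt_chars": len(prompt), "output_chars": len(output)}
        )
        return output


def stub_provider(prompt: str) -> str:
    # Stand-in for a real provider client; swapping providers means swapping this callable.
    return f"echo: {prompt}"


gateway = ModelGateway(approved={("general-chat", "v1"): stub_provider})
reply = gateway.invoke("claims-team", "general-chat", "v1", "Summarise this policy")
```

Because downstream applications call `gateway.invoke` rather than a provider SDK, replacing the provider behind `("general-chat", "v1")` would not require changes on the calling side in this sketch.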

Layer 2: Orchestration

The orchestration layer manages the composition of AI capabilities into workflows. For simple use cases this might be a single model call with a structured prompt. For agentic workflows it involves multi-step planning loops, tool invocations and conditional branching based on intermediate outputs.

The orchestration layer is where agent behaviour is defined and bounded: the tools an agent can invoke, the conditions under which it escalates to a human and the maximum number of planning steps it can take before requiring human review. All of these are configured in the orchestration layer and enforced at runtime. They are not suggestions left to individual agent implementations.
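One way to picture runtime enforcement is a planning loop that checks a bounds object on every step. This is an illustrative sketch only: `AgentBounds`, `run_agent` and the toy planners are assumed names, and a real orchestration framework would expose its own equivalents.

```python
from dataclasses import dataclass
from typing import Callable, Dict, FrozenSet, List, Optional, Tuple


@dataclass(frozen=True)
class AgentBounds:
    allowed_tools: FrozenSet[str]  # tools the agent may invoke
    max_steps: int                 # planning steps before human review is required


# A planner returns the next (tool_name, argument) pair, or None when it is finished.
Planner = Callable[[List[str]], Optional[Tuple[str, str]]]


def run_agent(planner: Planner,
              tools: Dict[str, Callable[[str], str]],
              bounds: AgentBounds) -> Tuple[str, List[str]]:
    history: List[str] = []
    for _ in range(bounds.max_steps):
        step = planner(history)
        if step is None:
            return "completed", history
        tool_name, arg = step
        if tool_name not in bounds.allowed_tools:
            # The bound is enforced here, not left to the agent implementation.
            return "escalated: tool not permitted", history
        history.append(tools[tool_name](arg))
    return "escalated: step limit reached", history


tools = {"lookup": lambda q: f"result for {q}", "send_email": lambda body: "sent"}
bounds = AgentBounds(allowed_tools=frozenset({"lookup"}), max_steps=3)


def two_step_planner(history: List[str]) -> Optional[Tuple[str, str]]:
    return ("lookup", f"query {len(history)}") if len(history) < 2 else None


def runaway_planner(history: List[str]) -> Optional[Tuple[str, str]]:
    return ("lookup", "again")  # never finishes on its own


def overreaching_planner(history: List[str]) -> Optional[Tuple[str, str]]:
    return ("send_email", "hello")  # tool exists but is not permitted


status, steps = run_agent(two_step_planner, tools, bounds)
```

In this sketch the well-behaved planner completes, while the runaway and overreaching planners are both stopped by the loop and handed to a human.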

Layer 3: Retrieval and Knowledge

Most enterprise AI use cases require access to organisational knowledge: documents, databases, policies, product information and so on. The retrieval and knowledge layer manages how that knowledge is indexed, maintained and surfaced to AI systems through retrieval-augmented generation (RAG) patterns.

This layer is where data quality and lineage requirements from the AI-readiness foundations (covered in Part 2) are enforced in practice. Only data that meets the AI-readiness standard for its domain can be indexed. Access controls at the retrieval layer enforce the permissions defined in the data architecture. When the knowledge base is updated, the change is governed so that any AI deployments that draw on the updated knowledge are flagged for impact assessment before the update is applied.
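The two gates described above, readiness at indexing time and permissions at query time, can be sketched as follows. The `Document` fields, the role names and the naive keyword match are all assumptions made for illustration; a production retrieval layer would use a proper search or vector index.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_roles: FrozenSet[str]    # permissions taken from the data architecture
    meets_readiness_standard: bool   # outcome of the (hypothetical) AI-readiness check


class KnowledgeIndex:
    def __init__(self) -> None:
        self._docs: List[Document] = []

    def add(self, doc: Document) -> bool:
        # Gate 1: only data that meets the readiness standard can be indexed.
        if not doc.meets_readiness_standard:
            return False
        self._docs.append(doc)
        return True

    def search(self, query: str, caller_roles: FrozenSet[str]) -> List[str]:
        # Gate 2: results are filtered by the caller's roles before they are returned.
        terms = query.lower().split()
        return [
            d.doc_id
            for d in self._docs
            if d.allowed_roles & caller_roles
            and any(t in d.text.lower() for t in terms)
        ]


index = KnowledgeIndex()
index.add(Document("leave-policy", "Annual leave policy", frozenset({"staff", "hr"}), True))
index.add(Document("salary-bands", "Salary bands policy", frozenset({"hr"}), True))
rejected = index.add(Document("old-wiki", "Unverified leave notes", frozenset({"staff"}), False))

staff_hits = index.search("policy", frozenset({"staff"}))
hr_hits = index.search("policy", frozenset({"hr"}))
```

The same query returns different results for different callers, and the document that failed the readiness check never reaches the index at all.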

Layer 4: Evaluation and Safety

The evaluation and safety layer provides the mechanisms for assessing AI output quality and enforcing safety constraints before outputs reach end users or downstream systems. This includes automated evaluation against defined acceptance criteria, content safety filtering and output validation against business rules.

This layer does not replace human review for high-stakes decisions. It handles the volume of routine outputs that do not require human review, freeing human oversight for the decisions that genuinely warrant it. The boundary between automated handling and human escalation is a codified artefact, a policy object that defines the conditions under which outputs must be reviewed before being acted upon.
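A policy object of this kind might look like the sketch below. The fields (`min_eval_score`, `max_auto_amount`, `always_review_categories`) and the thresholds are invented for illustration; the real conditions would come from the organisation's own risk appetite.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class EscalationPolicy:
    min_eval_score: float                  # below this, a human must review
    max_auto_amount: float                 # business-rule ceiling for automated handling
    always_review_categories: FrozenSet[str]


def requires_human_review(policy: EscalationPolicy, *,
                          eval_score: float, amount: float, category: str) -> bool:
    # Any single condition is enough to route the output to a human.
    return (
        category in policy.always_review_categories
        or eval_score < policy.min_eval_score
        or amount > policy.max_auto_amount
    )


policy = EscalationPolicy(
    min_eval_score=0.8,
    max_auto_amount=1000.0,
    always_review_categories=frozenset({"credit-decision"}),
)

routine = requires_human_review(policy, eval_score=0.93, amount=120.0, category="faq-answer")
high_stakes = requires_human_review(policy, eval_score=0.99, amount=50.0, category="credit-decision")
```

Keeping the thresholds in a frozen object rather than scattered through application code means a change to the boundary is a change to one governed artefact.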

Layer 5: Monitoring and Audit

The monitoring and audit layer provides the operational observability that the AI-readiness foundations require. It captures model invocation traces, evaluation metric timeseries, cost telemetry and drift signals. It surfaces anomalies, triggers alerts and provides the audit trail that regulatory compliance and internal governance require.

Critically, this layer is not built after deployment. It is operational before the first AI use case goes live. Teams that instrument monitoring retrospectively consistently discover that the data they need to understand historical AI behaviour was never captured. The audit trail cannot be reconstructed after the fact.
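To make the idea of a drift signal concrete, here is a deliberately simple heuristic over an evaluation metric timeseries: compare the mean of the most recent scores with the mean of an early baseline window. The function name, window sizes and threshold are assumptions; real platforms typically use more robust statistical tests.

```python
from statistics import mean
from typing import Sequence


def drift_alert(scores: Sequence[float], baseline_n: int, recent_n: int,
                max_drop: float) -> bool:
    """Return True when the recent mean has fallen more than max_drop below baseline."""
    if len(scores) < baseline_n + recent_n:
        # Not enough history yet. This is why capture has to start before go-live.
        return False
    baseline = mean(scores[:baseline_n])
    recent = mean(scores[-recent_n:])
    return (baseline - recent) > max_drop


stable_scores = [0.9, 0.9, 0.9, 0.9, 0.9, 0.9]
degrading_scores = [0.9, 0.9, 0.9, 0.9, 0.7, 0.7]

stable_alert = drift_alert(stable_scores, baseline_n=4, recent_n=2, max_drop=0.1)
degrading_alert = drift_alert(degrading_scores, baseline_n=4, recent_n=2, max_drop=0.1)
```

Note that the function cannot say anything until enough scores have been captured, which mirrors the point above about instrumenting before the first use case goes live.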

Layer 6: Governance and Standards

This is the layer most teams think about last and should think about first. The governance and standards layer is where the codified EA artefacts live: the data standards, identity patterns, policy objects and approved technology choices that every other layer is bound by.

When a delivery team wants to use a model provider not on the approved list, the governance layer surfaces the relevant policy object and triggers the change workflow. When an agent's tool permissions are proposed to expand, the governance layer assesses the impact against the identity standard and routes to human approval. When a new AI use case is registered, the governance layer checks it against the current architecture standards before the use case is approved to proceed to design.

This layer is the operating rules of the AI platform. Every other layer enforces policies that this layer defines. Human-in-the-loop workflows are triggered when those policies are challenged. Changes to the policies are governed through the same change management process as changes to any other critical architecture artefact.
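The registration check described above can be sketched as a comparison between a proposed use case and the current standards. `ArchitectureStandards`, `UseCaseRegistration` and the example provider and domain names are hypothetical, and the sketch covers only two kinds of standard.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class ArchitectureStandards:
    approved_providers: FrozenSet[str]
    approved_data_domains: FrozenSet[str]


@dataclass(frozen=True)
class UseCaseRegistration:
    name: str
    provider: str
    data_domains: FrozenSet[str]


def review_registration(reg: UseCaseRegistration,
                        standards: ArchitectureStandards) -> List[str]:
    """Return findings; an empty list means the use case may proceed to design."""
    findings: List[str] = []
    if reg.provider not in standards.approved_providers:
        findings.append(f"provider '{reg.provider}' is not approved")
    # Sorted so findings are reported in a stable order.
    for domain in sorted(reg.data_domains - standards.approved_data_domains):
        findings.append(f"data domain '{domain}' is not approved")
    return findings


standards = ArchitectureStandards(
    approved_providers=frozenset({"provider-a"}),
    approved_data_domains=frozenset({"product", "policy"}),
)

compliant = review_registration(
    UseCaseRegistration("faq-bot", "provider-a", frozenset({"policy"})), standards
)
challenged = review_registration(
    UseCaseRegistration("hr-assistant", "provider-b", frozenset({"policy", "payroll"})), standards
)
```

In this sketch any non-empty list of findings would be what triggers the change workflow and routes the request to human approval.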

The Paved Road Pattern

The most effective way to operationalise this architecture is through what Trusenta calls "golden paths", also known as the paved road: a set of pre-approved, pre-configured deployment patterns that teams can follow to move from idea to production quickly, safely and in full compliance with enterprise standards.

A "golden path" for an AI use case might include: an approved model provider, a configured orchestration template, a retrieval pattern with data access controls pre-applied, evaluation criteria defined for the use case type, monitoring dashboards pre-built and a governance sign-off workflow that runs automatically when the use case is registered. Teams that follow the paved road do not need to engage the Architecture Review Board for every deployment. They are already operating within approved boundaries.

Teams that want to deviate from the "golden path", whether by using a different model, extending agent permissions or accessing data outside the approved scope, trigger the change workflow. The deviation is reviewed, the risk is assessed and a human decision is made. If the deviation is approved, it either becomes part of the paved road (if it is likely to be needed again) or is granted as a specific exception with defined conditions and a review date.
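A minimal way to express this routing is to diff a proposed deployment against the golden path and send anything that differs to the change workflow. The configuration keys and values below are invented for illustration and are much simpler than a real deployment pattern.

```python
from typing import Dict, Tuple

# Hypothetical golden path for one use case type.
GOLDEN_PATH: Dict[str, object] = {
    "model": "general-chat@v1",
    "retrieval_scope": "public-policies",
    "agent_tools": ("lookup",),
}


def deviations(proposed: Dict[str, object],
               golden: Dict[str, object] = GOLDEN_PATH) -> Dict[str, Tuple[object, object]]:
    """Map each deviating setting to (golden value, proposed value)."""
    return {
        key: (golden[key], proposed.get(key))
        for key in golden
        if proposed.get(key) != golden[key]
    }


def route(proposed: Dict[str, object]) -> str:
    # On the paved road: no review board engagement. Off it: human review.
    return "auto-approve" if not deviations(proposed) else "change-workflow"


on_path = dict(GOLDEN_PATH)
off_path = {**GOLDEN_PATH, "agent_tools": ("lookup", "send_email")}

on_path_route = route(on_path)
off_path_route = route(off_path)
off_path_diff = deviations(off_path)
```

The diff itself is useful evidence for the reviewer, since it shows exactly which setting departs from the approved pattern and how.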

This is how governance scales with AI delivery rather than becoming a bottleneck to it.

Build Versus Buy: The Platform Decision Framework

Almost no organisation builds all six layers from scratch. Almost no organisation buys a single platform that covers all six layers well. The realistic approach is a hybrid: cloud-native services for infrastructure-heavy layers (model access, monitoring), purpose-built or open-source tooling for orchestration, and a governance and standards layer that is configured to reflect the organisation's specific architecture standards rather than a vendor's generic defaults.

The decision framework for each layer should consider: existing technology investments and integration cost, the organisation's AI maturity and the speed at which the platform needs to be stood up, the degree to which governance requirements are met out of the box versus requiring significant configuration, and the long-term operational cost of each approach.

What the decision framework should not do is optimise each layer independently. The layers are interdependent. A monitoring solution that does not integrate with the governance layer's policy objects cannot trigger the right alerts. An orchestration layer that does not enforce the permissions defined in the governance layer leaves agent behaviour ungoverned. Platform coherence, the ability of all six layers to work together, is more important than having the best-in-class tool in each individual layer.

The Trusenta Perspective

We work with organisations at every stage of AI platform maturity, from those that have not yet started to those managing dozens of active deployments and feeling the cost of having built them without a platform underneath. The consistent finding is that organisations which invest in the governance and standards layer early spend far less on remediation later. The "golden paths" they build become a competitive advantage: faster delivery, more consistent risk management and a much shorter path from new use case idea to production deployment.

For Australian organisations, the governance layer also serves a compliance function that is growing in importance. As APRA, the OAIC and sector-specific regulators increase their expectations around AI oversight and auditability, the governance layer of the AI platform is what produces the evidence those expectations require.

Key Takeaways

- Scaling AI on a foundation of individual pilots is a path to technical debt, inconsistent governance and mounting operational risk. Platformisation is the only sustainable path to scale.

- A well-designed enterprise AI platform has six layers: model access, orchestration, retrieval and knowledge, evaluation and safety, monitoring and audit, and governance and standards.

- The governance and standards layer is the operating rules of the AI platform. It defines the codified artefacts that every other layer enforces, and triggers human-in-the-loop workflows when those artefacts are challenged.

- The paved road pattern is how governance scales with delivery: pre-approved deployment patterns that teams can follow without ARB engagement, with deviation workflows for anything outside the approved paths.

- Platform coherence, the ability of all six layers to work together, matters more than having the best product in each individual layer.

Coming Up in Part 4: RAG, Agents and the Reality Gap

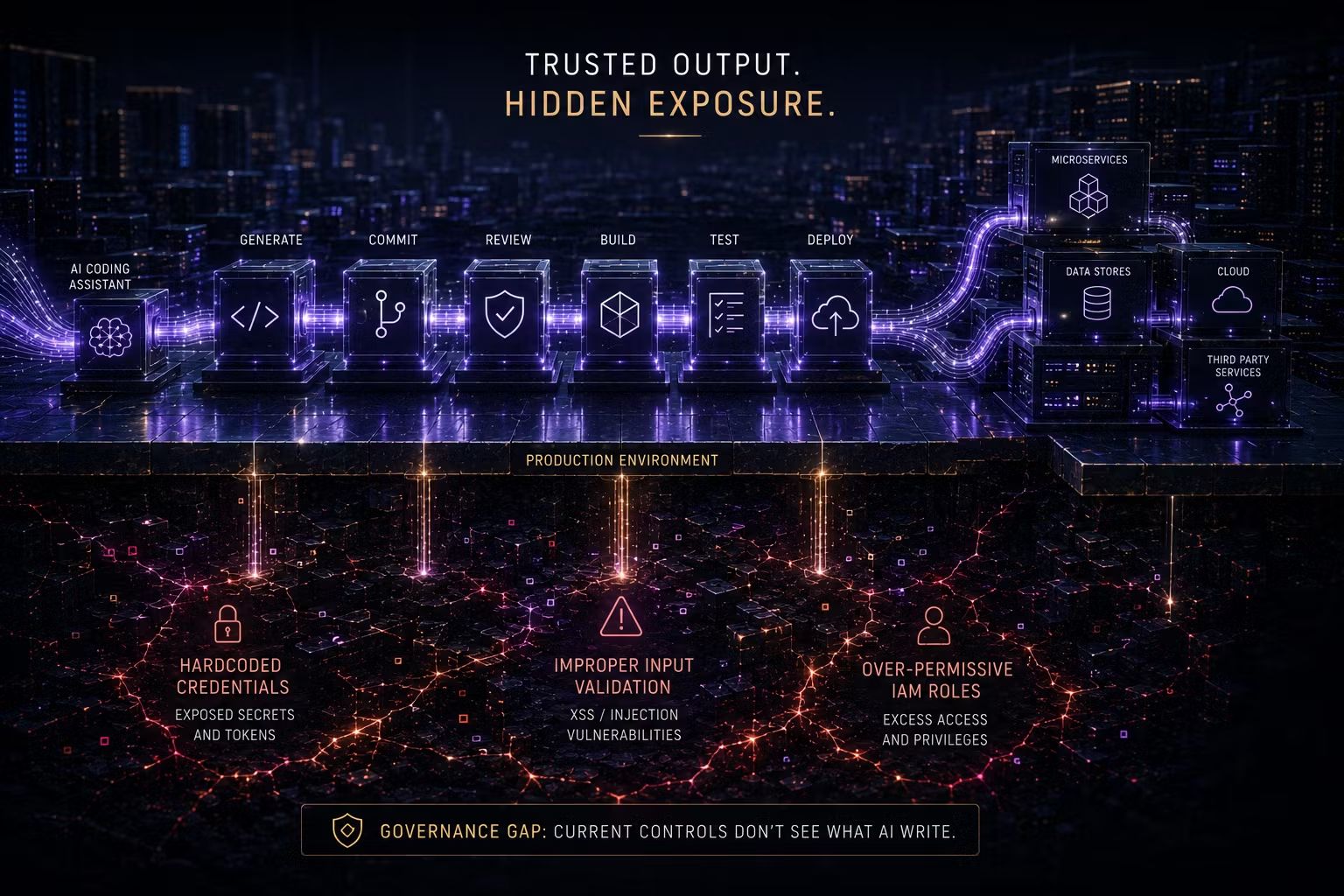

In Part 4, Mark Miller examines the architectural patterns for RAG and agentic AI that actually survive production, including why RAG and agents solve fundamentally different problems (and should not be treated as a maturity progression), how to bound agent behaviour using codified artefacts and what the OWASP Agentic Top 10 means for EA.

How Trusenta Can Help

Enterprise Architecture in the AI Era includes platform architecture assessment and design as a core deliverable: evaluating your current AI tool landscape against the six-layer reference architecture, identifying gaps and producing a platform roadmap that sequences investment for maximum governance and delivery impact.

Enterprise Architecture Function Establishment is for organisations that recognise the AI platform governance challenge but do not yet have the EA function capability to address it. This service establishes the EA function, operating model and initial set of codified artefacts needed to govern an enterprise AI platform from day one.

Author

With over 30 years of experience delivering real technology outcomes, he combines strategic insight with deep technical expertise across enterprise, cloud and AI. At Trusenta, he helps organisations move beyond AI hype to accountable, sustainable impact.

https://www.linkedin.com/in/shanecoetser/