
AI-Ready Enterprise Architecture: The New Non-Negotiables

Most AI projects don't fail because of the model. They fail because the data, identity and observability foundations were never built. This post examines what AI-ready enterprise architecture actually requires and why each requirement must be codified as an enforceable standard, not a best-practice document.

March 1, 2026

8 min read

Here is a finding that surprises almost every leadership team we work with: the majority of AI projects that stall or fail in production do not fail because they chose the wrong model. They fail because the organisation was not architecturally ready for AI. The data was not trustworthy. The access controls were not designed for autonomous agents. The monitoring systems were built for applications, not for probabilistic, non-deterministic AI behaviour. The model was fine. The foundation was not.

This is what AI-ready enterprise architecture actually means: not a technology refresh or an infrastructure upgrade, but a set of foundational capabilities that must exist, and be codified, before AI can be deployed responsibly at scale. In Part 1 of this series we established that EA artefacts must shift from documents to machine-readable objects. This part examines which artefacts matter most and what making them AI-ready actually requires.

What Makes Enterprise Architecture AI-Ready?

There are four non-negotiable foundations. Each has always existed in some form within EA. What has changed is the standard each must now meet, and the fact that meeting it requires the foundation to be codified as structured objects that AI systems, intake workflows and governance processes can actually act on.

The four foundations are: AI-ready data, identity and authorisation patterns for AI, cross-cutting observability and an enforceable policy layer. None of them can live in a document. All of them must be codified.

Foundation 1: AI-Ready Data as an Architectural Requirement

Data readiness is consistently the first failure point in enterprise AI programmes, yet most organisations still treat it as a data team responsibility rather than an EA responsibility. The distinction matters because AI-ready data is an architectural requirement, not a data quality programme.

What does AI-ready data require from an architecture standpoint? Four things: quality, lineage, permissions and metadata. Quality means the data is accurate, consistent and current enough to be used for AI-driven decisions. Lineage means the provenance of every data asset used to train or ground an AI system is traceable. Permissions means data access policies for AI agents are explicitly defined and enforced, not inherited from application-level access controls that were never designed for autonomous, tool-using systems. Metadata means every AI-relevant data asset is documented with its classification, sensitivity, permitted use cases and responsible owner.

Each of these is a codified artefact. A data domain's AI-readiness standard is not a principle in a strategy document. It is a structured object in your architecture repository that an AI use case intake process checks against before a deployment is approved. If the data standard for a proposed use case is not met, the intake workflow routes the use case to remediation before it can progress.
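To make the idea of a codified data standard concrete, here is a minimal Python sketch of an intake check. It assumes a hypothetical `DataReadinessStandard` object per data domain and a hypothetical `DataAsset` registry entry. The field names, and the single staleness threshold standing in for "quality", are illustrative assumptions rather than a prescribed schema.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical structured object: the AI-readiness standard for one data domain.
@dataclass(frozen=True)
class DataReadinessStandard:
    domain: str
    max_staleness_days: int       # simplified proxy for "current enough"
    require_lineage: bool = True

# Hypothetical registry entry for a data asset a use case wants to consume.
@dataclass
class DataAsset:
    name: str
    domain: str
    owner: Optional[str]              # responsible owner (metadata)
    classification: Optional[str]     # e.g. "internal", "personal" (metadata)
    days_since_refresh: int
    has_lineage: bool
    ai_access_policy: Optional[str]   # id of an explicit AI access policy, if any

def readiness_gaps(asset: DataAsset, std: DataReadinessStandard) -> list:
    """Return the unmet requirements for one asset; an empty list means ready."""
    gaps = []
    if asset.days_since_refresh > std.max_staleness_days:
        gaps.append("quality: data is stale")
    if std.require_lineage and not asset.has_lineage:
        gaps.append("lineage: provenance is not traceable")
    if asset.ai_access_policy is None:
        gaps.append("permissions: no explicit AI access policy")
    if asset.owner is None or asset.classification is None:
        gaps.append("metadata: missing owner or classification")
    return gaps

def route_use_case(assets: list, standards: dict) -> tuple:
    """Intake check: 'remediation' if any asset has gaps, otherwise 'proceed'."""
    report = {a.name: readiness_gaps(a, standards[a.domain]) for a in assets}
    decision = "remediation" if any(report.values()) else "proceed"
    return decision, report
```

Consider a CRM asset refreshed 30 days ago with no explicit AI access policy, checked against a 7-day standard. It would be routed to remediation, with its quality and permissions gaps listed. Returning the gaps, not just a yes/no, is what lets the workflow tell the data owner exactly what to fix.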

The NIST AI RMF's March 2025 update placed significant new emphasis on model provenance and data integrity as architectural requirements. For organisations deploying AI in Australia, this is increasingly relevant as regulatory expectations around AI transparency tighten across the financial services and government sectors.

Foundation 2: Identity and Authorisation Patterns for AI Agents

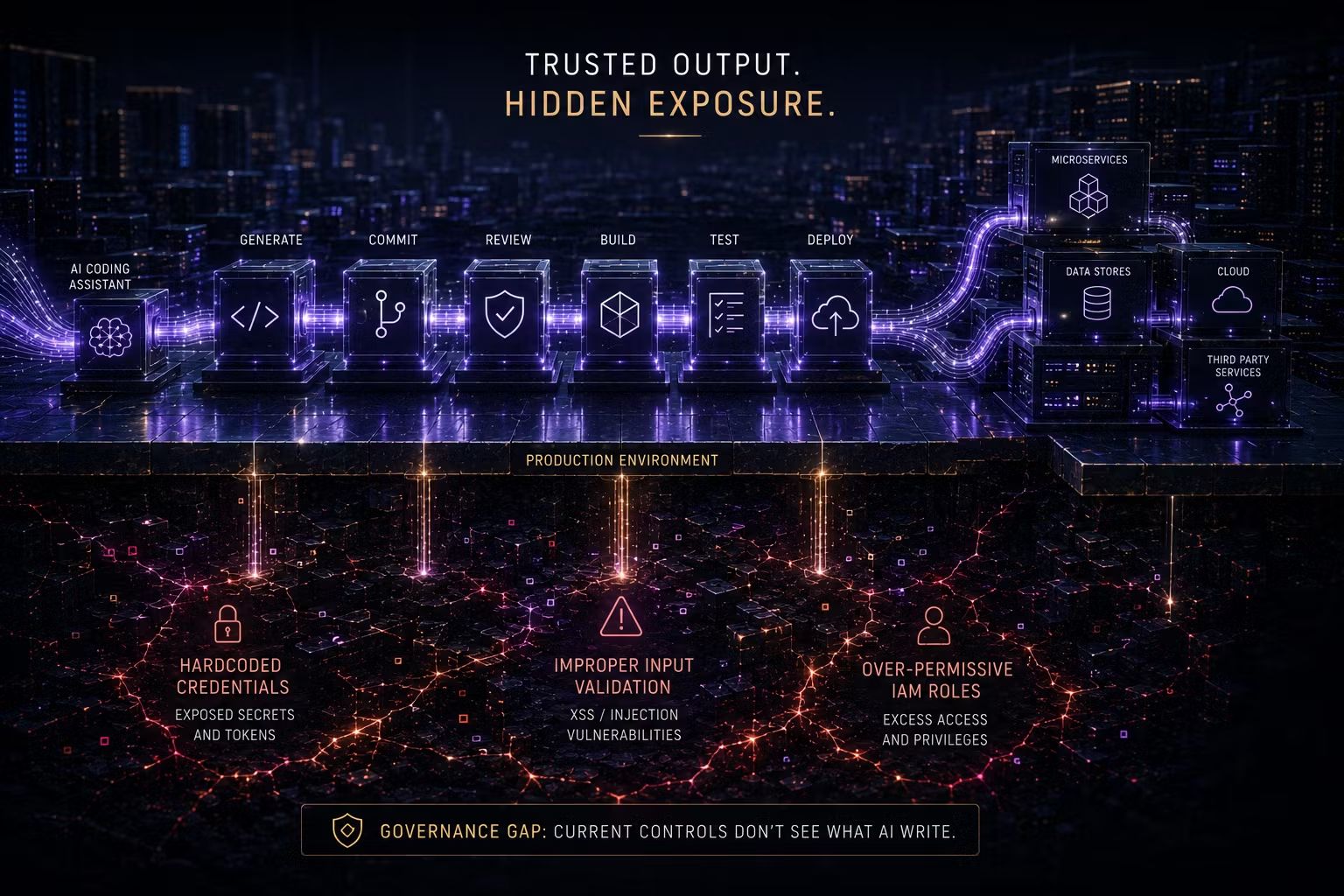

Traditional identity and access management was designed for humans. A person authenticates, receives a set of permissions appropriate to their role and accesses systems within those permissions. This model breaks down when applied to AI agents, and the break is significant.

An AI agent does not have a stable role. It has a task, a set of tools and a planning loop that may invoke those tools in ways that were not explicitly anticipated at design time. EA must codify identity and authorisation patterns for AI agents as explicit architectural standards. This means that each agent has a defined identity separate from the human users it serves; each agent's tool permissions are explicitly scoped to the minimum required for its designated task; every tool invocation is logged with enough detail to reconstruct what the agent did and why; and any deviation from the permitted scope triggers an exception workflow rather than silently failing or, worse, silently succeeding.
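The agent-level pattern above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions. `AgentIdentity`, `invoke_tool`, `ScopeViolation` and the in-memory `AUDIT_LOG` are hypothetical names, and a real deployment would back them with its identity provider and a tamper-evident log store.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical in-memory stand-in for an audit store.
AUDIT_LOG = []

class ScopeViolation(Exception):
    """Raised when an agent calls a tool outside its approved scope."""

@dataclass(frozen=True)
class AgentIdentity:
    agent_id: str             # the agent's own identity, separate from the user's
    on_behalf_of: str         # the human user the agent is serving
    allowed_tools: frozenset  # least-privilege tool scope for the designated task

def invoke_tool(agent, tools, tool_name, reason, **kwargs):
    """Check scope, log the attempt with enough context to reconstruct it, then call."""
    allowed = tool_name in agent.allowed_tools
    AUDIT_LOG.append({
        "ts": datetime.now(timezone.utc).isoformat(),
        "agent": agent.agent_id,
        "user": agent.on_behalf_of,
        "tool": tool_name,
        "args": kwargs,
        "reason": reason,  # the agent's stated rationale for the call
        "outcome": "allowed" if allowed else "denied",
    })
    if not allowed:
        # Fail loudly so an exception workflow can pick this up,
        # instead of failing (or succeeding) silently.
        raise ScopeViolation(f"{agent.agent_id} is not scoped to call {tool_name!r}")
    return tools[tool_name](**kwargs)
```

The key design choice is to log the attempt first and then raise an exception on any out-of-scope call. This prevents both silent failure and silent success.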

These are not configuration choices left to individual development teams. They are architectural standards that must be codified and enforced across every AI deployment in the organisation. When a team proposes extending an agent's tool permissions beyond the approved scope, that change triggers a review workflow that surfaces the impacted architecture object, assesses downstream risk and routes the change to human approval before it is made.

Foundation 3: Observability Built for AI Behaviour

Application monitoring was built to answer: is the system up and is it performing as expected? AI observability must answer a harder question: is the AI system making decisions that are accurate, safe, within its boundaries and aligned with business intent? These are fundamentally different questions and the monitoring infrastructure that answers the first cannot answer the second without significant extension.

AI observability requires, at minimum: tracing of every model invocation with inputs, outputs and latency; evaluation metrics that measure output quality against defined acceptance criteria (not just uptime); cost telemetry that tracks spend per model call, per use case and per business unit; drift detection that identifies when model behaviour is changing over time; and incident response hooks that trigger when evaluation metrics fall below threshold or anomalous behaviour is detected.
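A tracing wrapper is the simplest way to see what "every model invocation" means in practice. The sketch below assumes a hypothetical model client that returns `(text, tokens_used)` and a hypothetical per-token price table. It records inputs, outputs, latency and cost for each call and aggregates spend by model, use case or business unit. Evaluation and drift are deliberately left out here.

```python
import time
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical per-1K-token prices; substitute your provider's actual rates.
PRICE_PER_1K_TOKENS = {"model-small": 0.0005, "model-large": 0.01}

@dataclass
class Trace:
    model: str
    use_case: str
    business_unit: str
    prompt: str
    output: str
    latency_s: float
    tokens: int
    cost_usd: float

TRACES = []  # stand-in for a tracing backend

def traced_call(model_fn, model, prompt, use_case, business_unit):
    """Wrap a model call and record inputs, outputs, latency and cost."""
    start = time.perf_counter()
    output, tokens = model_fn(prompt)  # assumption: client returns (text, tokens_used)
    latency = time.perf_counter() - start
    cost = tokens / 1000 * PRICE_PER_1K_TOKENS[model]
    TRACES.append(Trace(model, use_case, business_unit, prompt, output, latency, tokens, cost))
    return output

def cost_by(dimension):
    """Aggregate spend by 'model', 'use_case' or 'business_unit'."""
    totals = defaultdict(float)
    for t in TRACES:
        totals[getattr(t, dimension)] += t.cost_usd
    return dict(totals)
```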

Each of these is a codified architecture requirement. The observability standard for AI deployments is not a monitoring checklist. It is a structured object that every AI deployment must satisfy before going to production. When drift is detected, the system surfaces the relevant architecture objects, assesses whether the drift constitutes a boundary violation and routes to human review if it does.
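The drift and threshold logic can be sketched as follows. This is a deliberately simple mean-shift check against a pre-production baseline, not a statistical drift test. It assumes that evaluation scores between 0 and 1 are already produced for each invocation. The `EvalMonitor` class, its thresholds and the two escalation labels are hypothetical.

```python
from collections import deque
from statistics import mean

class EvalMonitor:
    """Compares a rolling window of evaluation scores with a baseline
    captured during baselining, before go-live."""

    def __init__(self, baseline_scores, acceptance_threshold, drift_tolerance, window=50):
        self.baseline = mean(baseline_scores)
        self.threshold = acceptance_threshold
        self.tolerance = drift_tolerance
        self.recent = deque(maxlen=window)  # keeps only the latest `window` scores

    def record(self, score):
        self.recent.append(score)
        return self.status()

    def status(self):
        if not self.recent:
            return "ok"
        current = mean(self.recent)
        if current < self.threshold:
            # Below acceptance criteria: fire the incident hook and escalate.
            return "incident"
        if abs(current - self.baseline) > self.tolerance:
            # Behaviour is shifting: route to human review to judge whether
            # it constitutes a boundary violation.
            return "drift_review"
        return "ok"
```

Suppose the baseline is 0.9, the acceptance threshold 0.7, the tolerance 0.1 and the window three scores. Feeding in scores of 0.9, 0.75, 0.6 and 0.5 then returns `ok`, `ok`, `drift_review` and `incident`.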

Foundation 4: An Enforceable Policy Layer

The three foundations above (data, identity and observability) are specific capability areas. The fourth is what connects them: a policy layer that defines the rules governing AI behaviour across the enterprise and enforces those rules at runtime.

A policy that says AI systems must not access personal data without explicit authorisation is meaningless if it exists only in a governance document. It becomes meaningful when it is codified as a machine-readable policy object enforced at the data access layer, so that an AI system physically cannot access out-of-scope personal data, rather than being trusted not to. The policy is the artefact. The enforcement is the architecture.
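Here is a minimal sketch of enforcement at the data access layer. It assumes a hypothetical `DataAccessGateway` that is the only route an AI system has to the records. The `PolicyObject` fields and the row-filtering behaviour are illustrative assumptions. In practice this is often expressed through a data platform's own controls, such as a database's row-level security.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class PolicyObject:
    policy_id: str
    protected_classification: str     # e.g. "personal"
    authorised_principals: frozenset  # identities with explicit authorisation

class DataAccessGateway:
    """Single access path to the records, so the policy is enforced here
    rather than trusted to the calling AI system."""

    def __init__(self, records, policies):
        self._records = records   # list of dicts, each with a "classification"
        self._policies = policies

    def _permitted(self, principal, record):
        # Blocked if any policy protects this classification and the
        # principal is not explicitly authorised under that policy.
        return not any(
            record["classification"] == p.protected_classification
            and principal not in p.authorised_principals
            for p in self._policies
        )

    def query(self, principal, predicate=lambda r: True):
        """Return matching records that the principal is authorised to see."""
        return [r for r in self._records if predicate(r) and self._permitted(principal, r)]
```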

When an AI system or delivery team wants to operate outside a policy boundary, that request triggers a structured workflow that surfaces the specific policy object being challenged, assesses the risk of the proposed change and routes the decision to the appropriate authority.
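The routing step can be sketched as a small function. It assumes a hypothetical 3×3 impact-likelihood scale and a hypothetical decision-rights table mapping risk scores to approving authorities. Your own risk framework and delegations would replace both.

```python
from dataclasses import dataclass

# Hypothetical decision-rights table: (max risk score, approving authority).
DECISION_RIGHTS = [
    (2, "architecture lead"),
    (4, "AI governance board"),
    (9, "executive risk committee"),
]

@dataclass
class ExceptionRequest:
    requester: str
    policy_id: str      # the specific policy object being challenged
    justification: str
    impact: int         # 1 (minor) to 3 (severe), illustrative scale
    likelihood: int     # 1 (rare) to 3 (likely), illustrative scale

def route_exception(req: ExceptionRequest) -> dict:
    """Surface the challenged policy, score the risk and pick the approving authority."""
    if not (1 <= req.impact <= 3 and 1 <= req.likelihood <= 3):
        raise ValueError("impact and likelihood must be between 1 and 3")
    score = req.impact * req.likelihood
    authority = next(name for limit, name in DECISION_RIGHTS if score <= limit)
    return {
        "policy_object": req.policy_id,
        "requester": req.requester,
        "risk_score": score,
        "route_to": authority,
        "status": "awaiting_decision",  # nothing changes until the authority decides
    }
```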

The AI-Ready Architecture Checklist

Before any AI deployment progresses to production, the following should be confirmed as codified, not just documented:

- Data: All data sources used by the AI system are registered, classified and assessed against the AI data-readiness standard for their domain. Lineage is traceable. Permissions are explicitly defined for AI access.

- Identity: The AI agent or model has a defined identity, its tool permissions are explicitly scoped to least privilege and its tool invocations are logged with full traceability.

- Observability: Evaluation metrics are defined and baselining is complete. Tracing, cost telemetry and drift detection are operational before deployment, not after.

- Policy: All applicable policy objects are identified, confirmed as current and enforced at runtime, not merely referenced in a compliance document.

- Change governance: If the AI system deviates from its approved boundaries, the workflow is known, the responsible owner is identified and the process for human-in-the-loop review is operational.
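The checklist lends itself to a simple production gate. In this sketch the `ReadinessEvidence` fields are hypothetical and stand in for the results of the underlying checks. The gate lets a deployment through only when every item is evidenced, and otherwise names what is missing.

```python
from dataclasses import dataclass, fields

@dataclass
class ReadinessEvidence:
    # Each flag is assumed to be set by an automated check, not self-attested.
    data_registered_and_assessed: bool
    agent_identity_scoped_and_logged: bool
    observability_operational: bool
    policies_enforced_at_runtime: bool
    change_workflow_and_owner_defined: bool

def production_gate(evidence: ReadinessEvidence) -> tuple:
    """Return (approved, missing_items); approval requires every item."""
    missing = [f.name for f in fields(evidence) if not getattr(evidence, f.name)]
    return (not missing, missing)
```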

The Trusenta Perspective

The organisations we work with that have the strongest AI outcomes share one characteristic: they built the foundations before they built the features. They did not start with the most impressive use case. They started with the data classification, the agent identity standard and the observability baseline. Those foundations felt slow at first. They became the reason subsequent deployments were fast, safe and auditable.

In an Australian market context, AI-ready architecture is increasingly a compliance prerequisite. Organisations operating under APRA CPS 230, the Privacy Act or sector-specific AI guidance cannot rely on post-hoc governance of AI behaviour. The data, identity and policy foundations must exist before the AI is deployed and they must be codified well enough to be evidenced in an audit.

Key Takeaways

- Most AI failures are not model failures. They are data, identity and observability failures that could have been addressed before deployment.

- AI-ready data requires quality, lineage, permissions and metadata to be codified as structured architecture objects, not documented as best practices.

- Identity and authorisation patterns for AI agents must be explicitly defined at the agent level. Inherited application-level controls are insufficient for autonomous, tool-using systems.

- AI observability requires evaluation metrics, drift detection and cost telemetry in addition to standard uptime monitoring, and these requirements should be codified at intake, not retrofitted after deployment.

- An enforceable policy layer connects the foundations: rules about data access, tool permissions and output constraints must be enforced at runtime, not referenced in governance documents.

Coming Up in Part 3: Enterprise AI Platform Architecture — The Reference Architecture That Actually Scales

In Part 3, Shane Coetser presents a vendor-neutral reference architecture for enterprise AI platforms. He examines the six platform layers every organisation needs, the build-versus-buy decisions that shape how quickly those layers can be established, and how the governance layer sits within the platform stack to enforce AI-readiness standards at speed.

How Trusenta Can Help

Enterprise Architecture in the AI Era addresses exactly the gap this post describes. If your EA function has documented AI data principles and access policies but has not yet codified them as enforceable, machine-readable standards, this advisory engagement provides the methodology to make that transition, covering the data, identity, observability and policy layers as a coherent architecture programme.

AI Governance provides the structured intake and assessment platform that turns AI-readiness checks into operational reality. Rather than reviewing readiness through manual checklists, it provides a codified assessment workflow that evaluates each AI use case against your architecture standards, flagging gaps in data readiness, identity design or observability before deployment is approved.

Author

Mark brings a rare blend of C-suite leadership and hands-on consulting experience to Trusenta. As a former SVP of Services, SVP of Business Operations, Managing Director and CIO, he has a breadth of experience that informs his specialty: guiding organisations through AI strategy, governance and adoption, and bridging ambition with practical execution. His focus is on helping clients embed AI responsibly, at scale and in service of real business outcomes.

https://www.linkedin.com/in/consult-mmiller/