Part 8: AI Governance at Pace - 10 Things Enterprises Must Get Right

AI adoption inside most enterprises is now outpacing the governance teams responsible for overseeing it. Manual review processes that worked for ten use cases will not work for a hundred. This post explores how AI can be used to augment governance itself, from automated pre-screening and suggested risk scores to auto-tagging, while keeping human judgement at the centre of every significant decision.

March 2, 2026

Use AI to Augment AI Governance

Part 8 of 10 — AI Governance at Pace

If you are governing AI with purely manual effort, you will always lag behind AI adoption.

This is not a future problem. For most enterprises, it is already happening. 80% of enterprises have 50 or more generative AI use cases in development, according to the 2025 AI Governance Benchmark Report, yet most have only a handful in production. The reason, cited by 44% of governance leaders, is that the governance process itself is too slow. A further 56% point to disconnected systems as the primary blocker to scaling responsibly.

The irony is sharp. AI is being adopted precisely because it automates effort and accelerates decisions. Yet the function responsible for governing AI is still relying on manual intake forms, spreadsheet registers, email-based approvals and individual expertise spread across too few people. At some point, and for many organisations that point has already arrived, the gap between AI deployment speed and governance throughput becomes a structural problem. Not just a resourcing one.

The answer is not to hire your way out of it. It is to apply AI to the governance function itself.

Why AI Assisted Governance Is No Longer Optional at Scale

There is a straightforward capacity argument here. If a governance team can thoroughly assess ten AI use cases per quarter using manual processes, and the business is submitting forty, something will give. Either assessments become superficial, backlogs grow so long that teams bypass governance entirely, or risk decisions get made informally without documentation or accountability.
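
The capacity argument can be made concrete with a few lines of arithmetic. The sketch below uses the illustrative figures from the paragraph above (ten assessments per quarter against forty submissions). The function name and the assumption that both rates stay constant are ours, not part of any benchmark.

```python
def backlog_after(quarters: int, submitted_per_quarter: int, assessed_per_quarter: int) -> int:
    """Unassessed use cases after a number of quarters, assuming constant rates."""
    backlog = 0
    for _ in range(quarters):
        # New submissions arrive, then the team clears as many as it can.
        backlog = max(0, backlog + submitted_per_quarter - assessed_per_quarter)
    return backlog


for q in (1, 2, 4):
    print(q, backlog_after(q, submitted_per_quarter=40, assessed_per_quarter=10))
```

Under these assumptions the backlog grows by 30 use cases every quarter and reaches 120 after a year, which is three years of work at the team's current rate.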

All three outcomes are worse than a well-designed, AI-supported process.

The 2025 AI Governance Benchmark Report found that teams using manual governance processes spend 56% of their time on governance-related administration (e.g. data collection, formatting, chasing information and populating registers) rather than on the analytical and judgement work those processes are supposed to support. That is a significant structural inefficiency. And it compounds as use case volumes grow.

IBM's internal governance programme offers a useful reference point. IBM governs over 1,000 AI models and achieved a 58% reduction in data clearance processing time by investing in governance automation, without reducing oversight quality. Governance didn't slow their AI programme down. It accelerated it by removing uncertainty and reducing risk-driven delays.

The question is not whether to automate parts of AI governance. At scale, it is not optional. The question is which parts to automate and how to do it without creating a false sense of control.

Examples of AI Augmented Governance in Practice

The most effective applications of AI in governance are not about removing humans from decisions. They are about removing humans from the administrative work that precedes decisions, so that expert time is spent on the judgement calls that actually need it.

Automated pre-screening of use cases

When a business unit submits a new AI use case for governance review, a significant portion of the intake process is procedural: is the submission complete? Does it include the required fields? Is there enough information to begin a risk assessment? Manual pre-screening of incomplete or poorly described submissions wastes reviewer time before any substantive work begins.

AI-assisted pre-screening can check submissions against defined completeness criteria, flag missing information, identify duplicate or near-duplicate use cases already in the register and categorise submissions by deployment context, all before a human reviewer sees the record. This does not assess the risk. It ensures that when the assessor opens a submission, it is ready to be assessed.
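
As a rough illustration of what such a pre-screen might look like, the sketch below checks completeness, flags near-duplicates by comparing description text and records the declared deployment context. The field names, the 0.85 similarity threshold and the use of simple string similarity are all assumptions made for the example; a real platform would define its own intake schema and would probably use a stronger similarity method.

```python
from dataclasses import dataclass, field
from difflib import SequenceMatcher
from typing import Optional

# Hypothetical intake fields; a real intake form would define its own.
REQUIRED_FIELDS = ("title", "description", "owner", "data_sources", "deployment_context")


@dataclass
class PreScreenResult:
    missing_fields: list = field(default_factory=list)
    possible_duplicates: list = field(default_factory=list)
    deployment_context: Optional[str] = None

    @property
    def ready_for_assessment(self) -> bool:
        # "Ready" only means complete and not an obvious duplicate.
        # It says nothing about the level of risk.
        return not self.missing_fields and not self.possible_duplicates


def pre_screen(submission: dict, register: list, duplicate_threshold: float = 0.85) -> PreScreenResult:
    result = PreScreenResult()
    # Completeness: every required field must be present and non-empty.
    result.missing_fields = [f for f in REQUIRED_FIELDS if not submission.get(f)]

    # Near-duplicates: compare the description with each register entry.
    description = (submission.get("description") or "").lower()
    for existing in register:
        similarity = SequenceMatcher(None, description, existing["description"].lower()).ratio()
        if description and similarity >= duplicate_threshold:
            result.possible_duplicates.append(existing["title"])

    # Categorisation: carry the declared context forward for routing.
    result.deployment_context = submission.get("deployment_context") or None
    return result
```

The result is a checklist for the reviewer, not a verdict: a submission flagged as a possible duplicate still needs a person to confirm whether it really is one.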

Suggested risk scores based on patterns

Risk scoring of AI use cases involves evaluating dimensions such as data sensitivity, decision impact, regulatory exposure and deployment scope. For an experienced assessor working with a mature framework, many of these assessments follow recognisable patterns. An AI system used in credit decisioning will score differently on decision impact than a content generation tool used internally. A system processing health information will score differently on data sensitivity than one using publicly available data.

AI can surface suggested risk scores based on patterns from previously assessed use cases with similar characteristics. The assessor reviews, adjusts and approves. The suggestion does not replace their judgement, but it does reduce the time spent on assessments where the pattern is clear, freeing attention for the genuinely novel or ambiguous cases that require more careful analysis.
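
One simple way to picture pattern-based suggestion is a nearest-neighbour lookup over previously assessed use cases. The sketch below is an assumption-laden illustration rather than a description of any particular product: characteristics are modelled as sets of labels, similarity is Jaccard overlap, and the suggestion is the median score of sufficiently similar prior cases. When too few similar cases exist, it declines to suggest anything, which leaves novel cases to the assessor.

```python
from statistics import median


def jaccard(a: set, b: set) -> float:
    """Overlap between two sets of characteristics, from 0.0 to 1.0."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def suggest_score(characteristics: set, assessed: list,
                  min_similarity: float = 0.5, min_matches: int = 2):
    """Return (suggested_score, supporting_titles), or (None, []) if no clear pattern."""
    matches = [
        case for case in assessed
        if jaccard(characteristics, case["characteristics"]) >= min_similarity
    ]
    if len(matches) < min_matches:
        # Not enough precedent: treat as novel and leave it to the assessor.
        return None, []
    score = median(case["score"] for case in matches)
    return score, [case["title"] for case in matches]
```

Returning the supporting cases alongside the score matters as much as the score itself, because it gives the assessor something to interrogate.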

Auto-tagging of risk types and framework requirements

A well-governed AI register maps each use case to the relevant risk categories and regulatory framework requirements that apply to it. Doing this manually for every submission (e.g. tagging applicable EU AI Act provisions, relevant ISO 42001 controls, NIST AI RMF functions and Australian regulatory considerations) is time-consuming and prone to inconsistency.

AI can analyse use case descriptions and automatically apply relevant tags: risk types (bias, hallucination, operational, privacy), applicable regulatory frameworks and suggested control categories. This creates consistency across the register, reduces the cognitive load on assessors handling high volumes and ensures that framework coverage is not dependent on the individual reviewer's knowledge of every applicable standard.
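
The shape of an auto-tagging output can be sketched with plain keyword rules. This is a deliberately simplified stand-in: the keyword lists below are invented for illustration, and a real system would more likely use a trained classifier or a language model. The risk types are the ones named above, and framework tags could be suggested in the same way. The point of the sketch is that each tag carries its evidence and is marked as a suggestion.

```python
import re

# Hypothetical keyword rules, for illustration only.
RISK_KEYWORDS = {
    "privacy": ("personal data", "health record", "customer data"),
    "bias": ("credit", "hiring", "eligibility"),
    "hallucination": ("generate", "summarise", "chatbot"),
    "operational": ("automate", "real-time", "production system"),
}


def suggest_tags(description: str) -> list:
    """Suggest risk-type tags for a use case description, with the matching evidence."""
    text = description.lower()
    suggestions = []
    for risk_type, keywords in RISK_KEYWORDS.items():
        # Match at a word boundary so "generate" also catches "generates".
        hits = [k for k in keywords if re.search(r"\b" + re.escape(k), text)]
        if hits:
            suggestions.append({"tag": risk_type, "evidence": hits, "status": "suggested"})
    return suggestions
```

Every tag starts life with the status `suggested`, so nothing reaches the register as definitive until a reviewer confirms it.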

Human in the Loop as a Design Principle

The risk of AI augmented governance, if not designed carefully, is automation that produces a false sense of oversight. Suggested risk scores accepted without scrutiny. Auto-tags treated as definitive rather than indicative. Pre-screening that clears submissions that should have prompted further questioning.

Human-in-the-loop is the design principle that prevents this. It means AI assists and humans decide. The distinction between those two roles needs to be explicit in the system design, not just assumed.

The International Association of Privacy Professionals makes a useful observation here: assigning a human to act as the oversight point is not by itself sufficient. The goals of that oversight need to be defined in advance. Is the human there to verify completeness? To apply domain judgement? To catch bias in the suggested score? To provide regulatory interpretation? Unless the specific purpose of human involvement is defined at each decision point, the risk is that human review becomes rubber-stamping: the reviewer is present in the process but not genuinely active in it.

Effective human-in-the-loop design for AI governance means:

- Defining where AI assists and where humans decide. Pre-screening and initial tagging are good candidates for AI assistance. Final risk classification, approval or rejection of high-risk use cases and escalation decisions are not.

- Making AI suggestions visible and challengeable. Assessors should always see what the AI suggested and why, with the ability to override and record their reasoning. Unexplained suggestions that cannot be interrogated undermine the audit trail.

- Designing for exception, not just throughput. The value of AI assistance is most visible when it surfaces an anomaly, such as a use case that does not fit prior patterns or a risk score that diverges from the suggested baseline. The system should be designed to surface these for human attention, not bury them.
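
The three principles above can be expressed as constraints in the data model itself. The following is a minimal sketch under our own assumptions (the class names, the integer score and the divergence threshold of 2 are all hypothetical): a decision always has a named human owner, an override cannot be saved without the assessor's reasoning, and large gaps between suggested and final scores are surfaced for attention.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Suggestion:
    score: int
    rationale: str  # why the AI suggested this score, shown to the assessor


@dataclass(frozen=True)
class Decision:
    use_case: str
    suggestion: Suggestion
    final_score: int
    decided_by: str
    override_reason: Optional[str] = None

    @property
    def overridden(self) -> bool:
        return self.final_score != self.suggestion.score


def decide(use_case: str, suggestion: Suggestion, final_score: int,
           decided_by: str, override_reason: Optional[str] = None) -> Decision:
    if not decided_by:
        raise ValueError("a named human must own the decision")
    if final_score != suggestion.score and not override_reason:
        raise ValueError("an override must record the assessor's reasoning")
    return Decision(use_case, suggestion, final_score, decided_by, override_reason)


def needs_attention(decisions: list, divergence: int = 2) -> list:
    """Surface decisions where the human and the suggestion diverged sharply."""
    return [d for d in decisions if abs(d.final_score - d.suggestion.score) >= divergence]
```

Enforcing these rules in code rather than in policy means the system cannot silently accept a suggestion on a human's behalf.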

A 2024 MIT Sloan Management Review study found that enterprises implementing human-in-the-loop controls reported 40% faster AI adoption rates than those pursuing full automation. Keeping humans meaningfully involved does not slow governance down. It makes the process defensible and sustainable at pace.

How AI Support Frees Experts to Focus on Judgement Not Admin

There is a talent dimension to this that does not get enough attention. The people best qualified to govern AI, those with the combination of technical literacy, regulatory knowledge and contextual business judgement the role demands, are not in abundant supply. Spending their time on data entry, formatting and chasing incomplete submissions is not just inefficient. It is a misallocation of scarce capability.

When governance platforms handle the administrative and pattern-matching work, experts can direct their attention to the decisions that genuinely require it: the ambiguous use cases where the risk picture is unclear, the novel applications where there is no prior pattern to draw on, the escalations where business value and governance concern are in genuine tension.

This is the core value proposition of AI augmented governance. Not fewer people involved in governance. More meaningful involvement from the right people, at the right points, on the decisions that actually matter.

Forrester Research estimates that spending on AI governance tools will exceed $15 billion by 2030, representing 7% of total enterprise AI software expenditure. The investment case is not just compliance. It is operational: organisations with mature, automated governance deploy AI faster, with higher confidence and lower remediation costs than those still running governance on spreadsheets and goodwill.

How Do You Know Which Parts of Governance to Automate First?

Not every governance activity is a good candidate for AI assistance. The right starting points share a few characteristics: they are high-volume and repetitive, they follow recognisable patterns and their outputs are reviewed by a human before being acted upon.

Use case intake and pre-screening almost always qualify. Initial risk scoring for straightforward use case categories often qualifies. Framework tagging and control suggestion typically qualify. Final risk classification, approval decisions and escalations do not.

A useful diagnostic is to map your governance workflow and mark each activity against two dimensions: how much of the activity is administrative versus analytical, and how much human expertise is required to perform it well. Activities that are predominantly administrative and do not require deep expertise are automation candidates. Activities that require contextual judgement, regulatory interpretation or stakeholder engagement should stay with people who are supported, not replaced, by AI.
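
The diagnostic can be run as a simple scoring exercise. In the sketch below, each activity gets two team estimates between 0 and 1: the share of the work that is administrative and the level of expertise it requires. The thresholds and the example scores are placeholders we have invented; the value lies in making the team's own estimates explicit and comparable.

```python
def classify_activity(admin_share: float, expertise_required: float,
                      admin_threshold: float = 0.6, expertise_threshold: float = 0.5) -> str:
    """Classify a governance activity from two estimates in the range 0 to 1."""
    if admin_share >= admin_threshold and expertise_required <= expertise_threshold:
        return "automation candidate (with human review)"
    return "human-led, AI-supported"


# Illustrative estimates only: (admin_share, expertise_required).
workflow = {
    "intake pre-screening": (0.9, 0.2),
    "framework tagging": (0.7, 0.4),
    "final risk classification": (0.2, 0.9),
    "escalation decisions": (0.1, 0.9),
}

for activity, (admin, expertise) in workflow.items():
    print(f"{activity}: {classify_activity(admin, expertise)}")
```

With these example estimates, intake and tagging fall on the automation side while classification and escalation stay human-led, which matches the split described above.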

For Australian organisations operating under APRA's CPS 230 and preparing for the regulatory expectations that follow Australia's continuing AI policy development, the discipline of documented, traceable governance decisions will become increasingly important. AI augmented governance creates better audit trails than manual processes because every suggested score, every automated tag and every human override is logged. That traceability is not a byproduct of automation. It is one of its principal governance benefits.
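
To show what "every suggestion, tag and override is logged" could mean in practice, here is a minimal append-only audit log. The event types, field names and JSON Lines export are assumptions made for the sketch, not a regulatory requirement or a description of any specific platform.

```python
import json
from datetime import datetime, timezone

# Hypothetical event vocabulary covering the three kinds of record named above.
EVENT_TYPES = {"suggested_score", "auto_tag", "human_override"}


class AuditLog:
    """Append-only record of governance events for each use case."""

    def __init__(self) -> None:
        self._events = []

    def record(self, use_case: str, event_type: str, actor: str, detail: dict) -> dict:
        if event_type not in EVENT_TYPES:
            raise ValueError(f"unknown event type: {event_type}")
        event = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "use_case": use_case,
            "event_type": event_type,
            "actor": actor,  # a system name or a named person
            "detail": detail,
        }
        self._events.append(event)
        return event

    def history(self, use_case: str) -> list:
        """All events for one use case, in the order they were recorded."""
        return [e for e in self._events if e["use_case"] == use_case]

    def to_jsonl(self) -> str:
        """Export one JSON object per line for storage or review."""
        return "\n".join(json.dumps(e, sort_keys=True) for e in self._events)
```

Because overrides are recorded as events in their own right, an auditor can reconstruct not only what was decided but also where a human disagreed with the system and why.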

The Trusenta Take

We have seen governance programmes stall not because organisations lacked intent, but because the process didn't scale. A framework that works well for five use cases per quarter becomes a bottleneck at fifty. When governance becomes the constraint on responsible AI deployment, something has gone wrong. The solution is not to weaken the governance; it is to make the process smarter.

The organisations that govern AI well at scale are not the ones with the largest governance teams. They are the ones that have designed their governance processes to do the administrative and pattern-matching work automatically, so that expert time is reserved for the decisions that genuinely require it. AI augments the function. Humans own the outcomes.

Key Takeaways

- Manual governance processes do not scale with AI adoption. Once use case volumes rise, administrative overhead crowds out analytical quality and the process becomes a bottleneck or a formality.

- AI assisted governance is not about removing humans from oversight. It is about removing humans from the administrative work that precedes oversight, so expert time is spent on judgement, not data entry.

- Automated pre-screening, suggested risk scoring and auto-tagging are the highest-value early automation targets: high volume, pattern-based and always subject to human review before being acted upon.

- Human in the loop must be a deliberate design principle, not an assumption. Define where AI assists and where humans decide, and ensure the system makes AI suggestions visible, challengeable and auditable.

- Better governance automation produces better audit trails. Every suggestion, tag and override is logged, creating traceability that manual processes rarely achieve consistently.

Coming Up in Part 9

In Part 9, we examine how to measure whether your AI governance programme is actually working. Governance that cannot demonstrate its own effectiveness is difficult to defend to boards, regulators and audit functions, and hard to improve. We explore the metrics and indicators that distinguish active, effective governance from a well-documented programme that exists on paper, and what a meaningful AI governance dashboard looks like in practice.

How Trusenta Can Help

AI Governance — Trusenta's AI Governance product is built specifically for the scale challenge this post describes. It structures use case intake, applies AI-assisted risk classification and generates suggested assessments that reviewers confirm, adjust or override, keeping humans in the decision seat while removing the administrative burden that slows manual programmes down. If your governance process is becoming a bottleneck rather than an enabler, this is where to start.

Smart Voice — Stakeholder consultation is one of the most time-intensive parts of governance at scale. Trusenta's Smart Voice product uses AI-moderated interviews to conduct structured governance consultations across large stakeholder groups, automatically synthesising themes and insights. It applies the same logic as AI augmented governance to the human engagement work: consistent questioning, automated analysis and expert review of synthesised findings rather than raw transcripts.

AI Governance Maturity Uplift — If your organisation has foundational governance in place but is experiencing the throughput and consistency problems this post describes, Trusenta's AI Governance Maturity Uplift service is designed to close that gap. It redesigns your governance workflows for operational scale, configures TRUSENTA.IO to handle intake, assessment and monitoring at volume and equips your team to govern AI at pace without sacrificing oversight quality.

Conclusion

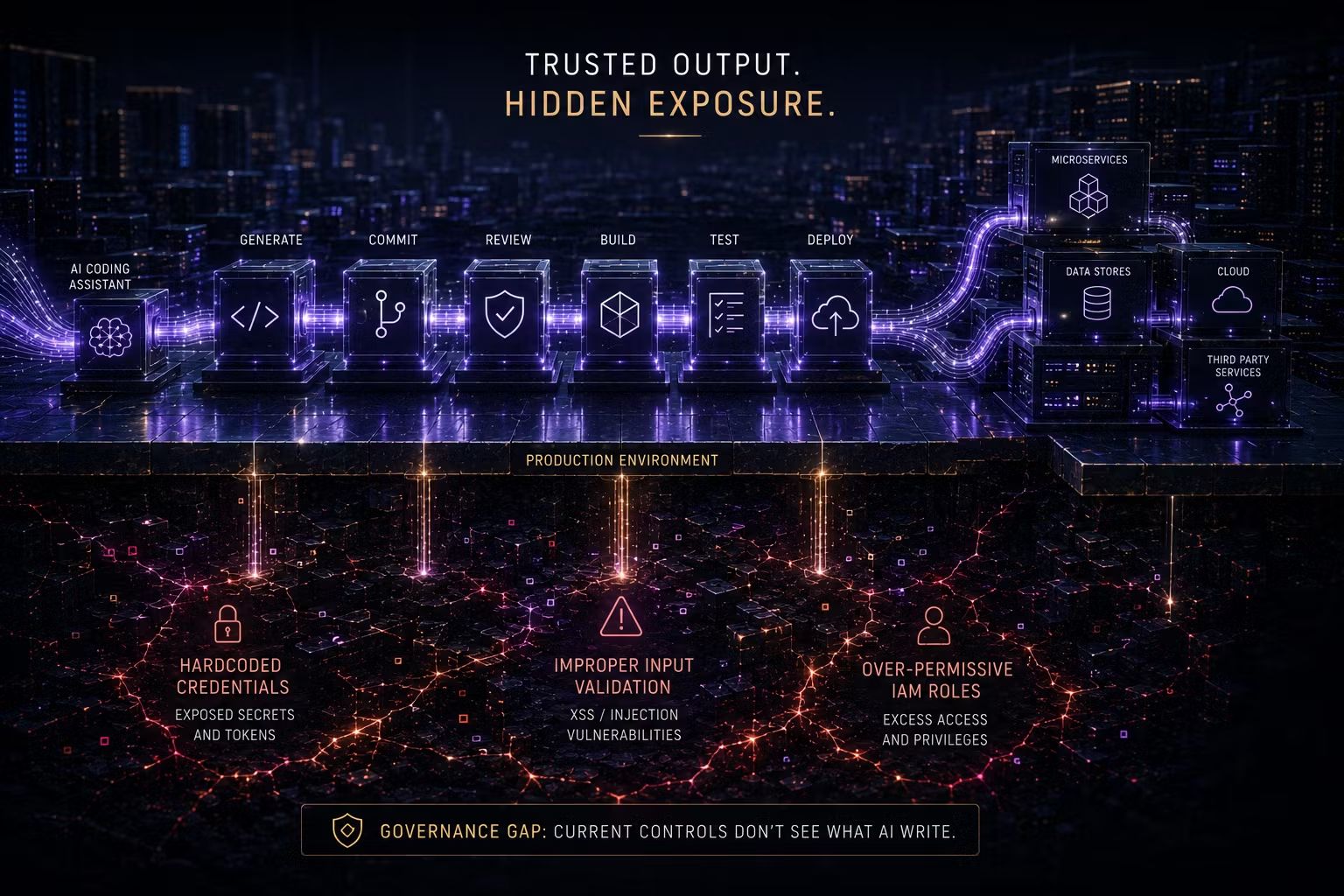

The governance gap is real and it is widening. AI adoption inside enterprises is accelerating; manual governance capacity is not. The organisations that close this gap will not do it by hiring faster than adoption grows. They will do it by applying the same logic to governance that they apply to everything else: automate the routine, augment the complex and reserve human expertise for the decisions that genuinely require it. AI that governs AI, with humans owning the outcomes, is not a theoretical possibility. For the organisations that build it deliberately, it is becoming a competitive advantage.

Where could AI remove friction in your current governance process without sacrificing control?

#AIforGovernance #AugmentedGovernance #AIinRisk #AIWorkflow

Author

Mark brings a rare blend of C-suite leadership and hands-on consulting experience to Trusenta. As a former SVP of Services, SVP of Business Operations, Managing Director and CIO, he brings a breadth of experience to his specialty: guiding organisations through AI strategy, governance and adoption, and bridging ambition with practical execution. His focus is on helping clients embed AI responsibly, at scale and in service of real business outcomes.

https://www.linkedin.com/in/consult-mmiller/