AI Governance

Part 6: AI Governance at Pace - 10 Things Enterprises Must Get Right

AI governance reviews are breaking down at the handoffs between teams. Every extra email adds weeks to approval cycles and introduces unnecessary risk. In Part 6 of our series, we explore how enterprises can establish centralised intake, automated routing and clear status definitions to transform fragmented review processes into a genuine competitive advantage.

February 16, 2026

6 min read

Streamline Cross-Functional Reviews: Where AI Governance Goes Wrong

There is a moment that every governance professional recognises. An AI initiative has been scoped, a business case has been built and internal champions are ready to move. Then the emails start: one to Legal, another to Privacy, a follow-up to Security, a separate thread to Risk. Somewhere in the middle of it all, Technology is waiting for input it never received.

This is where AI governance fails: not in the policies, not in the principles and not in the intent. It fails at the handoffs.

The Real Cost of Fragmented AI Reviews

The typical enterprise AI review process is a study in well-meaning dysfunction. Multiple specialist teams (e.g. Legal, Privacy, Security, Risk and Technology) each apply their own lens to an AI initiative, but they do so in isolation. Each team receives a different version of the documentation, assesses risks according to its own criteria and communicates findings through a separate email chain.

The consequences are entirely predictable. Cycle times blow out from weeks to months. Business units grow frustrated and begin finding ways around the process. Shadow AI proliferates precisely because the formal path is too slow and too opaque. Worse still, the quality of risk outcomes does not improve in proportion to the time invested. When teams work in isolation, they miss the interconnected risks that only become visible when Legal, Privacy, Security and Technology consider an initiative together.

Every extra email in this process is not just a minor administrative inconvenience. It is a compounding delay and a compounding risk. A question that sits unanswered in an inbox for three days means another three days before a follow-up can be issued, another three days before a response arrives and another three days before the next team in the chain can begin its work. The mathematics of sequential, email-driven reviews are punishing.
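To make that arithmetic concrete, here is a minimal sketch. Every input is an illustrative assumption rather than measured data: five teams, two question-and-answer round trips per team, and the three-day inbox wait used in the example above on each leg. The parallel case further assumes every team experiences the same delay.

```python
# Illustrative model of inbox waiting time in an email-driven review.
# All inputs are assumptions chosen for illustration, not measured data.


def sequential_wait_days(teams: int, round_trips_per_team: int, wait_days_per_leg: int) -> int:
    """Total days spent waiting when teams review one after another.

    Each round trip has two legs (question out, answer back), and each leg
    sits in an inbox for `wait_days_per_leg` days before anyone acts on it.
    """
    legs_per_team = 2 * round_trips_per_team
    return teams * legs_per_team * wait_days_per_leg


def parallel_wait_days(round_trips_per_team: int, wait_days_per_leg: int) -> int:
    """Waiting time when all teams review at once with identical delays.

    Because the teams wait concurrently, only one team's delay is counted.
    """
    return 2 * round_trips_per_team * wait_days_per_leg


if __name__ == "__main__":
    print(sequential_wait_days(teams=5, round_trips_per_team=2, wait_days_per_leg=3))  # 60
    print(parallel_wait_days(round_trips_per_team=2, wait_days_per_leg=3))  # 12
```

Under these assumptions, the sequential path accumulates 60 days of pure waiting against 12 when the same questions are handled concurrently. The difference comes entirely from the handoffs, not from the review work itself.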

Why AI Assessments Demand a Different Approach

Traditional enterprise review processes were designed for a world where new technology was introduced slowly and in discrete, well-understood categories. AI does not conform to those conditions. AI initiatives can span multiple risk domains simultaneously, involve novel data flows that Privacy and Security have never encountered and produce decisions that Legal must assess against regulations that may not yet have settled.

This complexity means that the sequential, siloed review model is not merely inefficient; it is structurally inadequate. An AI use case involving a generative model with customer data access, automated decision outputs and third-party vendor involvement cannot be adequately assessed when each reviewing team sees only its own slice of the picture.

What enterprises need is not a faster version of the old process. They need a fundamentally different one built around centralised intake, automated routing and collaborative assessment.

Centralised Intake: The Foundation of Effective Review

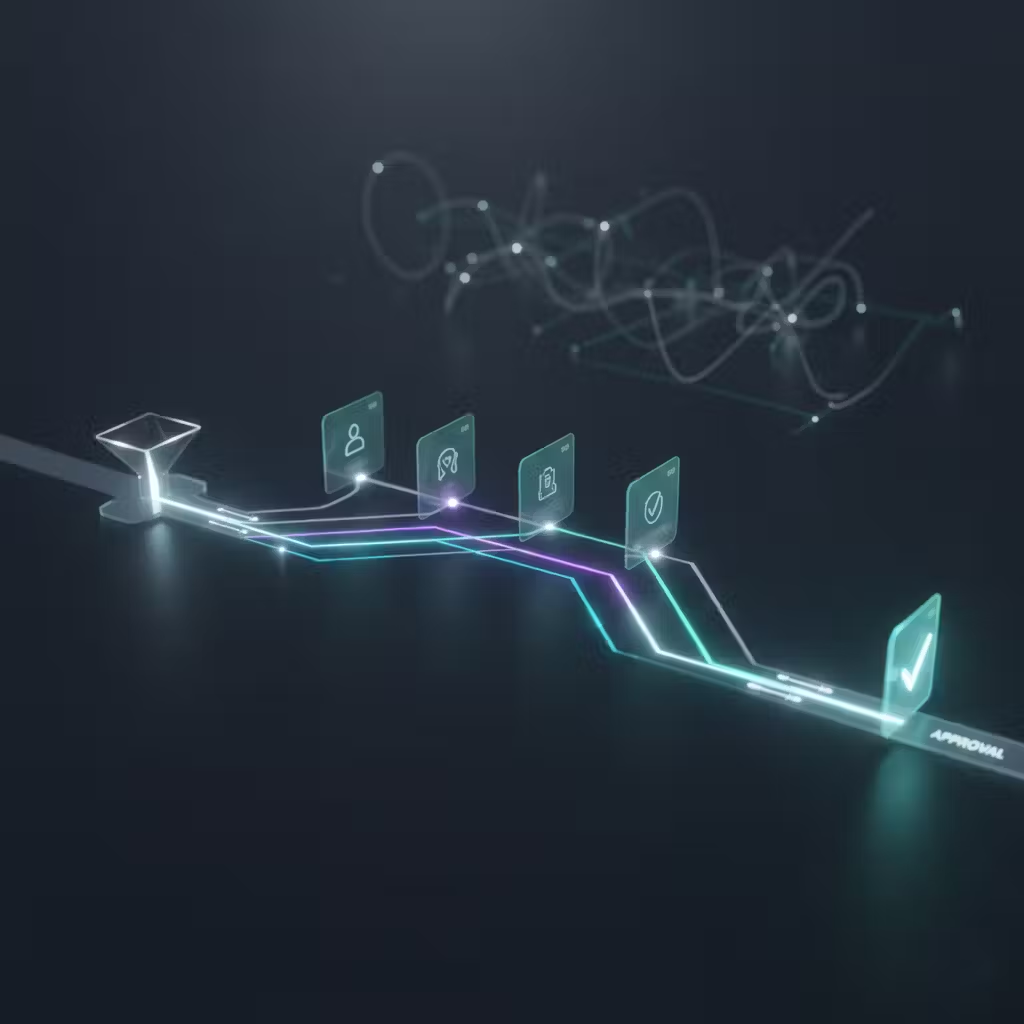

The first and most important structural change is replacing ad hoc submission with a structured, centralised intake process. When an AI initiative enters the review process, it should do so once through a single, standardised mechanism that captures all of the information each reviewing team will need.

A well-designed intake form does more than gather basic details. It elicits the information required for risk classification: the nature of the AI system, the data it will access, the decisions it will support or automate, the third-party components involved and the business units and customer populations that will be affected. This upfront investment in structured information collection pays dividends across every subsequent step of the review.
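The information listed above could be captured in a structure along the following lines. This is a sketch only: the class name, the field names and the choice of which fields count as required are all hypothetical, and a real intake form would be shaped by each organisation's own risk classification scheme.

```python
from dataclasses import dataclass, field


@dataclass
class IntakeSubmission:
    """One AI initiative as captured at intake. All field names are hypothetical."""

    name: str
    system_description: str  # the nature of the AI system
    data_categories: list[str] = field(default_factory=list)  # data it will access
    decisions_supported: list[str] = field(default_factory=list)  # decisions supported or automated
    third_party_components: list[str] = field(default_factory=list)  # may legitimately be empty
    affected_business_units: list[str] = field(default_factory=list)
    affected_customer_groups: list[str] = field(default_factory=list)  # may legitimately be empty

    def missing_fields(self) -> list[str]:
        """Return the names of required fields that were left empty.

        Which fields are required is an assumption made for this sketch.
        """
        required = (
            "name",
            "system_description",
            "data_categories",
            "decisions_supported",
            "affected_business_units",
        )
        return [f for f in required if not getattr(self, f)]
```

A submission would be accepted into review only when `missing_fields()` returns an empty list, so incomplete submissions are caught at the door rather than a week into the review.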

Centralised intake also eliminates one of the most common sources of delay: teams having to request additional information because the submission they received was incomplete. When intake is standardised, reviewing teams can begin their work immediately rather than spending the first week of a review cycle chasing documentation.

Automated Routing: Getting the Right Eyes on Every Initiative

Once an AI initiative has been submitted through centralised intake, the next challenge is ensuring it reaches the right reviewing teams without manual coordination overhead. This is where automated routing transforms the process.

Routing logic can be defined based on the characteristics of the initiative. An AI system that accesses personally identifiable information is automatically routed to Privacy. One that involves a third-party model or vendor is automatically routed to both Security and Technology. A system that will produce or inform customer-facing decisions is automatically routed to Legal and Risk. Reviews that require input from all domains are flagged for coordinated assessment rather than sequential handoffs.
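The three routing rules described above can be expressed in a few lines. The sketch below is illustrative: the `Initiative` fields and function names are hypothetical, and treating "all five teams engaged" as the trigger for coordinated assessment is one possible reading of the rule, not a prescribed one.

```python
from dataclasses import dataclass

# The five reviewing teams named in this article.
ALL_TEAMS = frozenset({"Legal", "Privacy", "Security", "Risk", "Technology"})


@dataclass(frozen=True)
class Initiative:
    """Routing-relevant characteristics of an initiative (hypothetical field names)."""

    uses_pii: bool
    uses_third_party: bool
    customer_facing_decisions: bool


def route(initiative: Initiative) -> set[str]:
    """Return the set of teams that should review the initiative."""
    teams: set[str] = set()
    if initiative.uses_pii:
        teams.add("Privacy")
    if initiative.uses_third_party:
        teams.update({"Security", "Technology"})
    if initiative.customer_facing_decisions:
        teams.update({"Legal", "Risk"})
    return teams


def needs_coordinated_assessment(teams: set[str]) -> bool:
    """Flag reviews that involve every team (an assumed trigger for this sketch)."""
    return teams == ALL_TEAMS


if __name__ == "__main__":
    chatbot = Initiative(uses_pii=True, uses_third_party=True, customer_facing_decisions=True)
    assigned = route(chatbot)
    print(sorted(assigned))
    print(needs_coordinated_assessment(assigned))
```

Because the rules are data-driven and deterministic, two initiatives with the same characteristics always reach the same reviewers, which is the consistency that manual coordination cannot guarantee.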

The value of automation here extends beyond speed. When routing is manual and dependent on the knowledge and judgment of an individual coordinator, the quality of routing decisions is inherently variable. When routing is rules-based and automated, every initiative receives the same standard of triage regardless of who submitted it, when it was submitted or how busy the coordination team happens to be.

Clear Status Definitions: The Language of Transparent Governance

One of the most underrated elements of an effective cross-functional review process is a shared, well-defined set of status definitions. In the absence of clear status language, stakeholders are left to interpret ambiguous signals: a half-completed email thread, a meeting that has not yet been scheduled or a document that was reviewed but never formally responded to.

Effective AI governance processes define a small number of clear status states and enforce them consistently. An initiative should be in one of the following conditions at any point in time: new, in review, changes required, approved or rejected. Each status should carry a defined meaning, a defined owner and a defined set of actions that must occur before the status can progress.
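One way to enforce those five states consistently is a small state machine. The statuses below come from the text; the allowed transitions between them are an assumption made for illustration, and each organisation would define its own.

```python
from enum import Enum


class Status(Enum):
    NEW = "new"
    IN_REVIEW = "in review"
    CHANGES_REQUIRED = "changes required"
    APPROVED = "approved"
    REJECTED = "rejected"


# Assumed transition rules: which statuses may follow which.
# Approved and rejected are treated as final in this sketch.
ALLOWED_TRANSITIONS: dict[Status, set[Status]] = {
    Status.NEW: {Status.IN_REVIEW},
    Status.IN_REVIEW: {Status.CHANGES_REQUIRED, Status.APPROVED, Status.REJECTED},
    Status.CHANGES_REQUIRED: {Status.IN_REVIEW},
    Status.APPROVED: set(),
    Status.REJECTED: set(),
}


def advance(current: Status, target: Status) -> Status:
    """Return the new status, or raise ValueError if the move is not permitted."""
    if target not in ALLOWED_TRANSITIONS[current]:
        raise ValueError(f"Cannot move from '{current.value}' to '{target.value}'")
    return target
```

With the transitions written down, an initiative cannot jump from new to approved without passing through review, and every status change is a discrete event that can be logged.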

When status is transparent and consistently maintained, several important things happen. Business units know exactly where their initiative stands without needing to chase reviewers. Leadership can monitor the volume and velocity of the review pipeline. Bottlenecks become visible as initiatives accumulate in particular status states. And the entire review process becomes auditable, a property that is increasingly important as regulators begin to scrutinise not just the outcomes of AI governance but the processes by which those outcomes were reached.
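As a minimal illustration of how bottlenecks surface once status is recorded consistently, the sketch below counts initiatives per status and reports any status holding at least a chosen number of them. The function name and the threshold are hypothetical; a real pipeline view would also track how long each initiative has spent in its current status.

```python
from collections import Counter


def bottlenecks(statuses: list[str], threshold: int) -> list[str]:
    """Return, in alphabetical order, the statuses holding at least `threshold` initiatives."""
    counts = Counter(statuses)
    return sorted(status for status, n in counts.items() if n >= threshold)


if __name__ == "__main__":
    # Hypothetical snapshot of the current status of each open initiative.
    pipeline = ["new", "in review", "in review", "in review", "changes required"]
    print(bottlenecks(pipeline, threshold=3))  # ['in review']
```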

Better Risk Outcomes and Shorter Cycle Times Are Not a Trade-off

There is a common assumption in enterprise governance that thoroughness and speed are in tension, that moving faster necessarily means accepting more risk. The cross-functional AI review process is one area where this assumption can be confidently challenged.

Streamlined reviews with centralised intake, automated routing and clear status definitions do not compromise risk outcomes. They improve them. When all reviewing teams receive the same complete information at the same time, they can identify interconnected risks that sequential review processes miss entirely. When routing logic ensures that the right expertise is always engaged, the quality of assessment is more consistent. When status is transparent, issues are identified and escalated faster.

At the same time, cycle times fall dramatically. Initiatives that previously took eight to twelve weeks to navigate a fragmented email-driven process can move through a well-designed review workflow in two to three weeks. That reduction in cycle time translates directly into faster time-to-value for AI initiatives and a substantial reduction in the shadow AI behaviour that fragmented governance processes inevitably produce.

Embedding Streamlined Reviews into Your Governance Operating Model

The structural changes described here (centralised intake, automated routing and clear status definitions) are not aspirational. They are achievable, and they do not require a multi-year transformation programme to realise.

The starting point is an honest assessment of the current state. How many teams are currently involved in reviewing an AI initiative? How does each team receive the submission? What happens at each handoff? How long does each stage take? Where do initiatives stall most frequently and why?

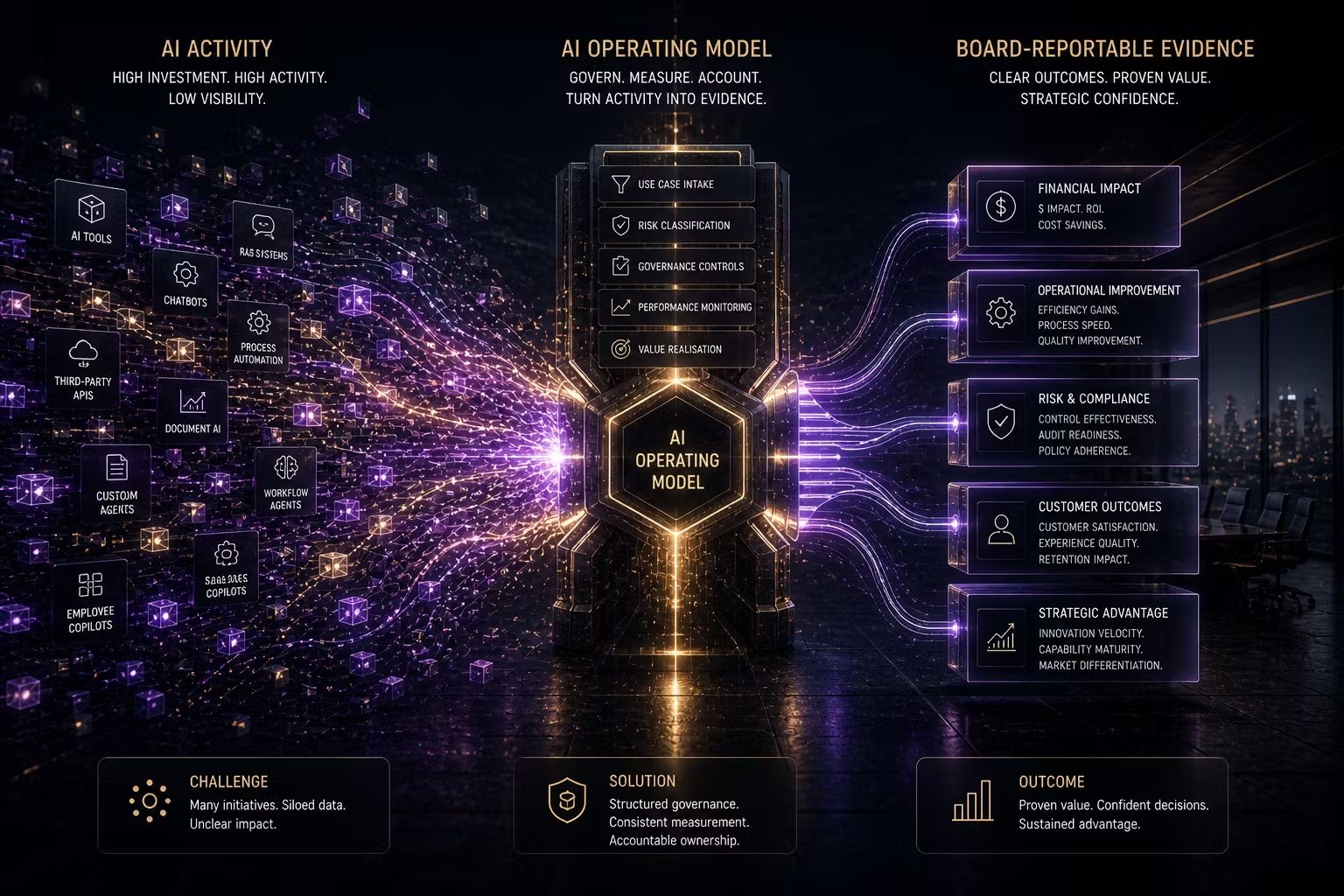

With a clear picture of the current process, it becomes possible to design an improved one and to identify the platform capabilities needed to support it. Purpose-built AI governance platforms provide the intake forms, routing logic and status tracking that transform fragmented email processes into structured, measurable workflows. The governance operating model is then built around those platform capabilities rather than relying on individual coordinators to hold the process together.

Looking Ahead

Cross-functional reviews are where AI governance either earns the trust of the business or loses it. A process that is slow, opaque and dependent on email is a process that business units will route around. A process that is fast, transparent and structured is a process that business units will engage with and that will genuinely improve the risk outcomes of AI deployment at scale.

How many emails does it currently take to get an AI initiative approved in your organisation?

Relevant Trusenta Products and Services

The cross-functional review challenges described in this post are exactly what TRUSENTA.IO AI Governance is built to address. The platform provides centralised use case intake, structured risk assessment workflows, automated routing across Legal, Privacy, Security, Risk and Technology teams and real-time status tracking, replacing email-driven processes with a single, transparent governance environment.

For organisations seeking to establish or mature the governance operating model that underpins these workflows, our AI Governance Maturity Uplift service delivers streamlined review frameworks, defined decision rights and TRUSENTA.IO configuration in a focused four-to-six-week engagement. Organisations operating at enterprise scale can explore our AI Governance Enterprise service for unified governance across multiple business units, regions and regulatory jurisdictions.

#CrossFunctional #ReviewWorkflow #AIApprovals #RiskAndCompliance

Author

Mark brings a rare blend of C-suite leadership and hands-on consulting experience to Trusenta. As a former SVP of Services, SVP of Business Operations, Managing Director and CIO, he brings a breadth of experience to his specialty: guiding organisations through AI strategy, governance and adoption, bridging ambition with practical execution. His focus is on helping clients embed AI responsibly, at scale and in service of real business outcomes.

https://www.linkedin.com/in/consult-mmiller/