
RAG, Agents and the Reality Gap: Architectural Patterns That Survive Production

Most organisations treat RAG as a stepping stone to agents. That framing is wrong and it leads to architectural decisions that create real production risk. This post examines what RAG and agents are actually for, how to bound agent behaviour as codified artefacts and what the OWASP Agentic Top 10 means for enterprise architects.

March 8, 2026

9 min read

There is a widely repeated framing in enterprise AI that goes something like this: you start with RAG, then once you are ready you graduate to agents. RAG is the beginner pattern. Agents are the advanced one. Maturity means moving from one to the other.

This framing is wrong, and wrong in a way that leads organisations to make architectural decisions that create genuine production risk. RAG and agents do not sit on a maturity curve. They solve fundamentally different problems. Treating them as sequential stages means deploying agents in situations that call for RAG and building RAG systems where the business need actually requires agents, often with consequences that only become visible in production.

What RAG Actually Is and What It Is For

Retrieval-augmented generation is a read pattern. It connects a language model to a knowledge source so that the model's responses are grounded in specific, current organisational information rather than its training data alone. The model retrieves relevant content from the knowledge source, uses it to inform its response and returns an answer that can be traced back to the source material.

RAG is the right architectural choice when the use case is about accessing and synthesising information. A customer service assistant that answers questions about product documentation. An internal knowledge tool that helps staff find policy guidance. A research assistant that summarises relevant material from a document repository. These are information retrieval use cases. The AI is reading and synthesising. It is not taking actions with side effects in the world.

This distinction matters architecturally. A RAG system that returns an incorrect or misleading answer is a quality problem. It can be detected, evaluated and remediated. The consequences are bounded. The system has not changed any state in any external system. It has produced an output that a human can assess and act on or discard.
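To make the read-only nature of the pattern concrete, here is a minimal sketch of the retrieve-then-ground step. It is illustrative only: the `Passage` type, the word-overlap scoring and the document identifiers are assumptions made for this example, and the model call itself is left out. A production system would typically use embedding-based retrieval.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Passage:
    source_id: str  # hypothetical document identifier, used for traceability
    text: str


def retrieve(query: str, corpus: list[Passage], k: int = 2) -> list[Passage]:
    """Rank passages by shared query words (toy scoring, not embeddings)."""
    terms = set(query.lower().split())
    scored = [(len(terms & set(p.text.lower().split())), p) for p in corpus]
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    return [p for score, p in ranked[:k] if score > 0]


def build_prompt(query: str, passages: list[Passage]) -> str:
    """Assemble a grounded prompt that keeps each source identifier visible."""
    context = "\n".join(f"[{p.source_id}] {p.text}" for p in passages)
    return f"Answer using only the sources below.\n{context}\n\nQuestion: {query}"


corpus = [
    Passage("policy-12", "annual leave requests need manager approval"),
    Passage("policy-07", "expense claims are due within 30 days"),
]
hits = retrieve("who approves annual leave", corpus)
prompt = build_prompt("who approves annual leave", hits)
```

Nothing in this path writes to an external system. The worst outcome is a poor answer, and the source identifiers in the prompt give a reviewer something to check it against.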

What Agents Actually Are and What They Are For

An AI agent is a system that can take actions with side effects. It does not just retrieve and synthesise information; it invokes tools that change state in the world. It sends emails, creates records, updates databases, calls APIs, executes code. The planning loop of an agent decides which tools to invoke, in what sequence, based on intermediate results that were not fully anticipated at design time.

Agents are the right architectural choice when the use case requires the AI to do something, not just know something. A procurement agent that identifies approved vendors, creates a purchase order and routes it for approval. A compliance monitoring agent that detects a potential policy violation, gathers supporting evidence and creates a case for human review. A code review agent that identifies issues, proposes fixes and raises pull requests. These use cases require action. RAG cannot address them.

The risk profile of agents is categorically different from RAG. When an agent takes an incorrect action, the consequences may be irreversible. A sent email cannot be unsent. A created record changes the state of a system that other processes depend on. The OWASP Agentic AI Top 10, published in December 2025, identifies the leading risks in agentic AI deployments, including goal hijacking (the agent is manipulated into pursuing objectives other than the ones it was designed for), excessive tool permissions (the agent has access to tools it should not be able to invoke) and inadequate human oversight (the agent takes consequential actions without appropriate human review checkpoints).

These are not theoretical risks. They are the risks that cause agentic AI projects to fail in production, generate incidents or get shut down entirely.

How to Bound Agent Behaviour as Codified Artefacts

The architectural response to these risks is to treat agent boundaries as codified artefacts: structured objects in the governance layer of the AI platform that define precisely what an agent is permitted to do, under what conditions and with what human oversight requirements.

A well-designed agent boundary artefact defines four things:

- The permitted tool set: the complete and explicit list of tools the agent can invoke, with no implicit inheritance from broader permissions.

- The human review conditions: the specific decision types, risk thresholds or output states that trigger a human-in-the-loop checkpoint before the agent proceeds.

- The maximum autonomy window: the number of planning steps or tool invocations the agent can make before it must surface its plan to a human for review.

- The escalation path: what happens when the agent encounters a situation outside its defined boundaries, including which human receives the escalation, through which channel and within what timeframe.
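One way to picture such an artefact is as a small immutable data structure. The sketch below is an assumption about shape, not a prescribed schema: the class names, field names and the example procurement values are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EscalationPath:
    recipient: str               # which human receives the escalation
    channel: str                 # through which channel
    respond_within_minutes: int  # within what timeframe


@dataclass(frozen=True)
class AgentBoundary:
    agent_id: str
    permitted_tools: frozenset[str]      # explicit allow-list, nothing inherited
    review_required_for: frozenset[str]  # decision types that trigger a checkpoint
    max_autonomy_steps: int              # tool invocations before surfacing the plan
    escalation: EscalationPath

    def allows(self, tool: str) -> bool:
        return tool in self.permitted_tools

    def needs_review(self, decision_type: str, steps_taken: int) -> bool:
        return (
            decision_type in self.review_required_for
            or steps_taken >= self.max_autonomy_steps
        )


# Hypothetical boundary for a procurement agent.
procurement = AgentBoundary(
    agent_id="procurement-agent",
    permitted_tools=frozenset({"lookup_vendor", "create_purchase_order"}),
    review_required_for=frozenset({"create_purchase_order"}),
    max_autonomy_steps=5,
    escalation=EscalationPath("procurement-lead", "ticket-queue", 60),
)
```

Making the object frozen is a deliberate choice in this sketch: the boundary cannot be mutated at runtime, so any change has to arrive as a new, reviewed version.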

These artefacts are not configuration comments in code. They are managed objects in the governance layer, subject to the same change control as any other critical architecture artefact. When a delivery team wants to expand an agent's tool set, that request surfaces the boundary artefact, triggers an impact assessment and routes to human approval before the change is made. When an agent is observed invoking a tool in a way that was not anticipated, the boundary artefact is the reference point for assessing whether the behaviour is a boundary violation requiring governance action.
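The change-control flow for a tool set expansion might look something like the following sketch. It assumes, for illustration, that any added tool requires human sign-off while removals do not; the `ChangeRequest` type and function names are hypothetical, and the impact assessment step is not modelled.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ChangeRequest:
    agent_id: str
    added_tools: frozenset[str]
    removed_tools: frozenset[str]

    @property
    def requires_approval(self) -> bool:
        # Assumption: any expansion of the tool set needs human sign-off.
        return bool(self.added_tools)


def propose_tool_change(
    agent_id: str, current: frozenset[str], proposed: frozenset[str]
) -> ChangeRequest:
    """Diff the current and proposed tool sets into a reviewable request."""
    return ChangeRequest(agent_id, proposed - current, current - proposed)


def apply_change(
    current: frozenset[str], request: ChangeRequest, approved_by: Optional[str] = None
) -> frozenset[str]:
    """Return the new tool set, refusing unapproved expansions."""
    if request.requires_approval and approved_by is None:
        raise PermissionError(f"{request.agent_id}: expansion needs human approval")
    return (current - request.removed_tools) | request.added_tools


current = frozenset({"lookup_vendor"})
request = propose_tool_change(
    "procurement-agent",
    current,
    frozenset({"lookup_vendor", "create_purchase_order"}),
)
```

Calling `apply_change(current, request)` without an approver raises `PermissionError`; passing `approved_by="architecture-board"` (a made-up approver) returns the expanded set.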

The Decision Framework: When to Use RAG and When to Use Agents

How do you decide which pattern fits a given use case? The decision turns on three questions.

Information versus action. Does the use case require the AI to know something and tell a human, or does it require the AI to do something in an external system? If the answer is knowing and telling, RAG is almost certainly the right starting point. If the answer is doing, you need to consider agents.

Deterministic versus adaptive. Does the use case have a predictable, well-defined path from input to output? Or does it require the AI to adapt its approach based on intermediate results that cannot be fully anticipated? Deterministic workflows can often be addressed with structured prompting or workflow automation without agentic planning loops. Adaptive workflows with genuine decision branching based on real-time state may require agents.

Consequence of error. What happens if the AI gets it wrong? If the consequence is a poor answer that a human can assess and discard, the risk profile is manageable with good evaluation and quality controls. If the consequence is an irreversible action in an external system, the risk profile demands the full agent boundary governance framework: explicit tool scoping, human checkpoints, sandboxed execution environments and a tested escalation path.
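The three questions can be read as a small decision function. The sketch below is one possible encoding, not a definitive rule set: the function name, the boolean inputs and the choice to attach the full control set to any irreversible action (agent or workflow) are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Recommendation:
    pattern: str
    controls: tuple[str, ...]


QUALITY_CONTROLS = ("evaluation and quality controls",)
BOUNDARY_CONTROLS = (
    "explicit tool scoping",
    "human checkpoints",
    "sandboxed execution",
    "tested escalation path",
)


def recommend(
    acts_on_external_system: bool,
    needs_adaptive_planning: bool,
    error_is_irreversible: bool,
) -> Recommendation:
    # Question 1: information versus action.
    if not acts_on_external_system:
        return Recommendation("RAG", QUALITY_CONTROLS)
    # Question 2: deterministic versus adaptive.
    pattern = "agent" if needs_adaptive_planning else "workflow automation"
    # Question 3: consequence of error.
    controls = BOUNDARY_CONTROLS if error_is_irreversible else QUALITY_CONTROLS
    return Recommendation(pattern, controls)


policy_assistant = recommend(False, False, False)
procurement_agent = recommend(True, True, True)
```

Here `policy_assistant.pattern` is `"RAG"`, while `procurement_agent` comes back as an agent carrying all four boundary controls. Real use cases rarely reduce to three booleans, so treat this as a conversation aid, not an automated gate.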

OWASP Agentic AI Top 10: What Enterprise Architects Must Act On

The OWASP Agentic AI Top 10 provides a structured taxonomy of the risks that matter most in production agentic deployments. For enterprise architects, the three that require immediate architectural response are:

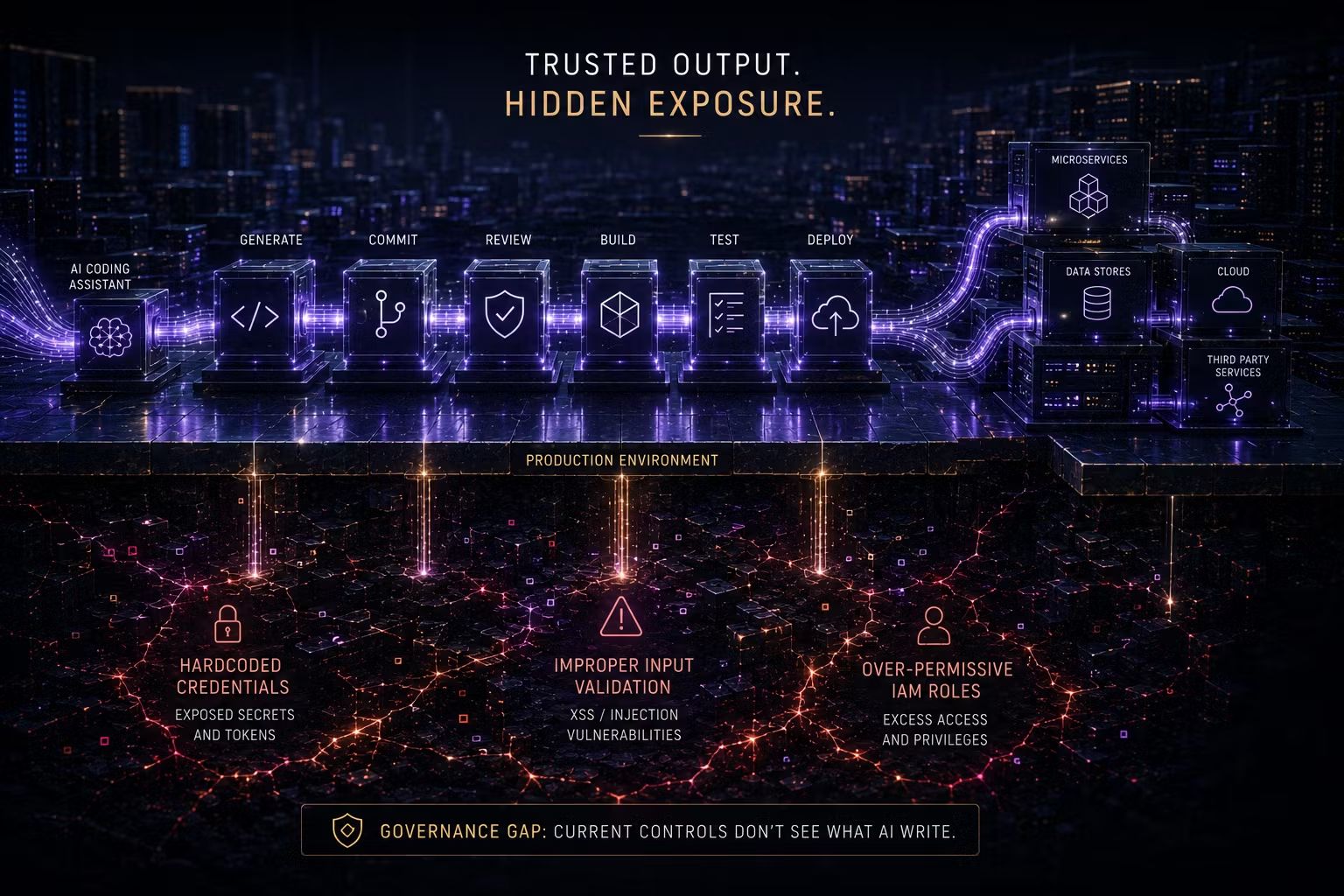

Goal hijacking (prompt injection at the agent level). An attacker or a malformed input causes the agent to pursue a goal other than the one it was designed for. The architectural defence is strict input validation at every tool invocation, output validation against the agent's defined objective and anomaly detection that flags behaviour diverging from the intended planning trajectory. These are codified in the evaluation and safety layer of the platform.
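As a rough illustration of input validation at the tool boundary, the sketch below checks every argument of a proposed tool call against declared rules before anything executes. The `send_email` tool, the `example.com` domain rule and the length limit are hypothetical, and this covers only the input validation part of the defence, not output validation or anomaly detection.

```python
from typing import Any, Callable

Validator = Callable[[Any], bool]

# Hypothetical per-tool argument rules; a real platform would load these
# from its governance layer.
TOOL_SCHEMAS: dict[str, dict[str, Validator]] = {
    "send_email": {
        "to": lambda v: isinstance(v, str) and v.endswith("@example.com"),
        "body": lambda v: isinstance(v, str) and len(v) <= 2000,
    },
}


def validate_call(tool: str, args: dict[str, Any]) -> list[str]:
    """Return a list of problems; an empty list means the call may proceed."""
    schema = TOOL_SCHEMAS.get(tool)
    if schema is None:
        return [f"unknown tool: {tool}"]
    problems = [f"unexpected argument: {name}" for name in args if name not in schema]
    problems += [f"missing argument: {name}" for name in schema if name not in args]
    problems += [
        f"invalid value for: {name}"
        for name, check in schema.items()
        if name in args and not check(args[name])
    ]
    return problems


ok = validate_call("send_email", {"to": "ops@example.com", "body": "Report attached."})
hijacked = validate_call("send_email", {"to": "attacker@evil.test", "body": "secrets"})
```

In this example `ok` is empty, while `hijacked` reports an invalid `to` value, so an injected instruction to mail an outside address is stopped before the tool runs.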

Excessive tool permissions. The agent has access to tools beyond what its use case requires, which means a compromised or misbehaving agent can cause harm beyond its intended scope. The architectural defence is least-privilege tool scoping, codified in the boundary artefact and enforced at the orchestration layer. Every tool permission is explicit. There are no implicit permissions inherited from user roles or service accounts.
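Enforcement at the orchestration layer could be sketched as a gateway that every tool call must pass through. The `ToolGateway` class and the vendor tools below are hypothetical; the point is the default-deny behaviour, where an agent with no entry in the allow-lists can invoke nothing.

```python
from typing import Callable


class ToolGateway:
    """Hypothetical orchestration-layer gate: default deny, explicit allow."""

    def __init__(
        self,
        tools: dict[str, Callable[..., object]],
        allow_lists: dict[str, frozenset[str]],
    ) -> None:
        self._tools = tools
        self._allow_lists = allow_lists

    def invoke(self, agent_id: str, tool: str, **kwargs: object) -> object:
        # An agent with no allow-list entry gets an empty set, not a default role.
        allowed = self._allow_lists.get(agent_id, frozenset())
        if tool not in allowed or tool not in self._tools:
            raise PermissionError(f"{agent_id} may not invoke {tool}")
        return self._tools[tool](**kwargs)


gateway = ToolGateway(
    tools={
        "lookup_vendor": lambda name: f"vendor:{name}",
        "delete_vendor": lambda name: f"deleted:{name}",
    },
    allow_lists={"procurement-agent": frozenset({"lookup_vendor"})},
)
result = gateway.invoke("procurement-agent", "lookup_vendor", name="acme")
```

The registered `delete_vendor` tool exists on the platform, but the procurement agent cannot reach it because its permission was never made explicit.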

Inadequate human oversight. The agent takes consequential actions without human review. The architectural defence is mandatory human-in-the-loop checkpoints for defined decision types, codified in the boundary artefact and enforced before the agent is permitted to proceed. These checkpoints are not optional design choices left to individual teams. They are governance requirements applied at the platform level.
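A platform-level checkpoint can be sketched as a single execution path that refuses to run reviewable actions without a named approver. The decision types, the `PendingApproval` exception and the payment example are assumptions for illustration; a real platform would persist the pending action and notify the reviewer.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Hypothetical decision types that always need a human checkpoint.
REVIEW_REQUIRED = frozenset({"payment", "external_email"})


class PendingApproval(Exception):
    """Raised when an action is held for human review."""


@dataclass(frozen=True)
class Action:
    decision_type: str
    run: Callable[[], str]


def execute(action: Action, approved_by: Optional[str] = None) -> str:
    """Run the action only if its checkpoint, if any, has been cleared."""
    if action.decision_type in REVIEW_REQUIRED and approved_by is None:
        raise PendingApproval(f"{action.decision_type} is waiting for review")
    return action.run()


sent: list[str] = []


def send_payment() -> str:
    sent.append("invoice-001")  # stands in for an irreversible side effect
    return "paid"


payment = Action("payment", send_payment)
```

Calling `execute(payment)` raises `PendingApproval` and leaves `sent` empty. Only `execute(payment, approved_by="finance-lead")` triggers the side effect. Because the check lives in `execute` and not in each agent, delivery teams cannot opt out of it.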

The Trusenta Perspective

We see organisations get into trouble with agents in a consistent pattern: the use case is genuinely appropriate for agentic AI, the model performs well in testing and the deployment goes live with enthusiasm. Then, in production, the agent encounters a situation outside its test distribution. It makes a decision that a human would not have made. The action has side effects that are difficult or impossible to reverse. The incident response process surfaces the fact that there were no codified boundary artefacts, no human-in-the-loop checkpoints for the decision type in question and no audit trail that allows the sequence of events to be reconstructed.
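The missing audit trail is the easiest of these gaps to illustrate. The sketch below records each agent event in order and chains entries by hash so that later edits are detectable. The hash chain is an addition made for this example, not something the pattern above requires, and the `AuditLog` class and event names are hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, field


def _digest(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


@dataclass
class AuditLog:
    entries: list[dict] = field(default_factory=list)

    def record(self, agent_id: str, event: str, detail: dict) -> dict:
        """Append an event, linking it to the hash of the previous entry."""
        body = {
            "seq": len(self.entries),
            "agent_id": agent_id,
            "event": event,
            "detail": detail,
            "prev_hash": self.entries[-1]["hash"] if self.entries else "",
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Check ordering, chain links and content hashes for every entry."""
        prev_hash = ""
        for seq, entry in enumerate(self.entries):
            body = {k: v for k, v in entry.items() if k != "hash"}
            if (
                entry["seq"] != seq
                or entry["prev_hash"] != prev_hash
                or entry["hash"] != _digest(body)
            ):
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.record("procurement-agent", "tool_invoked", {"tool": "lookup_vendor"})
log.record("procurement-agent", "review_requested", {"decision": "create_purchase_order"})
```

With entries like these, an incident reviewer can replay what the agent did, in what order, and confirm through `log.verify()` that the record has not been altered since.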

The fix is not to stop using agents. It is to govern them properly from the start. Codified boundary artefacts, enforced at the platform's governance and standards layer, are how you capture the value of agentic AI without accepting the risks that come with ungoverned autonomy.

Key Takeaways

- RAG and agents are not a maturity progression; they solve different problems. RAG is a read pattern for information retrieval; agents are required when the AI must take actions with side effects in the world.

- The risk profile of agents is categorically different from RAG. Incorrect agent actions may be irreversible; the OWASP Agentic Top 10 identifies goal hijacking, excessive tool permissions and inadequate human oversight as the leading risks.

- Agent boundaries should be codified artefacts in the governance layer: managed, change-controlled objects that define permitted tool sets, human review conditions, maximum autonomy windows and escalation paths.

- The decision to use RAG versus agents turns on three questions: information versus action, deterministic versus adaptive workflow and the consequence of error.

- Human-in-the-loop checkpoints for consequential agent decisions are governance requirements, not optional design choices, and they must be enforced at the platform level, not left to individual delivery teams.

Coming Up in Part 5: AI Governance as Architecture

In Part 5, Shane Coetser maps NIST AI RMF, ISO 42001, the EU AI Act and OWASP controls directly to the codified EA artefacts and platform enforcement mechanisms we have discussed across this series, showing how treating governance as architecture, rather than as a compliance function that runs alongside it, is what makes these frameworks achievable in practice.

How Trusenta Can Help

Enterprise Architecture in the AI Era covers agent boundary design as a core component of the platform architecture engagement, including the codified artefact templates, change workflow design and platform enforcement patterns that keep agentic AI safe in production.

Risk Management provides the risk taxonomy and control linkage framework that makes OWASP Agentic Top 10 risks manageable. Each risk category maps to a set of codified controls that can be assessed against your current agent deployments, with treatment tracking and residual risk visibility that your risk and compliance teams require.

Author

Mark brings a rare blend of C-suite leadership and hands-on consulting experience to Trusenta. As a former SVP of Services, SVP of Business Operations, Managing Director and CIO, he brings a breadth of experience to his specialty: guiding organisations through AI strategy, governance and adoption, and bridging ambition with practical execution. His focus is on helping clients embed AI responsibly, at scale and in service of real business outcomes.

https://www.linkedin.com/in/consult-mmiller/